Ayana

Neural Headline Generation with Sentence-wise Optimization

Oct 09, 2016

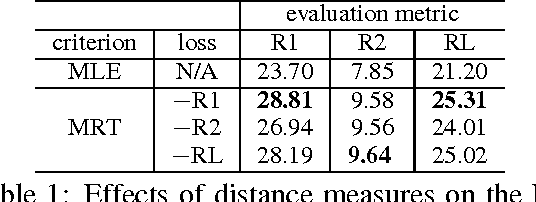

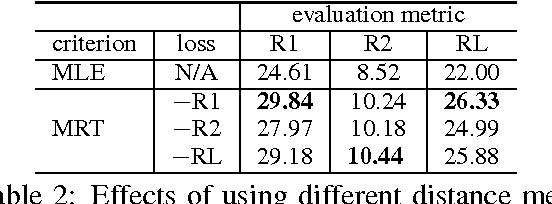

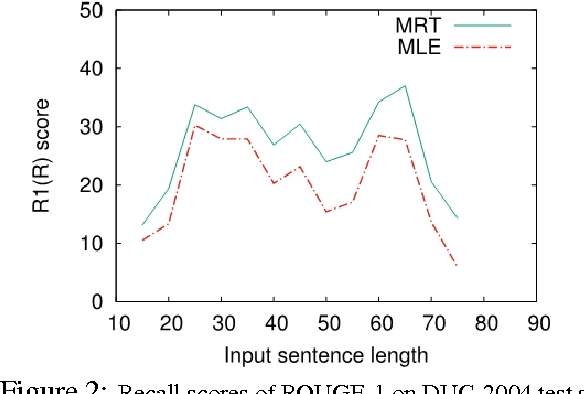

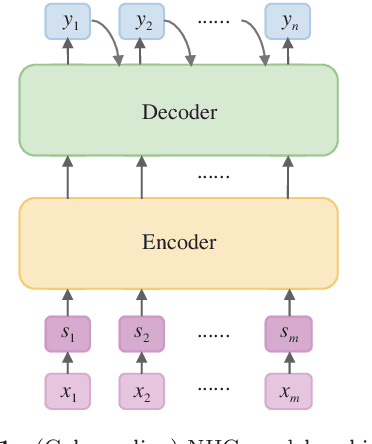

Abstract: Recently, neural models have been proposed for headline generation by learning to map documents to headlines with recurrent neural networks. Nevertheless, because traditional neural networks use maximum likelihood estimation for parameter optimization, the training objective is essentially constrained to the word level rather than the sentence level. Moreover, the performance of model prediction relies significantly on the training data distribution. To overcome these drawbacks, we employ a minimum risk training strategy in this paper, which directly optimizes model parameters at the sentence level with respect to evaluation metrics and leads to significant improvements for headline generation. Experimental results show that our models outperform state-of-the-art systems on both English and Chinese headline generation tasks.
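
The sentence-level objective described in this abstract can be illustrated with a minimal sketch. It assumes the common formulation of minimum risk training, in which the expected risk is computed over a small set of sampled candidate headlines whose model probabilities are sharpened by an exponent and renormalized. The function names, the `alpha` parameter, and the unigram-recall score (a crude stand-in for a ROUGE-style metric) are illustrative assumptions, not details taken from the paper.

```python
import math
from collections import Counter


def unigram_recall(candidate, reference):
    """Fraction of reference tokens covered by the candidate (stand-in for ROUGE-1 recall)."""
    cand = Counter(candidate.split())
    ref = Counter(reference.split())
    overlap = sum((cand & ref).values())  # clipped token overlap
    return overlap / max(sum(ref.values()), 1)


def mrt_expected_risk(log_probs, risks, alpha=1.0):
    """Expected risk over a sampled candidate set.

    log_probs: model log-probabilities of each candidate headline (assumed given).
    risks: per-candidate risk, e.g. 1 - metric score against the reference.
    alpha: hypothetical sharpness parameter applied before renormalizing.
    """
    scaled = [alpha * lp for lp in log_probs]
    m = max(scaled)  # subtract the max for numerical stability
    weights = [math.exp(s - m) for s in scaled]
    z = sum(weights)
    return sum((w / z) * r for w, r in zip(weights, risks))


# Two equally likely candidates, scored against one reference headline.
reference = "police arrest suspect"
candidates = ["police arrest suspect", "weather stays mild"]
risks = [1.0 - unigram_recall(c, reference) for c in candidates]
expected = mrt_expected_risk([0.0, 0.0], risks)
```

In a real training loop the expected risk would be differentiated with respect to the model parameters; the sketch only shows how a sentence-level metric enters the objective, in contrast to a per-word likelihood.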

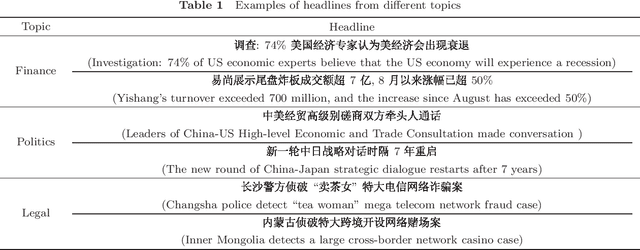

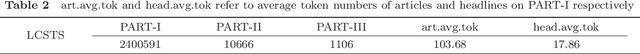

Topic Sensitive Neural Headline Generation

Aug 20, 2016

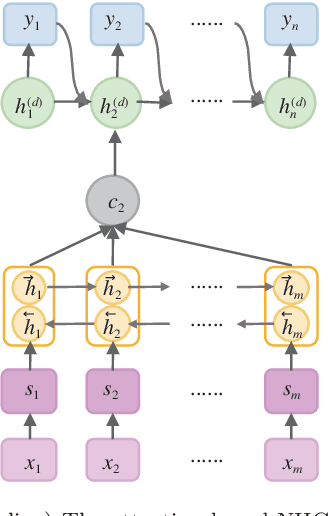

Abstract: Neural models have recently been used in text summarization, including headline generation. The model can be trained using a set of document-headline pairs. However, the model does not explicitly consider topical similarities and differences between documents. We suggest categorizing documents into various topics so that documents within the same topic are similar in content and share similar summarization patterns. Taking advantage of the topic information of documents, we propose a topic-sensitive neural headline generation model. Our model can generate more accurate summaries guided by document topics. We test our model on the LCSTS dataset, and experiments show that our method outperforms other baselines on each topic and achieves state-of-the-art performance.
