Aviad Zabatani

SimuGAN: Unsupervised forward modeling and optimal design of a LIDAR Camera

Dec 16, 2020

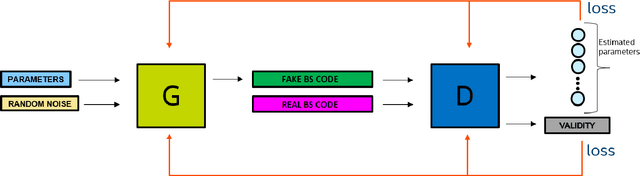

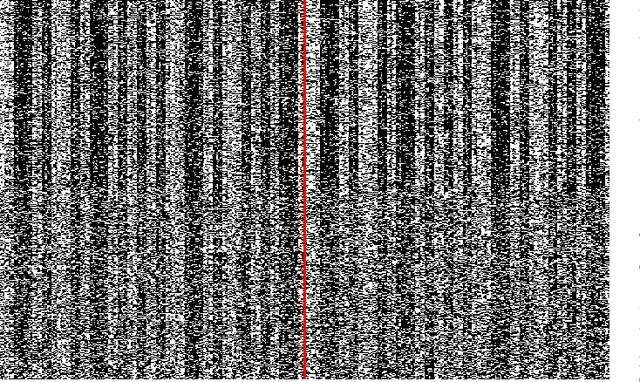

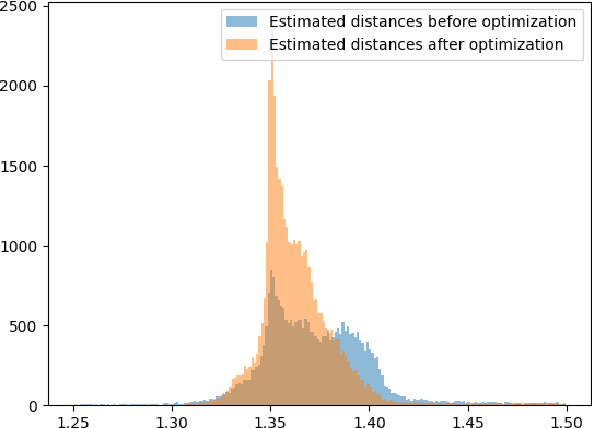

Abstract: An energy-saving LIDAR camera for short distances estimates an object's distance by emitting temporally intensity-coded laser light pulses and computing the maximum correlation with the back-scattered pulse. At low power, however, the back-scattered pulse is noisy and unstable, which leads to inaccurate and unreliable depth estimation. To address this problem, we use GANs (Generative Adversarial Networks), which consist of two neural networks that learn complicated class distributions through an adversarial process. We learn the LIDAR camera's hidden properties and behavior, creating a novel, fully unsupervised forward model that simulates the camera. Then, we use the model's differentiability to explore the camera parameter space and optimize those parameters in terms of depth, accuracy, and stability. To achieve this goal, we also propose a new custom loss function designed for the back-scattered code distribution's weaknesses and its circular behavior. The results are demonstrated on both synthetic and real data.
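
The correlation-based depth estimate mentioned in the abstract can be illustrated with a small sketch. This is not the paper's implementation: the bipolar code, the additive Gaussian noise model, and the function names `circular_correlation`, `estimate_delay`, and `simulate_return` are all assumptions made for illustration. The sketch assumes the emitted code repeats periodically, so a delay wraps around the code length, which is one plausible reading of the "circular behavior" the abstract refers to.

```python
import random


def circular_correlation(code, received):
    """Correlate `received` against every circular shift of `code`."""
    n = len(code)
    return [
        sum(code[(i - shift) % n] * received[i] for i in range(n))
        for shift in range(n)
    ]


def estimate_delay(code, received):
    """Return the circular shift (in samples) with the maximum correlation."""
    scores = circular_correlation(code, received)
    return max(range(len(scores)), key=scores.__getitem__)


def simulate_return(code, delay, attenuation, noise_std, rng):
    """Hypothetical back-scattered signal: a delayed, attenuated, noisy code."""
    n = len(code)
    return [
        attenuation * code[(i - delay) % n] + rng.gauss(0.0, noise_std)
        for i in range(n)
    ]


# Assumed setup: a random 64-sample bipolar code and a weak, noisy return.
rng = random.Random(0)
code = [rng.choice((-1.0, 1.0)) for _ in range(64)]
true_delay = 17
received = simulate_return(code, true_delay, attenuation=0.5,
                           noise_std=0.2, rng=rng)
estimated = estimate_delay(code, received)
print("true delay:", true_delay, "estimated delay:", estimated)
```

Because the delay is only defined modulo the code length, an error metric on it arguably has to treat the first and last shifts as neighbors, which may be why the abstract calls for a loss that accounts for circular behavior.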
