Ashraf Matrawy

LLMs' Suitability for Network Security: A Case Study of STRIDE Threat Modeling

May 07, 2025Abstract:Artificial Intelligence (AI) is expected to be an integral part of next-generation AI-native 6G networks. With the prevalence of AI, researchers have identified numerous use cases of AI in network security. However, there are almost nonexistent studies that analyze the suitability of Large Language Models (LLMs) in network security. To fill this gap, we examine the suitability of LLMs in network security, particularly with the case study of STRIDE threat modeling. We utilize four prompting techniques with five LLMs to perform STRIDE classification of 5G threats. From our evaluation results, we point out key findings and detailed insights along with the explanation of the possible underlying factors influencing the behavior of LLMs in the modeling of certain threats. The numerical results and the insights support the necessity for adjusting and fine-tuning LLMs for network security use cases.

Introducing Perturb-ability Score (PS) to Enhance Robustness Against Evasion Adversarial Attacks on ML-NIDS

Sep 11, 2024

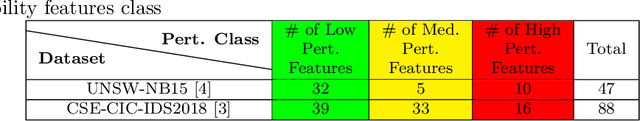

Abstract:This paper proposes a novel Perturb-ability Score (PS) that can be used to identify Network Intrusion Detection Systems (NIDS) features that can be easily manipulated by attackers in the problem-space. We demonstrate that using PS to select only non-perturb-able features for ML-based NIDS maintains detection performance while enhancing robustness against adversarial attacks.

Introducing Adaptive Continuous Adversarial Training (ACAT) to Enhance ML Robustness

Mar 15, 2024Abstract:Machine Learning (ML) is susceptible to adversarial attacks that aim to trick ML models, making them produce faulty predictions. Adversarial training was found to increase the robustness of ML models against these attacks. However, in network and cybersecurity, obtaining labeled training and adversarial training data is challenging and costly. Furthermore, concept drift deepens the challenge, particularly in dynamic domains like network and cybersecurity, and requires various models to conduct periodic retraining. This letter introduces Adaptive Continuous Adversarial Training (ACAT) to continuously integrate adversarial training samples into the model during ongoing learning sessions, using real-world detected adversarial data, to enhance model resilience against evolving adversarial threats. ACAT is an adaptive defense mechanism that utilizes periodic retraining to effectively counter adversarial attacks while mitigating catastrophic forgetting. Our approach also reduces the total time required for adversarial sample detection, especially in environments such as network security where the rate of attacks could be very high. Traditional detection processes that involve two stages may result in lengthy procedures. Experimental results using a SPAM detection dataset demonstrate that with ACAT, the accuracy of the SPAM filter increased from 69% to over 88% after just three retraining sessions. Furthermore, ACAT outperforms conventional adversarial sample detectors, providing faster decision times, up to four times faster in some cases.

Adversarial Evasion Attacks Practicality in Networks: Testing the Impact of Dynamic Learning

Jun 08, 2023Abstract:Machine Learning (ML) has become ubiquitous, and its deployment in Network Intrusion Detection Systems (NIDS) is inevitable due to its automated nature and high accuracy in processing and classifying large volumes of data. However, ML has been found to have several flaws, on top of them are adversarial attacks, which aim to trick ML models into producing faulty predictions. While most adversarial attack research focuses on computer vision datasets, recent studies have explored the practicality of such attacks against ML-based network security entities, especially NIDS. This paper presents two distinct contributions: a taxonomy of practicality issues associated with adversarial attacks against ML-based NIDS and an investigation of the impact of continuous training on adversarial attacks against NIDS. Our experiments indicate that continuous re-training, even without adversarial training, can reduce the effect of adversarial attacks. While adversarial attacks can harm ML-based NIDSs, our aim is to highlight that there is a significant gap between research and real-world practicality in this domain which requires attention.

Evaluating Resilience of Encrypted Traffic Classification Against Adversarial Evasion Attacks

May 30, 2021

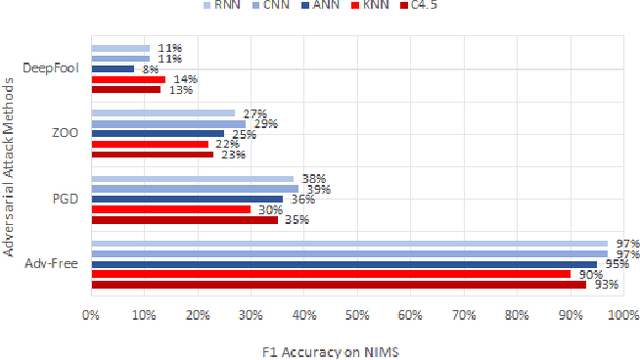

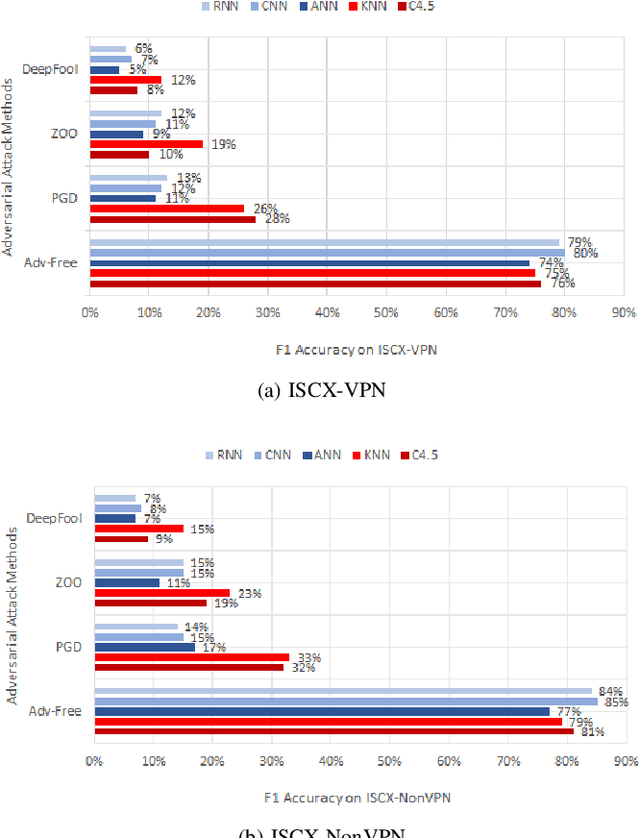

Abstract:Machine learning and deep learning algorithms can be used to classify encrypted Internet traffic. Classification of encrypted traffic can become more challenging in the presence of adversarial attacks that target the learning algorithms. In this paper, we focus on investigating the effectiveness of different evasion attacks and see how resilient machine and deep learning algorithms are. Namely, we test C4.5 Decision Tree, K-Nearest Neighbor (KNN), Artificial Neural Network (ANN), Convolutional Neural Networks (CNN) and Recurrent Neural Networks (RNN). In most of our experimental results, deep learning shows better resilience against the adversarial samples in comparison to machine learning. Whereas, the impact of the attack varies depending on the type of attack.

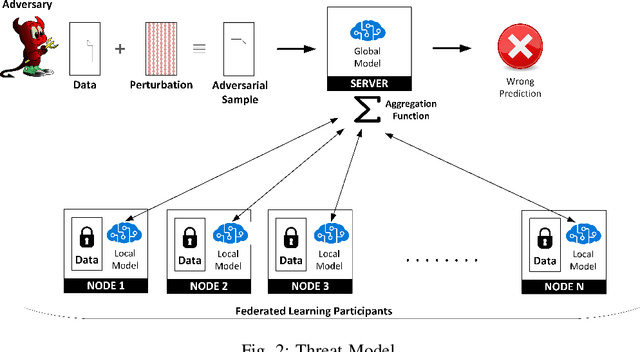

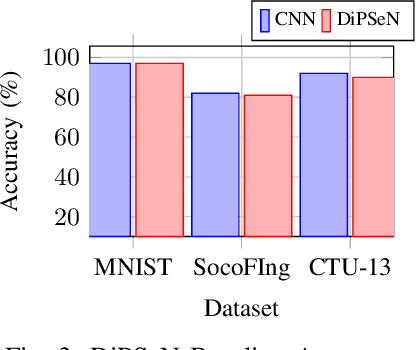

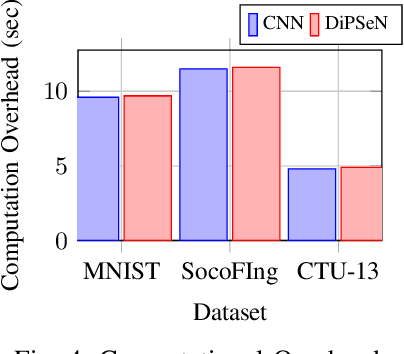

DiPSeN: Differentially Private Self-normalizing Neural Networks For Adversarial Robustness in Federated Learning

Jan 08, 2021

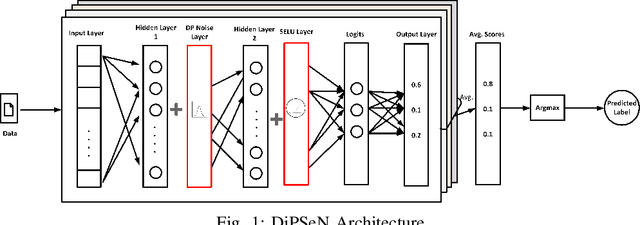

Abstract:The need for robust, secure and private machine learning is an important goal for realizing the full potential of the Internet of Things (IoT). Federated learning has proven to help protect against privacy violations and information leakage. However, it introduces new risk vectors which make machine learning models more difficult to defend against adversarial samples. In this study, we examine the role of differential privacy and self-normalization in mitigating the risk of adversarial samples specifically in a federated learning environment. We introduce DiPSeN, a Differentially Private Self-normalizing Neural Network which combines elements of differential privacy noise with self-normalizing techniques. Our empirical results on three publicly available datasets show that DiPSeN successfully improves the adversarial robustness of a deep learning classifier in a federated learning environment based on several evaluation metrics.

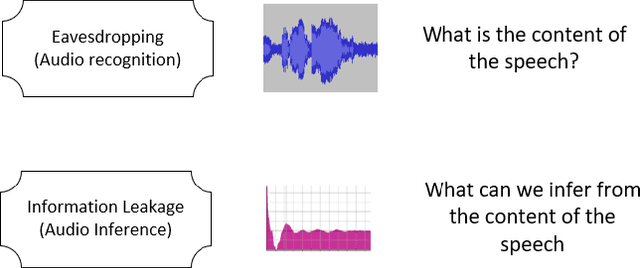

A GAN-based Approach for Mitigating Inference Attacks in Smart Home Environment

Nov 13, 2020

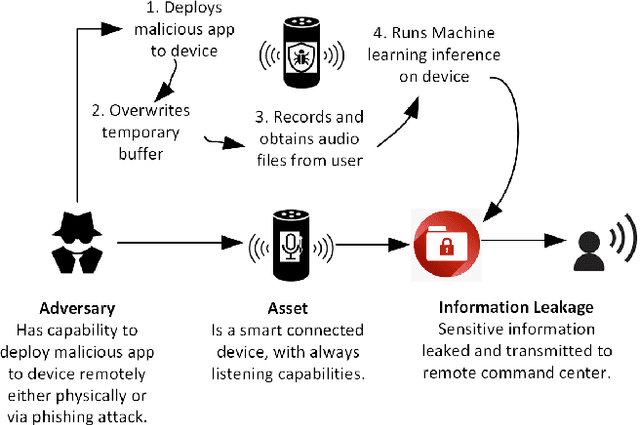

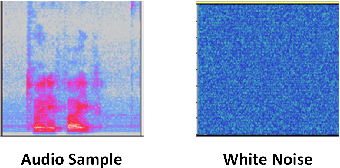

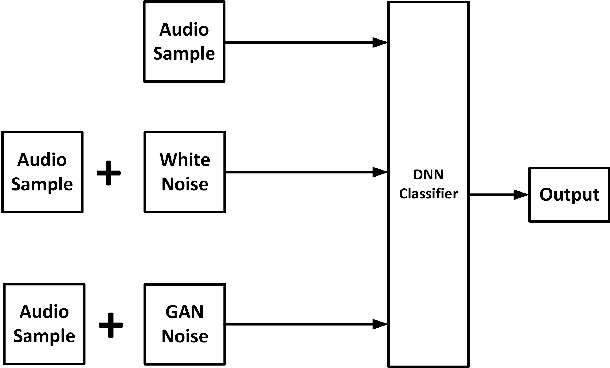

Abstract:The proliferation of smart, connected, always listening devices have introduced significant privacy risks to users in a smart home environment. Beyond the notable risk of eavesdropping, intruders can adopt machine learning techniques to infer sensitive information from audio recordings on these devices, resulting in a new dimension of privacy concerns and attack variables to smart home users. Techniques such as sound masking and microphone jamming have been effectively used to prevent eavesdroppers from listening in to private conversations. In this study, we explore the problem of adversaries spying on smart home users to infer sensitive information with the aid of machine learning techniques. We then analyze the role of randomness in the effectiveness of sound masking for mitigating sensitive information leakage. We propose a Generative Adversarial Network (GAN) based approach for privacy preservation in smart homes which generates random noise to distort the unwanted machine learning-based inference. Our experimental results demonstrate that GANs can be used to generate more effective sound masking noise signals which exhibit more randomness and effectively mitigate deep learning-based inference attacks while preserving the semantics of the audio samples.

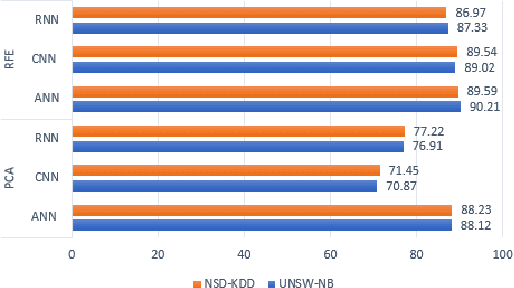

Evaluation of Adversarial Training on Different Types of Neural Networks in Deep Learning-based IDSs

Jul 08, 2020

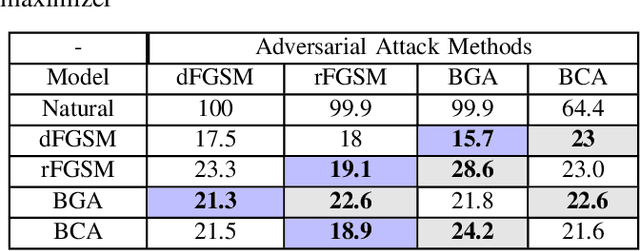

Abstract:Network security applications, including intrusion detection systems of deep neural networks, are increasing rapidly to make detection task of anomaly activities more accurate and robust. With the rapid increase of using DNN and the volume of data traveling through systems, different growing types of adversarial attacks to defeat them create a severe challenge. In this paper, we focus on investigating the effectiveness of different evasion attacks and how to train a resilience deep learning-based IDS using different Neural networks, e.g., convolutional neural networks (CNN) and recurrent neural networks (RNN). We use the min-max approach to formulate the problem of training robust IDS against adversarial examples using two benchmark datasets. Our experiments on different deep learning algorithms and different benchmark datasets demonstrate that defense using an adversarial training-based min-max approach improves the robustness against the five well-known adversarial attack methods.

The Threat of Adversarial Attacks on Machine Learning in Network Security -- A Survey

Nov 06, 2019

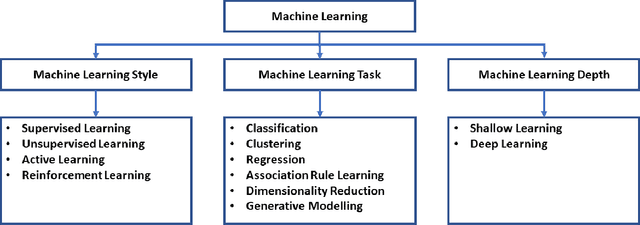

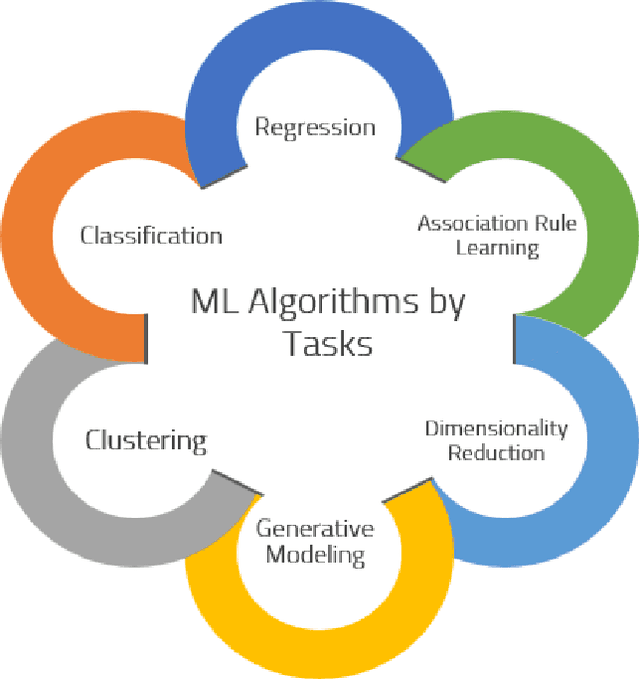

Abstract:Machine learning models have made many decision support systems to be faster, more accurate and more efficient. However, applications of machine learning in network security face more disproportionate threat of active adversarial attacks compared to other domains. This is because machine learning applications in network security such as malware detection, intrusion detection, and spam filtering are by themselves adversarial in nature. In what could be considered an arms race between attackers and defenders, adversaries constantly probe machine learning systems with inputs which are explicitly designed to bypass the system and induce a wrong prediction. In this survey, we first provide a taxonomy of machine learning techniques, styles, and algorithms. We then introduce a classification of machine learning in network security applications. Next, we examine various adversarial attacks against machine learning in network security and introduce two classification approaches for adversarial attacks in network security. First, we classify adversarial attacks in network security based on a taxonomy of network security applications. Secondly, we categorize adversarial attacks in network security into a problem space vs. feature space dimensional classification model. We then analyze the various defenses against adversarial attacks on machine learning-based network security applications. We conclude by introducing an adversarial risk model and evaluate several existing adversarial attacks against machine learning in network security using the risk model. We also identify where each attack classification resides within the adversarial risk model

Investigating Resistance of Deep Learning-based IDS against Adversaries using min-max Optimization

Oct 30, 2019

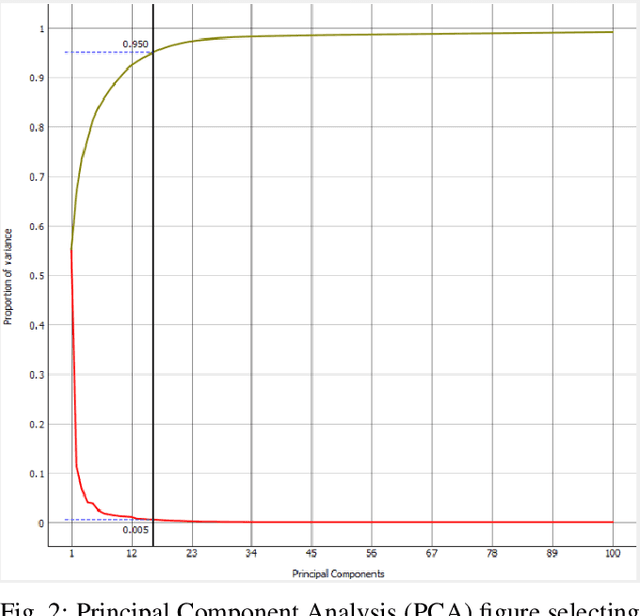

Abstract:With the growth of adversarial attacks against machine learning models, several concerns have emerged about potential vulnerabilities in designing deep neural network-based intrusion detection systems (IDS). In this paper, we study the resilience of deep learning-based intrusion detection systems against adversarial attacks. We apply the min-max (or saddle-point) approach to train intrusion detection systems against adversarial attack samples in NSW-NB 15 dataset. We have the max approach for generating adversarial samples that achieves maximum loss and attack deep neural networks. On the other side, we utilize the existing min approach [2] [9] as a defense strategy to optimize intrusion detection systems that minimize the loss of the incorporated adversarial samples during the adversarial training. We study and measure the effectiveness of the adversarial attack methods as well as the resistance of the adversarially trained models against such attacks. We find that the adversarial attack methods that were designed in binary domains can be used in continuous domains and exhibit different misclassification levels. We finally show that principal component analysis (PCA) based feature reduction can boost the robustness in intrusion detection system (IDS) using a deep neural network (DNN).

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge