Arash Kalatian

Multi-task Recurrent Neural Networks to Simultaneously Infer Mode and Purpose in GPS Trajectories

Oct 23, 2021

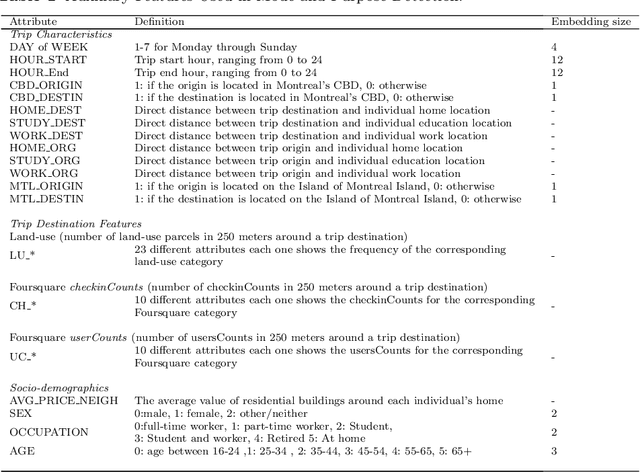

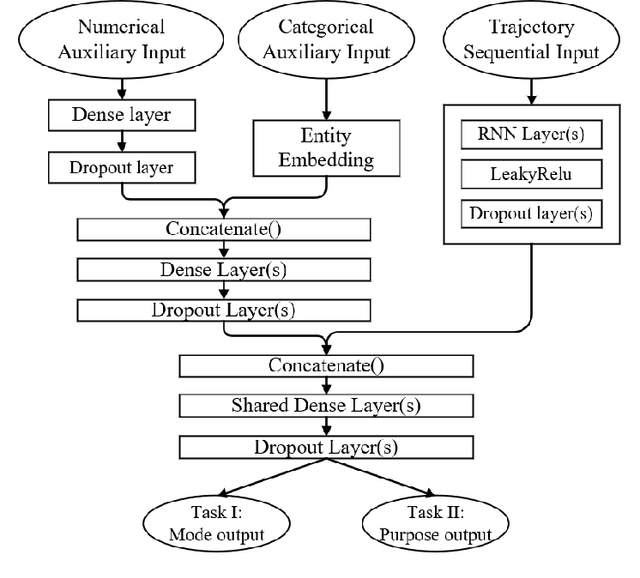

Abstract: Multi-task learning is widely assumed to be a powerful inference method: where multiple tasks are considerably correlated, predicting them in a unified framework may improve prediction results. This research challenges that assumption by developing several single-task models and comparing their results against multi-task learners that infer trip mode and purpose from data collected in a smartphone-based travel survey. GPS trajectory data, along with socio-demographics and destination-related characteristics, are fed into a multi-input neural network framework to predict two outputs: mode and purpose. We deploy Recurrent Neural Networks (RNN) that are fed sequential GPS trajectories. To process the socio-demographics and destination-related characteristics, another neural network, with different embedding and dense layers, is used in parallel with the RNN layers in a multi-input multi-output framework. The results are compared against single-task learners that classify mode and purpose independently. We also investigate different RNN approaches, namely Long Short-Term Memory (LSTM), Gated Recurrent Units (GRU) and Bi-directional Gated Recurrent Units (Bi-GRU). The best multi-task learner was a Bi-GRU model that classified mode and purpose with F1-measures of 84.33% and 78.28%, respectively. The best single-task learner for mode of transport was a GRU model with an F1-measure of 86.50%, and the best single-task purpose detection model was a Bi-GRU with an F1-measure of 77.38%. Although multi-task learners are often assumed to outperform single-task learners, the results of this study do not support that assumption: in the context of mode and trip purpose inference from GPS trajectory data, a multi-task learning approach brings no considerable advantage over single-task learners.
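To make the multi-output idea concrete, the following is a minimal sketch of a joint objective for two classification heads that share one representation. It is not the authors' implementation: the function names, the equal default task weights, and the use of a plain weighted sum of cross-entropies are all assumptions for illustration, and the shared vector stands in for whatever the RNN and dense branches would produce.

```python
import math

def softmax(logits):
    """Numerically stable softmax over a list of logits."""
    peak = max(logits)
    exps = [math.exp(z - peak) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]

def linear(weights, bias, x):
    """Dense layer: one output per row of `weights`."""
    return [sum(w * xi for w, xi in zip(row, x)) + b
            for row, b in zip(weights, bias)]

def cross_entropy(probs, label):
    """Negative log-probability assigned to the true class index."""
    return -math.log(probs[label])

def multitask_loss(shared, mode_head, purpose_head,
                   mode_label, purpose_label,
                   w_mode=1.0, w_purpose=1.0):
    """Weighted sum of the two task losses (hypothetical weighting).

    `shared` is the shared feature vector; each head is a
    (weights, bias) pair applied to that same vector.
    """
    mode_probs = softmax(linear(mode_head[0], mode_head[1], shared))
    purpose_probs = softmax(linear(purpose_head[0], purpose_head[1], shared))
    return (w_mode * cross_entropy(mode_probs, mode_label)
            + w_purpose * cross_entropy(purpose_probs, purpose_label))

# Toy usage: 2 shared features, 3 hypothetical modes, 2 hypothetical purposes.
shared = [0.5, -1.0]
mode_head = ([[0.0, 0.0], [0.0, 0.0], [0.0, 0.0]], [0.0, 0.0, 0.0])
purpose_head = ([[0.0, 0.0], [0.0, 0.0]], [0.0, 0.0])
loss = multitask_loss(shared, mode_head, purpose_head, 0, 1)
```

A single-task learner corresponds to setting one of the two weights to zero, so that only one head contributes to the objective.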

Decoding pedestrian and automated vehicle interactions using immersive virtual reality and interpretable deep learning

Feb 18, 2020

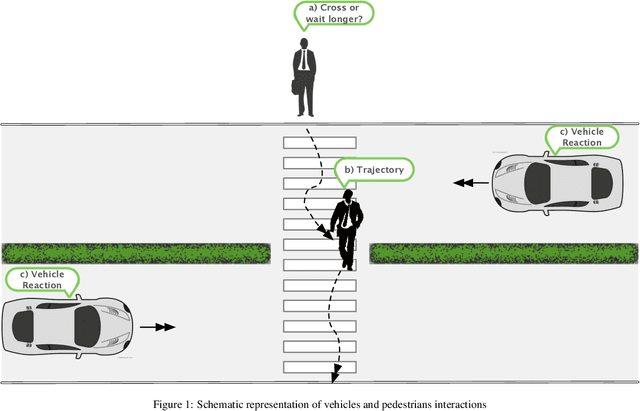

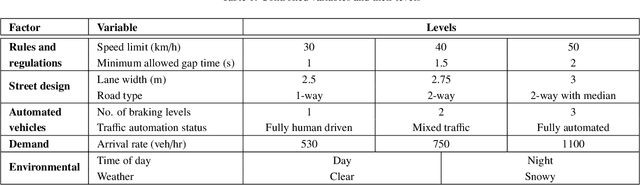

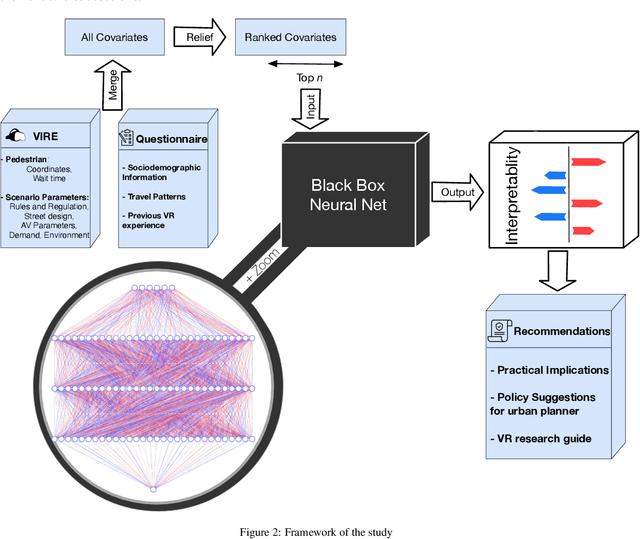

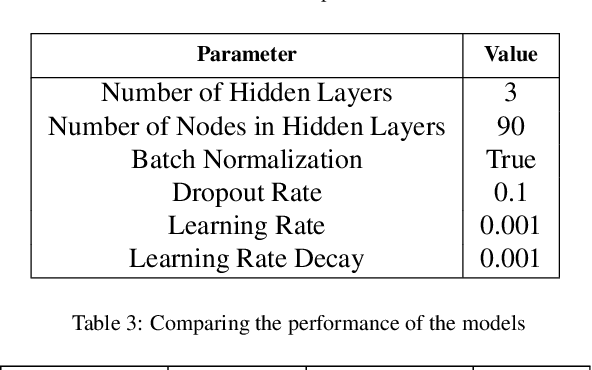

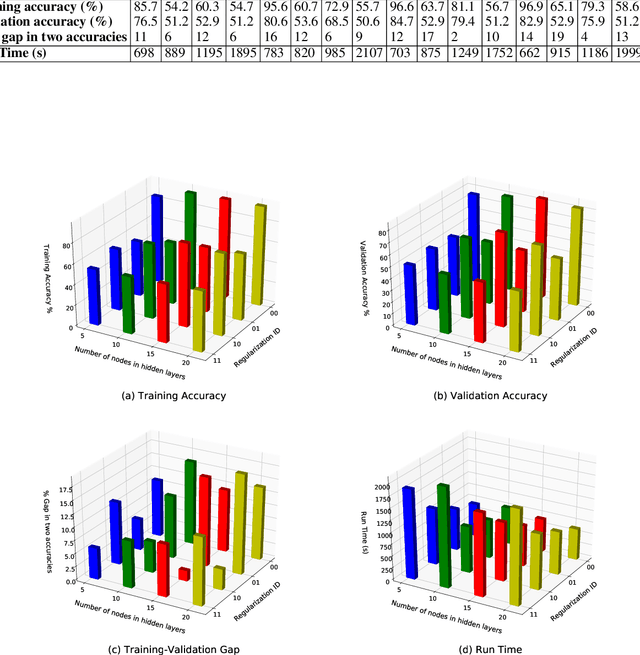

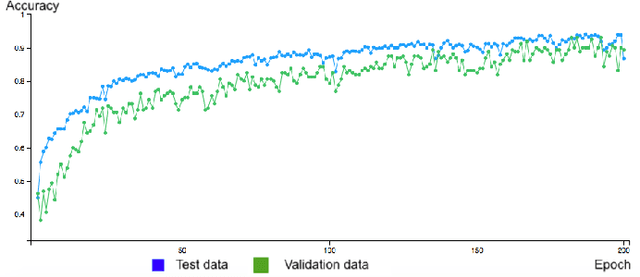

Abstract: To ensure pedestrian-friendly streets in the era of automated vehicles, the current policies, practices, design, rules and regulations of urban areas need to be reassessed. This study investigates pedestrian crossing behaviour, an important element of urban dynamics that is expected to be affected by the presence of automated vehicles. For this purpose, an interpretable machine learning framework is proposed to explore the factors affecting pedestrians' wait time before crossing mid-block crosswalks in the presence of automated vehicles. To collect rich behavioural data, we developed a dynamic and immersive virtual reality experiment, with 180 participants from a heterogeneous population in 4 different locations in the Greater Toronto Area (GTA). Pedestrian wait time behaviour is then analyzed using a data-driven Cox Proportional Hazards (CPH) model, in which the linear combination of the covariates is replaced by a flexible non-linear deep neural network. The proposed model achieved a 5% improvement in goodness of fit and, more importantly, enabled us to incorporate a richer set of covariates. A game-theoretic interpretability method is used to understand the contribution of different covariates to the time pedestrians wait before crossing. Results show that the presence of automated vehicles on roads, wider lane widths, high density on roads, limited sight distance, and lack of walking habits are the main contributing factors to longer wait times. Our study suggests that, to move towards pedestrian-friendly urban areas, national-level educational programs for children, enhanced safety measures for seniors, promotion of active modes of transportation, and revised traffic rules and regulations should be considered.
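As an illustration of replacing the linear predictor of a Cox model with a neural network, the sketch below evaluates the standard Cox negative partial log-likelihood on scores from a small one-hidden-layer network. It is a sketch under assumptions, not the paper's model: the network shape, the `tanh` activation, the averaging over events, and the Breslow-style handling of tied times are choices made here for brevity, and an "event" is taken to mean an observed crossing.

```python
import math

def log_risk(x, w_hidden, b_hidden, w_out, b_out):
    """Non-linear log-risk score from a one-hidden-layer tanh network.

    In a classical Cox model this would be a linear combination of `x`.
    """
    hidden = [math.tanh(sum(w * xi for w, xi in zip(row, x)) + b)
              for row, b in zip(w_hidden, b_hidden)]
    return sum(w * h for w, h in zip(w_out, hidden)) + b_out

def neg_partial_log_likelihood(risks, times, events):
    """Average negative Cox partial log-likelihood.

    risks:  log-risk score per participant
    times:  observed wait time per participant
    events: 1 if the crossing was observed, 0 if censored
    """
    total = 0.0
    n_events = 0
    for i, (t_i, e_i) in enumerate(zip(times, events)):
        if not e_i:
            continue  # censored observations only appear in risk sets
        # Risk set: everyone still waiting at time t_i.
        denominator = sum(math.exp(risks[j])
                          for j, t_j in enumerate(times) if t_j >= t_i)
        total += risks[i] - math.log(denominator)
        n_events += 1
    return -total / n_events if n_events else 0.0

# Toy usage with equal scores: the loss reduces to log(3!)/3.
toy_loss = neg_partial_log_likelihood([0.0, 0.0, 0.0], [1.0, 2.0, 3.0], [1, 1, 1])
```

Training would minimise this quantity with respect to the network weights; the optimiser is omitted here.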

DeepSurvival: Pedestrian Wait Time Estimation in Mixed Traffic Conditions Using Deep Survival Analysis

Apr 16, 2019

Abstract: Pedestrians' road-crossing behaviour is one of the important aspects of urban dynamics that will be affected by the introduction of autonomous vehicles. In this study we introduce DeepSurvival, a novel framework for estimating pedestrians' waiting time at unsignalized mid-block crosswalks in mixed traffic conditions. We exploit the strengths of deep learning in capturing the nonlinearities in the data and develop a Cox proportional hazards model with a deep neural network as the log-risk function. An embedded feature selection algorithm for reducing data dimensionality and enhancing the interpretability of the network is also developed. We test our framework on a dataset collected from 160 participants using an immersive virtual reality environment. Validation results showed that, with a C-index of 0.64, our proposed framework outperformed the standard Cox proportional hazards model, which had a C-index of 0.58.
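The C-index reported above measures how often a model ranks pairs of participants correctly. A minimal sketch of one common definition (Harrell's concordance index) follows; the function name and the tie convention (equal scores count as half-concordant) are assumptions here, and the abstract does not state which variant the authors used.

```python
def concordance_index(times, events, risks):
    """Fraction of comparable pairs ranked correctly by the risk scores.

    A pair (i, j) is comparable when participant i's event was observed
    and occurred strictly before participant j's observed time. The pair
    is concordant when the earlier event has the higher risk score.
    """
    concordant = 0.0
    comparable = 0
    n = len(times)
    for i in range(n):
        if not events[i]:
            continue  # a censored time cannot anchor a comparison
        for j in range(n):
            if times[i] < times[j]:
                comparable += 1
                if risks[i] > risks[j]:
                    concordant += 1.0
                elif risks[i] == risks[j]:
                    concordant += 0.5  # tied scores count as half
    return concordant / comparable if comparable else float("nan")

# Toy usage: higher risk paired with shorter wait gives perfect concordance.
perfect = concordance_index([1.0, 2.0, 3.0], [1, 1, 1], [3.0, 2.0, 1.0])
```

Under this definition a value of 0.5 corresponds to random ranking and 1.0 to perfect ranking.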

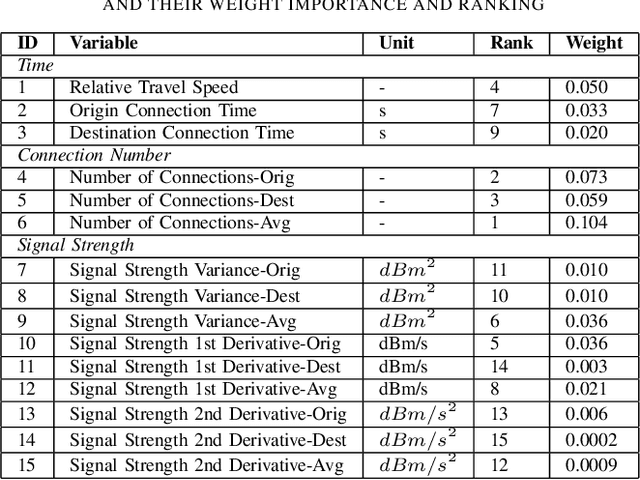

A semi-supervised deep residual network for mode detection in Wi-Fi signals

Feb 17, 2019

Abstract: Due to their ubiquitous and pervasive nature, Wi-Fi networks have the potential to collect large-scale, low-cost, and disaggregate data on multimodal transportation. In this study, we develop a semi-supervised deep residual network (ResNet) framework that uses Wi-Fi communications obtained from smartphones for transportation mode detection. This framework is evaluated on data collected by Wi-Fi sensors located in a congested urban area in downtown Toronto. To tackle the intrinsic difficulties and costs associated with labelled data collection, we use an ample amount of easily collected, low-cost unlabelled data by implementing the semi-supervised part of the framework. By incorporating a ResNet architecture as the core of the framework, we take advantage of high-level features not considered in traditional machine learning frameworks. The proposed framework shows promising performance on the collected data, with a prediction accuracy of 81.8% for walking, 82.5% for biking and 86.0% for driving.
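The defining feature of a ResNet is the skip connection, which adds a block's input back to its transformed output. The sketch below shows that idea with two small dense layers; it is an illustration only, since the abstract does not specify the layer types or sizes used, and every name and shape here is an assumption.

```python
def relu(values):
    """Element-wise rectified linear unit."""
    return [max(0.0, v) for v in values]

def dense(weights, bias, x):
    """Dense layer: one output per row of `weights`."""
    return [sum(w * xi for w, xi in zip(row, x)) + b
            for row, b in zip(weights, bias)]

def residual_block(x, w1, b1, w2, b2):
    """Compute relu(x + F(x)), where F is dense -> relu -> dense.

    The second layer must map back to len(x) so the sum is defined.
    """
    hidden = relu(dense(w1, b1, x))
    transformed = dense(w2, b2, hidden)
    return relu([xi + ti for xi, ti in zip(x, transformed)])

# Toy usage: with all-zero weights F(x) = 0, so the block passes relu(x) through.
x = [1.0, -2.0, 3.0]
zeros_3x3 = [[0.0] * 3 for _ in range(3)]
out = residual_block(x, zeros_3x3, [0.0] * 3, zeros_3x3, [0.0] * 3)
```

Because the input is carried forward unchanged, a block whose weights are near zero behaves close to an identity mapping, which is what makes deep stacks of such blocks easier to train.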

Mobility Mode Detection Using WiFi Signals

Sep 16, 2018

Abstract: We use Wi-Fi communications from smartphones to predict their mobility mode, i.e. walking, biking or driving. Wi-Fi sensors were deployed at four strategic locations in a closed loop on streets in downtown Toronto. A deep neural network (Multilayer Perceptron) and three decision-tree-based classifiers (Decision Tree, Bagged Decision Tree and Random Forest) are developed. Results show that the best prediction accuracy is achieved by the Multilayer Perceptron, with 86.52% correct predictions of mobility modes.
