Andres Upegui

A unified software/hardware scalable architecture for brain-inspired computing based on self-organizing neural models

Jan 06, 2022

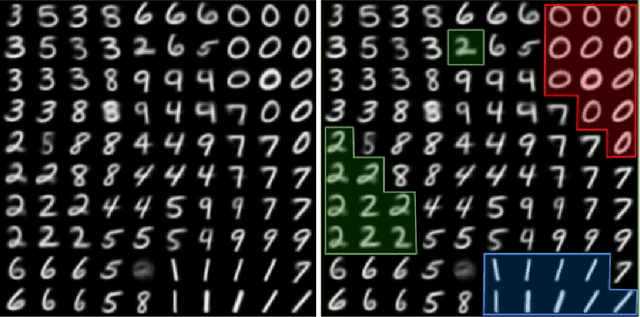

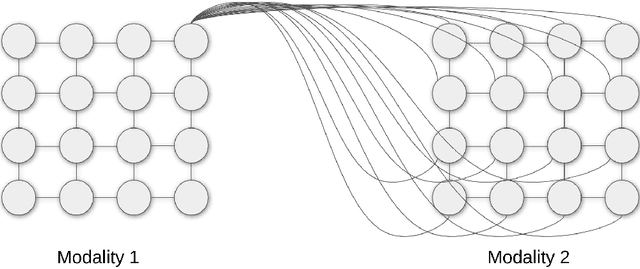

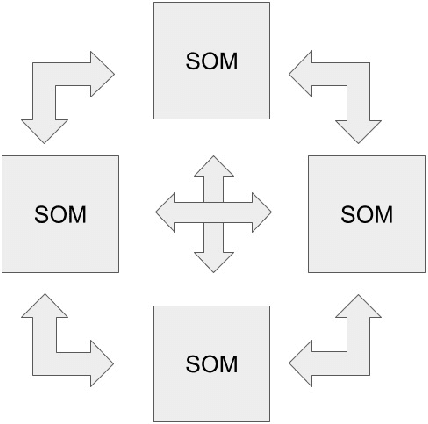

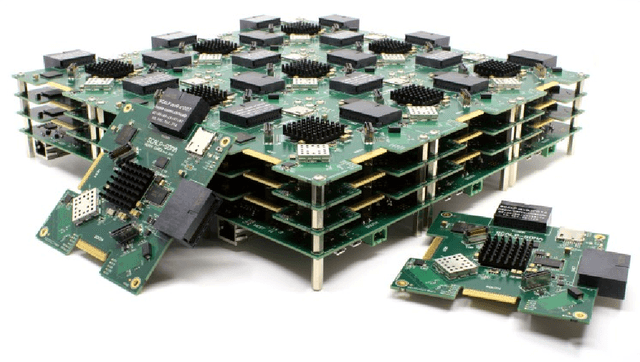

Abstract:The field of artificial intelligence has significantly advanced over the past decades, inspired by discoveries from the fields of biology and neuroscience. The idea of this work is inspired by the process of self-organization of cortical areas in the human brain from both afferent and lateral/internal connections. In this work, we develop an original brain-inspired neural model associating Self-Organizing Maps (SOM) and Hebbian learning in the Reentrant SOM (ReSOM) model. The framework is applied to multimodal classification problems. Compared to existing methods based on unsupervised learning with post-labeling, the model enhances the state-of-the-art results. This work also demonstrates the distributed and scalable nature of the model through both simulation results and hardware execution on a dedicated FPGA-based platform named SCALP (Self-configurable 3D Cellular Adaptive Platform). SCALP boards can be interconnected in a modular way to support the structure of the neural model. Such a unified software and hardware approach enables the processing to be scaled and allows information from several modalities to be merged dynamically. The deployment on hardware boards provides performance results of parallel execution on several devices, with the communication between each board through dedicated serial links. The proposed unified architecture, composed of the ReSOM model and the SCALP hardware platform, demonstrates a significant increase in accuracy thanks to multimodal association, and a good trade-off between latency and power consumption compared to a centralized GPU implementation.

Neuromorphic hardware as a self-organizing computing system

Oct 30, 2018

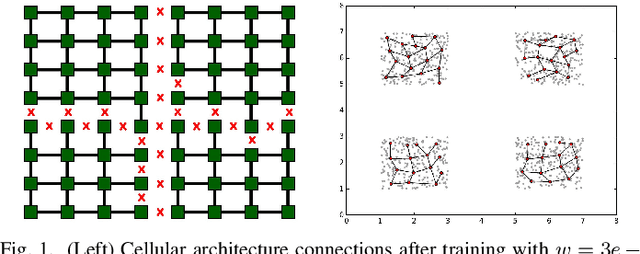

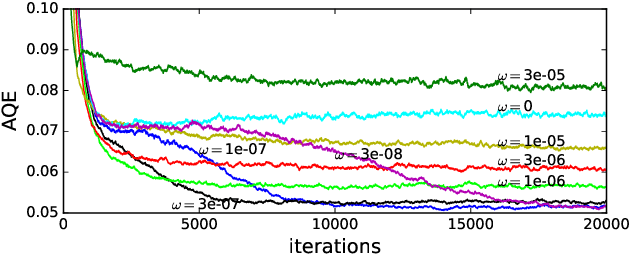

Abstract:This paper presents the self-organized neuromorphic architecture named SOMA. The objective is to study neural-based self-organization in computing systems and to prove the feasibility of a self-organizing hardware structure. Considering that these properties emerge from large scale and fully connected neural maps, we will focus on the definition of a self-organizing hardware architecture based on digital spiking neurons that offer hardware efficiency. From a biological point of view, this corresponds to a combination of the so-called synaptic and structural plasticities. We intend to define computational models able to simultaneously self-organize at both computation and communication levels, and we want these models to be hardware-compliant, fault tolerant and scalable by means of a neuro-cellular structure.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge