Andres Sanchez-Perez

Aggregation of predictors for nonstationary sub-linear processes and online adaptive forecasting of time varying autoregressive processes

Nov 17, 2015

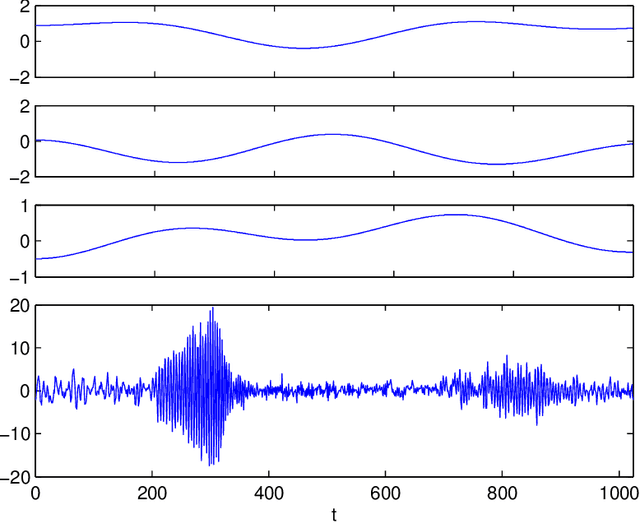

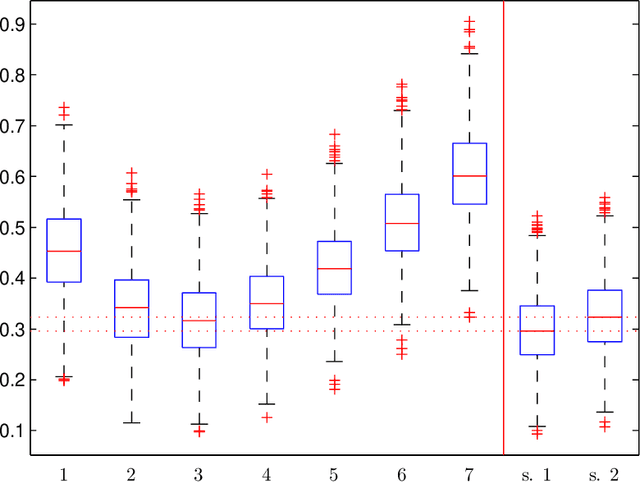

Abstract: In this work, we study the problem of aggregating a finite number of predictors for nonstationary sub-linear processes. We provide oracle inequalities relying essentially on three ingredients: (1) a uniform bound on the $\ell^1$ norm of the time varying sub-linear coefficients, (2) a Lipschitz assumption on the predictors and (3) moment conditions on the noise appearing in the linear representation. Two kinds of aggregation are considered, giving rise to different moment conditions on the noise and to more or less sharp oracle inequalities. We apply this approach to derive an adaptive predictor for locally stationary time varying autoregressive (TVAR) processes. It is obtained by aggregating a finite number of well chosen predictors, each of which enjoys an optimal minimax convergence rate under specific smoothness conditions on the TVAR coefficients. We show that the resulting aggregated predictor achieves a minimax rate while adapting to the unknown smoothness. To prove this result, a lower bound is established for the minimax rate of the prediction risk for the TVAR process. Numerical experiments complete this study. An important feature of this approach is that the aggregated predictor can be computed recursively and is thus applicable in an online prediction context.

* Published at http://dx.doi.org/10.1214/15-AOS1345 in the Annals of Statistics (http://www.imstat.org/aos/) by the Institute of Mathematical Statistics (http://www.imstat.org)
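
To illustrate what recursive computation of an aggregated predictor can look like, here is a minimal Python sketch. The abstract does not state the aggregation rule, so the sketch assumes exponential weighting with a squared prediction loss, which is one common choice and may differ from the paper's. The function name `aggregate_online`, the learning rate `eta` and the predictor interface (a callable that maps the list of past observations to a forecast) are hypothetical and introduced only for this example.

```python
import math

def aggregate_online(observations, predictors, eta=1.0):
    """Aggregate a finite set of predictors online (illustrative sketch).

    Assumption: exponential weights with squared loss; the abstract
    does not specify the aggregation rule.
    """
    # Log-weights start at zero, i.e. uniform weights over the predictors.
    log_w = [0.0] * len(predictors)
    forecasts = []
    for t, x_t in enumerate(observations):
        past = observations[:t]
        # Each predictor forecasts x_t from the past only.
        preds = [p(past) for p in predictors]
        # Normalise in a numerically stable way before mixing.
        m = max(log_w)
        w = [math.exp(lw - m) for lw in log_w]
        total = sum(w)
        forecasts.append(sum(wi * pi for wi, pi in zip(w, preds)) / total)
        # Recursive update: once x_t is revealed, penalise each predictor
        # by its squared error. Only the current weights are stored.
        log_w = [lw - eta * (pi - x_t) ** 2 for lw, pi in zip(log_w, preds)]
    return forecasts
```

Each step costs one pass over the predictors and keeps only the current weights, which is what makes such a scheme usable online. For instance, with two constant predictors (0 and 1) and observations all equal to 1, the first forecast is the uniform average 0.5 and later forecasts move towards 1.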