Andrei Nicolicioiu

Dynamic Regions Graph Neural Networks for Spatio-Temporal Reasoning

Sep 17, 2020

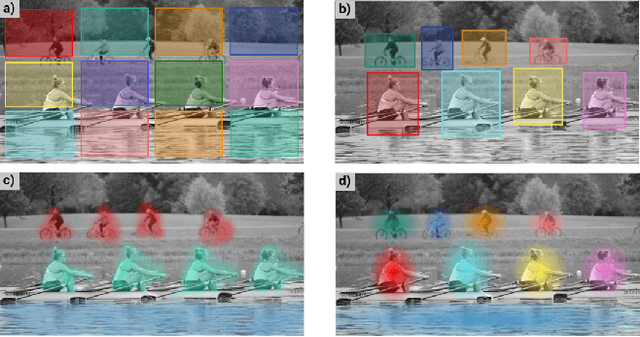

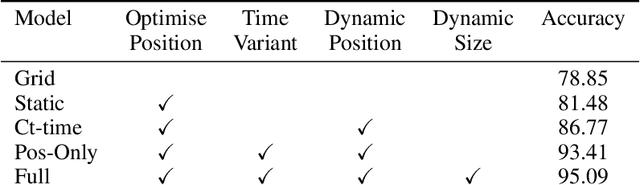

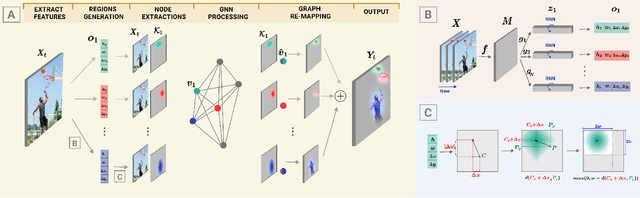

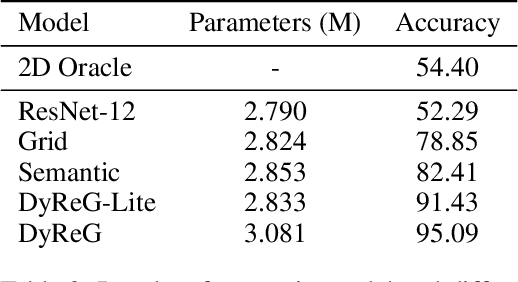

Abstract: Graph Neural Networks are perfectly suited to capture latent interactions occurring in the spatio-temporal domain. But when an explicit structure is not available, as in the visual domain, it is not obvious what atomic elements should be represented as nodes. They should depend on the context and the kinds of relations that we are interested in. We focus on modeling relations between instances by proposing a method that takes advantage of the locality assumption to create nodes that are clearly localized in space. Current works use external object detectors or fixed regions to extract features corresponding to graph nodes, while we propose a module for generating the regions associated with each node dynamically, without explicit object-level supervision. Conditioned on the input, for each node we predict the location and size of a region and use them to pool node features using a differentiable mechanism. Constructing these localized, adaptive nodes makes our model biased towards object-centric representations, and we show that it improves the modeling of visual interactions. By relying on a few localized nodes, our method learns to focus on salient regions, leading to a more explainable model. Our model achieves superior results on video classification tasks involving instance interactions.
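As a rough illustration of the idea of pooling node features from a predicted location and size, the sketch below weights a feature map with a separable triangular kernel. The kernel shape, the function names `region_kernel` and `pool_region`, and the use of NumPy are assumptions made for this example; the abstract does not specify the actual pooling mechanism.

```python
import numpy as np

def region_kernel(length, center, half_size):
    """Triangular weights over positions 0..length-1, peaked at `center`.

    Hypothetical kernel choice: weights fall off linearly and reach zero
    at distance `half_size`, so they vary smoothly with center and size.
    """
    pos = np.arange(length, dtype=np.float64)
    weights = np.maximum(0.0, 1.0 - np.abs(pos - center) / half_size)
    total = weights.sum()
    # Normalize so the pooled value is a weighted average of the features.
    return weights / total if total > 0 else weights

def pool_region(features, cx, cy, half_w, half_h):
    """Pool an (H, W, C) feature map into one C-dimensional node vector."""
    height, width, _ = features.shape
    ky = region_kernel(height, cy, half_h)  # weights over rows
    kx = region_kernel(width, cx, half_w)   # weights over columns
    # Weighted sum over all spatial positions, kept separate per channel.
    return np.einsum("i,j,ijc->c", ky, kx, features)

if __name__ == "__main__":
    feature_map = np.random.rand(8, 8, 3)
    node = pool_region(feature_map, cx=3.5, cy=3.5, half_w=2.0, half_h=2.0)
    print(node.shape)
```

Because the weights are continuous functions of the center and size, gradients can flow back to whatever predicts those parameters, which is the property a differentiable pooling step needs.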

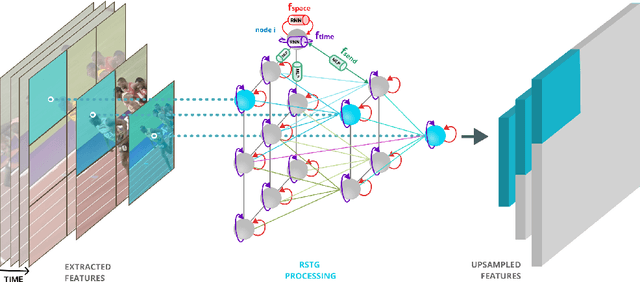

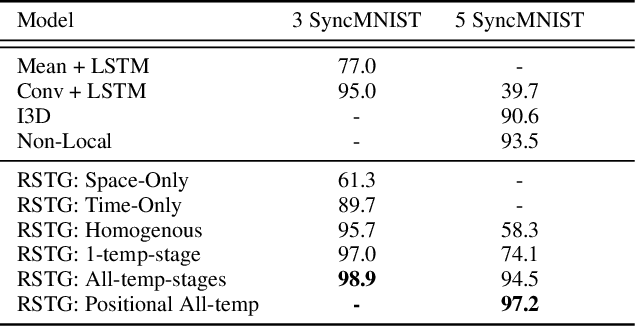

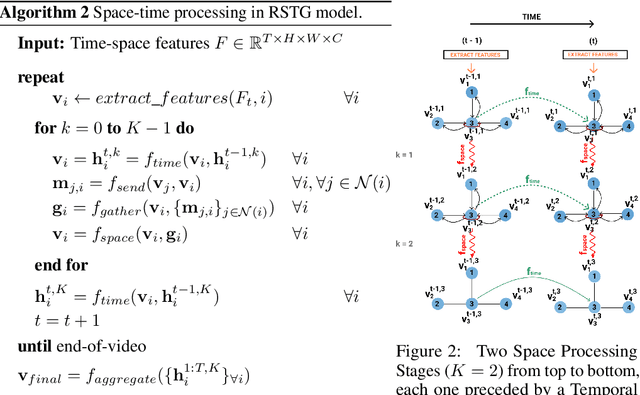

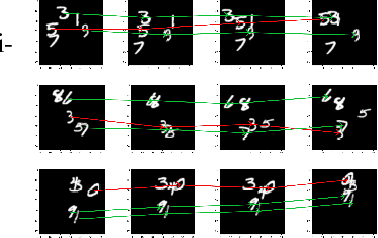

Recurrent Space-time Graphs for Video Understanding

Apr 11, 2019

Abstract: Visual learning in the space-time domain remains a very challenging problem in artificial intelligence. Current computational models for understanding video data are heavily rooted in the classical single-image based paradigm. It is not yet well understood how to integrate visual information from space and time into a single, general model. We propose a neural graph model, recurrent in space and time, suitable for capturing both the appearance and the complex interactions of different entities and objects within the changing world scene. Nodes and links in our graph have dedicated neural networks for processing information. Edges process messages between connected nodes at different locations and scales or between past and present time. Nodes compute over features extracted from local parts in space and time and over messages received from their neighbours and previous memory states. Messages are passed iteratively in order to transmit information globally and establish long-range interactions. Our model is general and could learn to recognize a variety of high-level spatio-temporal concepts and be applied to different learning tasks. We demonstrate, through extensive experiments, competitive performance over strong baselines on the tasks of recognizing complex patterns of movement in video.
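The iterative message passing described in the abstract can be sketched in a heavily simplified form. In the example below, single linear maps stand in for the dedicated edge and node networks, neighbour messages are averaged, and the temporal memory is omitted; the names `message_passing`, `w_msg` and `w_self` are hypothetical and not taken from the paper.

```python
import numpy as np

def message_passing(states, adjacency, w_msg, w_self, num_steps):
    """Run `num_steps` rounds of neighbour averaging and node updates.

    states:    (N, D) node features
    adjacency: (N, N) matrix with 1 where two nodes are connected
    w_msg:     (D, D) linear map applied to messages (stand-in for an edge network)
    w_self:    (D, D) linear map applied to a node's own state (stand-in for a node network)
    """
    degree = adjacency.sum(axis=1, keepdims=True)
    # Average incoming messages; isolated nodes simply receive zeros.
    norm_adj = adjacency / np.maximum(degree, 1.0)
    for _ in range(num_steps):
        messages = norm_adj @ (states @ w_msg)
        states = np.tanh(states @ w_self + messages)
    return states

if __name__ == "__main__":
    # Chain graph 0 - 1 - 2 with information initially only at node 0.
    chain = np.array([[0, 1, 0], [1, 0, 1], [0, 1, 0]], dtype=np.float64)
    start = np.array([[1.0], [0.0], [0.0]])
    identity = np.eye(1)
    print(message_passing(start, chain, identity, identity, num_steps=1).ravel())
    print(message_passing(start, chain, identity, identity, num_steps=2).ravel())
```

On the chain graph, node 2 is still zero after one step and becomes non-zero only after two, which shows why several iterations are needed before distant nodes can influence each other.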
