Andrei Apostol

Arrhythmia Classification from 12-Lead ECG Signals Using Convolutional and Transformer-Based Deep Learning Models

Feb 25, 2025

Abstract: In Romania, cardiovascular problems are the leading cause of death, accounting for nearly one-third of annual fatalities. The severity of this situation calls for innovative diagnostic methods for cardiovascular diseases. This article explores efficient, lightweight, and rapid methods for arrhythmia diagnosis in resource-constrained healthcare settings. Due to the lack of public Romanian medical data, we trained our systems on international public datasets, on the premise that ECG signals are the same regardless of the patient's nationality. To this end, we combined multiple datasets commonly used in arrhythmia classification: the PTB-XL electrocardiography dataset, the PTB Diagnostic ECG Database, the China 12-Lead ECG Challenge Database, the Georgia 12-Lead ECG Challenge Database, and the St. Petersburg INCART 12-lead Arrhythmia Database. For the input data, we employed ECG signal processing methods, specifically a variant of the Pan-Tompkins algorithm, which is useful in arrhythmia classification because it provides a robust and efficient method for detecting QRS complexes in ECG signals. Additionally, we used machine learning techniques widely applied to classification, including convolutional neural networks (1D CNNs, 2D CNNs, ResNet) and Vision Transformers (ViTs). The systems were evaluated in terms of accuracy and F1 score. We analysed our dataset from two perspectives. First, we fed the systems the ECG signals, and the GRU-based 1D CNN model achieved the highest accuracy among all tested architectures, 93.4%. Second, we transformed the ECG signals into images, and the 2D CNN model achieved an accuracy of 92.16%.
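The abstract mentions a variant of the Pan-Tompkins algorithm for QRS detection but does not say which variant. As a rough illustration only, the sketch below follows the classic derivative, squaring, and moving-window integration stages in pure Python. It is not the authors' pipeline: the band-pass filter stage is omitted, a single fixed threshold stands in for the original adaptive thresholds, and the function name `detect_qrs` and all parameter defaults are assumptions made for this example.

```python
def detect_qrs(signal, fs, window_s=0.15, refractory_s=0.2, threshold_ratio=0.5):
    """Simplified Pan-Tompkins-style QRS detector (illustrative sketch).

    signal: list of ECG samples from one lead; fs: sampling rate in Hz.
    Returns sample indices of detected peaks in the integrated signal.
    Assumptions: no band-pass stage, fixed threshold instead of adaptive ones.
    """
    # 1. Derivative: emphasises the steep slopes of the QRS complex.
    diff = [signal[i + 1] - signal[i] for i in range(len(signal) - 1)]
    # 2. Squaring: makes every value non-negative and amplifies large slopes.
    squared = [d * d for d in diff]
    # 3. Moving-window integration over roughly one QRS width.
    w = max(1, int(window_s * fs))
    integrated = []
    running = 0.0
    for i, v in enumerate(squared):
        running += v
        if i >= w:
            running -= squared[i - w]
        integrated.append(running / w)
    if not integrated or max(integrated) == 0:
        return []
    # 4. Thresholding with a refractory period between detections.
    threshold = threshold_ratio * max(integrated)
    refractory = int(refractory_s * fs)
    peaks = []
    i = 0
    while i < len(integrated):
        if integrated[i] > threshold:
            j = i
            while j < len(integrated) and integrated[j] > threshold:
                j += 1
            # Take the largest sample of the above-threshold run as the peak.
            peak = max(range(i, j), key=lambda k: integrated[k])
            if not peaks or peak - peaks[-1] > refractory:
                peaks.append(peak)
            i = j
        else:
            i += 1
    return peaks


# Synthetic test signal: three unit spikes one second apart at 100 Hz.
fs = 100
ecg = [0.0] * 300
for t in (50, 150, 250):
    ecg[t] = 1.0
qrs_peaks = detect_qrs(ecg, fs)
```

The detected indices refer to the integrated signal, so they can lag the true R peak by a few samples. A real pipeline would typically refine each detection against the filtered ECG.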

FlipOut: Uncovering Redundant Weights via Sign Flipping

Sep 05, 2020

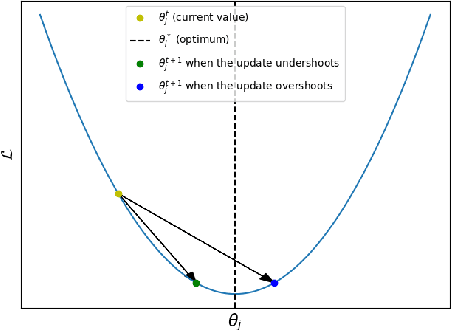

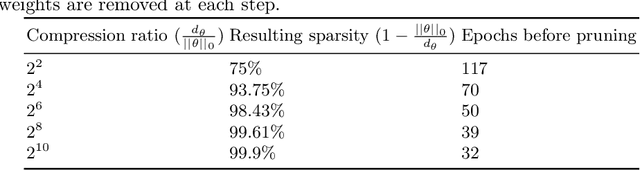

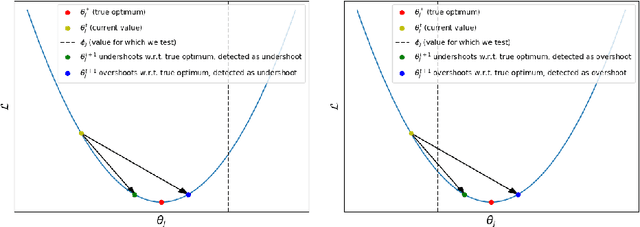

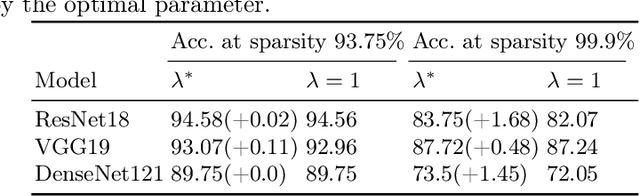

Abstract: Modern neural networks, although achieving state-of-the-art results on many tasks, tend to have a large number of parameters, which increases training time and resource usage. This problem can be alleviated by pruning. Existing methods, however, often require extensive parameter tuning or multiple cycles of pruning and retraining to convergence in order to obtain a favorable accuracy-sparsity trade-off. To address these issues, we propose a novel pruning method which uses the oscillations around $0$ (i.e., sign flips) that a weight has undergone during training in order to determine its saliency. Our method can perform pruning before the network has converged, requires little tuning effort due to having good default values for its hyperparameters, and can directly target the level of sparsity desired by the user. Our experiments, performed on a variety of object classification architectures, show that it is competitive with existing methods and achieves state-of-the-art performance for levels of sparsity of $99.6\%$ and above for most of the architectures tested. For reproducibility, we release our code publicly at https://github.com/AndreiXYZ/flipout.
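To make the idea of sign flips as a saliency signal concrete, here is a minimal pure-Python sketch. It is not the paper's exact criterion or the released implementation: it simply assumes that weights whose sign flips more often are less salient, breaks ties by magnitude, and prunes to a user-chosen sparsity. The helper names `count_sign_flips` and `prune_mask` and the toy weight history are hypothetical.

```python
def count_sign_flips(history):
    """Count, per weight, how many times its sign changed between snapshots.

    history: list of weight vectors (lists of floats), one per training step.
    """
    flips = [0] * len(history[0])
    for prev, curr in zip(history, history[1:]):
        for i, (p, c) in enumerate(zip(prev, curr)):
            if p * c < 0:  # strictly opposite signs means one crossing of 0
                flips[i] += 1
    return flips


def prune_mask(weights, flips, sparsity):
    """Return a 0/1 mask that prunes the requested fraction of weights.

    Assumption for this sketch: more flips means lower saliency;
    ties are broken by pruning the smaller-magnitude weight first.
    """
    n_prune = int(round(sparsity * len(weights)))
    order = sorted(range(len(weights)),
                   key=lambda i: (-flips[i], abs(weights[i])))
    pruned = set(order[:n_prune])
    return [0 if i in pruned else 1 for i in range(len(weights))]


# Toy history of four weights over four training steps.
history = [
    [0.50, -0.10, 0.30, 0.20],
    [0.40, 0.10, 0.30, -0.10],
    [0.60, -0.20, 0.20, 0.10],
    [0.70, 0.10, 0.25, -0.05],
]
flips = count_sign_flips(history)
mask = prune_mask(history[-1], flips, sparsity=0.5)
```

In this toy run the second and fourth weights oscillate around zero at every step, so they are the ones masked out at 50% sparsity, which mirrors the abstract's point that the target sparsity can be set directly.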
