Andrea Ferigo

Self-building Neural Networks

Apr 03, 2023

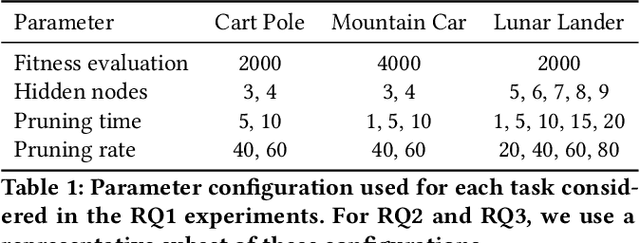

Abstract: During the first part of life, the brain develops while it learns through a process called synaptogenesis. The neurons, growing and interacting with each other, create synapses. Eventually, however, the brain prunes those synapses. While previous work focused on learning and pruning independently, in this work we propose a biologically plausible model that combines Hebbian learning and pruning to simulate the synaptogenesis process. In this way, while learning how to solve the task, the agent translates its experience into a particular network structure. In other words, the network structure builds itself during the execution of the task. We call this approach Self-building Neural Network (SBNN). We compare our proposed SBNN with traditional neural networks (NNs) over three classical control tasks from OpenAI. The results show that our model generally performs better than traditional NNs. Moreover, we observe that the performance decay as the pruning rate increases is smaller in our model than in NNs. Finally, we perform a validation test, evaluating the models on tasks unseen during the learning phase. In this case, the results show that SBNNs can adapt to new tasks better than traditional NNs, especially when over $80\%$ of the weights are pruned.
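To make the combination of Hebbian learning and pruning concrete, here is a minimal sketch in plain Python. It is not the SBNN algorithm from the paper: the plain Hebbian rule (weight change proportional to the product of pre- and post-synaptic activity), the magnitude-based pruning criterion, and the names `hebbian_step` and `prune_by_magnitude` are all assumptions chosen for illustration.

```python
def hebbian_step(weights, pre, post, lr=0.01):
    """Return new weights after one plain Hebbian update.

    Assumed rule (illustrative only): dw[i][j] = lr * pre[i] * post[j],
    where weights[i][j] connects pre-synaptic unit i to post-synaptic unit j.
    """
    return [
        [w + lr * pre[i] * post[j] for j, w in enumerate(row)]
        for i, row in enumerate(weights)
    ]


def prune_by_magnitude(weights, prune_rate):
    """Return a copy of `weights` with the smallest-magnitude entries set to 0.

    `prune_rate` is the fraction of connections to remove (0.0 to 1.0).
    Magnitude pruning is an assumption here, not the paper's criterion.
    """
    cells = [
        (abs(w), i, j)
        for i, row in enumerate(weights)
        for j, w in enumerate(row)
    ]
    cells.sort()  # smallest magnitudes first
    n_pruned = int(prune_rate * len(cells))
    pruned = [row[:] for row in weights]
    for _, i, j in cells[:n_pruned]:
        pruned[i][j] = 0.0
    return pruned


# Usage: start from an empty 2x2 layer, let activity shape the weights,
# then remove the weakest half of the connections.
weights = [[0.0, 0.0], [0.0, 0.0]]
weights = hebbian_step(weights, pre=[1.0, 0.5], post=[2.0, -0.5], lr=0.1)
sparse_weights = prune_by_magnitude(weights, prune_rate=0.5)
```

In this toy setting the weights start at zero and are shaped only by the activity seen during execution, after which the weakest connections are removed. That loosely mirrors the idea of experience being translated into network structure.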

Quality Diversity Evolutionary Learning of Decision Trees

Aug 17, 2022

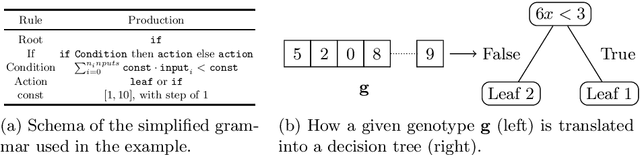

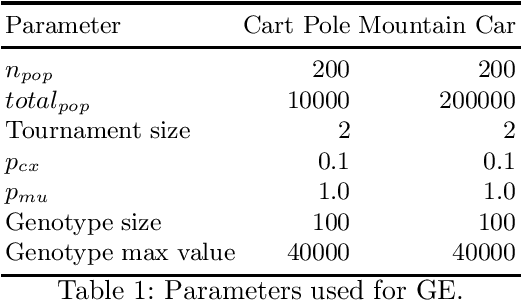

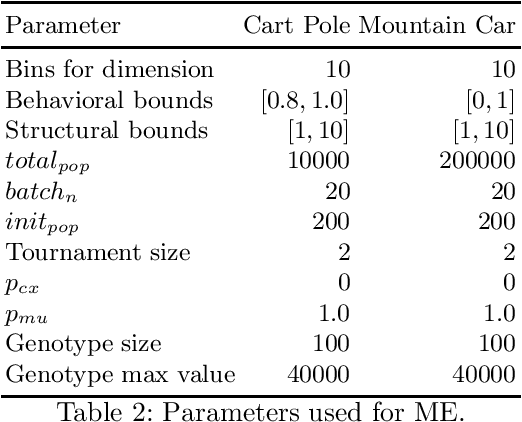

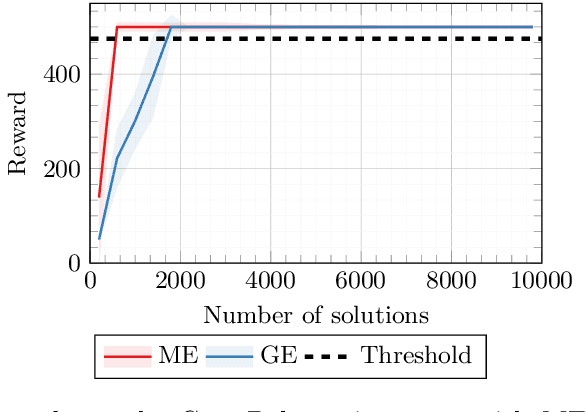

Abstract: Addressing the need for explainable Machine Learning has emerged as one of the most important research directions in modern Artificial Intelligence (AI). While the current dominant paradigm in the field is based on black-box models, typically in the form of (deep) neural networks, these models lack direct interpretability for human users, i.e., their outcomes (and, even more so, their inner workings) are opaque and hard to understand. This is hindering the adoption of AI in safety-critical applications, where the stakes are high. In these applications, explainable-by-design models, such as decision trees, may be more suitable, as they provide interpretability. Recent works have proposed the hybridization of decision trees and Reinforcement Learning, to combine the advantages of the two approaches. So far, however, these works have focused on the optimization of those hybrid models. Here, we apply MAP-Elites for diversifying hybrid models over a feature space that captures both the model complexity and its behavioral variability. We apply our method to two well-known control problems from the OpenAI Gym library, on which we discuss the "illumination" patterns projected by MAP-Elites, comparing its results against existing similar approaches.
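For readers unfamiliar with MAP-Elites, the following is a minimal generic sketch of the algorithm on a toy problem. It is not the paper's setup: the toy fitness function, the two-dimensional descriptor in [0, 1], the grid resolution, and every name here (`map_elites`, `evaluate`, `random_solution`, `mutate`, `n_init`) are assumptions for illustration. In the paper the descriptors capture model complexity and behavioral variability of hybrid decision trees instead.

```python
import random


def map_elites(evaluate, random_solution, mutate, n_bins, iterations,
               n_init=20, seed=0):
    """Generic MAP-Elites loop over a grid of descriptor cells.

    `evaluate(solution)` must return (fitness, descriptor), where the
    descriptor is a tuple of floats assumed to lie in [0, 1].
    Returns the archive: {cell index tuple: (fitness, solution)}.
    """
    rng = random.Random(seed)
    archive = {}
    for it in range(iterations):
        if it < n_init or not archive:
            # Bootstrap the archive with random solutions.
            candidate = random_solution(rng)
        else:
            # Pick a random elite and perturb it.
            _, parent = rng.choice(list(archive.values()))
            candidate = mutate(parent, rng)
        fitness, descriptor = evaluate(candidate)
        # Discretize the descriptor into a grid cell, clamping to the grid.
        cell = tuple(
            max(0, min(int(d * n_bins), n_bins - 1)) for d in descriptor
        )
        # Keep the candidate only if its cell is empty or it beats the elite.
        if cell not in archive or fitness > archive[cell][0]:
            archive[cell] = (fitness, candidate)
    return archive


# Toy problem (hypothetical): solutions are points in the unit square,
# the descriptor is the point itself, fitness peaks at the center.
def evaluate(solution):
    fitness = -sum((x - 0.5) ** 2 for x in solution)
    return fitness, tuple(solution)


def random_solution(rng):
    return [rng.random(), rng.random()]


def mutate(solution, rng):
    return [min(1.0, max(0.0, x + rng.gauss(0.0, 0.1))) for x in solution]


archive = map_elites(evaluate, random_solution, mutate,
                     n_bins=5, iterations=500)
```

The resulting `archive` holds at most one elite per cell, so inspecting which cells are filled and with what fitness gives the kind of "illumination" map the abstract refers to.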
