Ana Perez Grassi

Unsupervised Domain Adaptation from Synthetic to Real Images for Anchorless Object Detection

Dec 15, 2020

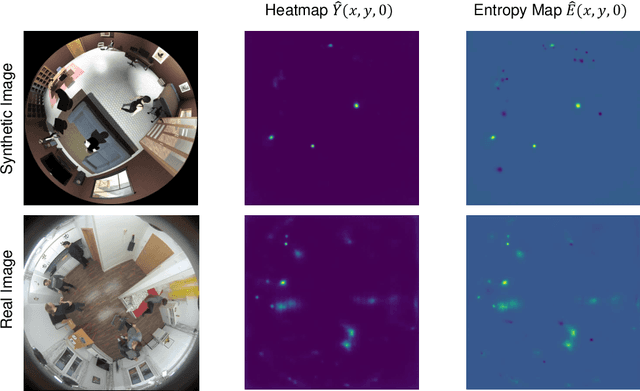

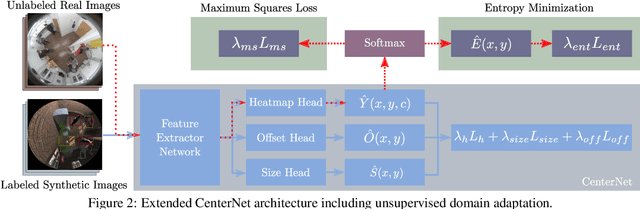

Abstract: Synthetic images are one of the most promising ways to avoid the high cost of generating annotated datasets for training supervised convolutional neural networks (CNNs). However, for networks to generalize knowledge from synthetic to real images, domain adaptation methods are necessary. This paper implements unsupervised domain adaptation (UDA) methods on an anchorless object detector. Given their good performance, anchorless detectors are attracting increasing attention in the field of object detection. While their results are comparable to those of the well-established anchor-based methods, anchorless detectors are considerably faster. In our work, we use CenterNet, one of the most recent anchorless architectures, for a domain adaptation problem involving synthetic images. Taking advantage of the architecture of anchorless detectors, we propose to adapt two UDA methods originally developed for segmentation, namely entropy minimization and maximum squares loss, to object detection. Our results show that the proposed UDA methods can increase the mAP from 61% to 69% with respect to direct transfer on the considered anchorless detector. The code is available at https://github.com/scheckmedia/centernet-uda.
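The two UDA objectives named in the abstract can be illustrated with a minimal sketch. It uses the general form of each loss as known from the segmentation literature (mean Shannon entropy, and the negative half of the mean squared probability), applied to a list of per-location class distributions. How the paper applies them to CenterNet's outputs is not stated in the abstract, so the input layout and the function names `entropy_loss` and `max_squares_loss` are assumptions made for illustration, not the authors' implementation.

```python
import math


def entropy_loss(probs, eps=1e-12):
    """Mean Shannon entropy over per-location class distributions.

    probs: list of lists; each inner list is assumed to be a probability
    distribution over classes at one spatial location (hypothetical layout).
    """
    total = 0.0
    for p in probs:
        # eps keeps log() finite when a probability is exactly zero
        total += -sum(q * math.log(q + eps) for q in p)
    return total / len(probs)


def max_squares_loss(probs):
    """Maximum squares loss: -1/(2N) * sum of squared probabilities."""
    total = 0.0
    for p in probs:
        total += sum(q * q for q in p)
    return -total / (2 * len(probs))


uncertain = [[0.5, 0.5]]   # maximally uncertain prediction
confident = [[1.0, 0.0]]   # fully confident prediction

# Both losses are lower for the confident prediction, so minimizing either
# one on unlabeled target-domain images pushes predictions toward confidence.
print(entropy_loss(uncertain), entropy_loss(confident))
print(max_squares_loss(uncertain), max_squares_loss(confident))
```

Both functions need only predictions, not labels, which is what makes them usable on unlabeled real images. The squared form has a gradient that grows linearly with the probability, which is the usual argument for it being less dominated by easy, already confident locations than the entropy.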

A CNN-based Feature Space for Semi-supervised Incremental Learning in Assisted Living Applications

Nov 11, 2020

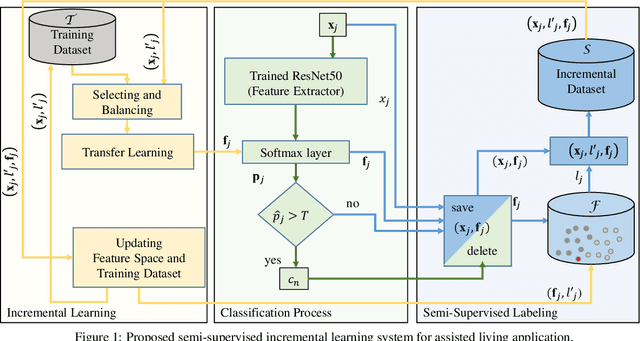

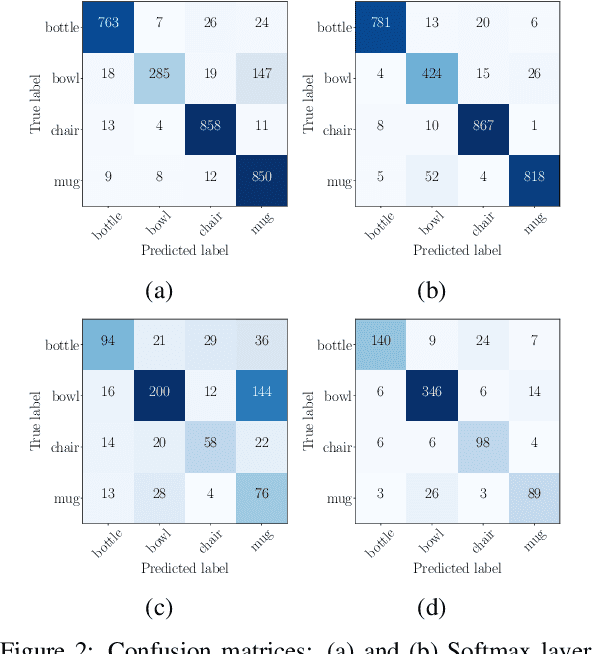

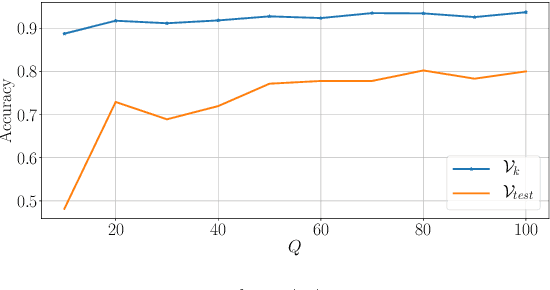

Abstract: A Convolutional Neural Network (CNN) is sometimes confronted with objects of changing appearance (new instances) that exceed its generalization capability. This requires the CNN to incorporate new knowledge, i.e., to learn incrementally. In this paper, we address this problem in the context of assisted living. We propose using the feature space that results from the training dataset to automatically label problematic images that the CNN could not properly recognize. The idea is to exploit the extra information in the feature space for semi-supervised labeling and to use the problematic images to improve the CNN's classification model. Among other benefits, the resulting semi-supervised incremental learning process improves the classification accuracy on new instances by 40%, as illustrated by extensive experiments.
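One simple way to picture labeling in a feature space is sketched below. The abstract does not describe the actual labeling rule, so this example substitutes a plain nearest-centroid rule with a distance threshold: class centroids are computed from training features, and a problematic image receives the label of the closest centroid only if it lies close enough. The functions `centroids` and `pseudo_label`, the threshold `max_dist`, and the toy feature vectors are all hypothetical.

```python
import math


def centroids(features, labels):
    """Mean feature vector per class, computed from labeled training features."""
    sums, counts = {}, {}
    for f, y in zip(features, labels):
        if y not in sums:
            sums[y] = [0.0] * len(f)
            counts[y] = 0
        sums[y] = [s + v for s, v in zip(sums[y], f)]
        counts[y] += 1
    return {y: [s / counts[y] for s in sums[y]] for y in sums}


def pseudo_label(feature, cents, max_dist):
    """Label of the nearest centroid, or None if it is farther than max_dist."""
    best_label, best_dist = None, math.inf
    for y, c in cents.items():
        d = math.dist(feature, c)  # Euclidean distance in feature space
        if d < best_dist:
            best_label, best_dist = y, d
    return best_label if best_dist <= max_dist else None


# Toy 2-D features standing in for CNN embeddings (illustrative values only)
train_features = [[0.0, 0.0], [0.0, 2.0], [10.0, 10.0]]
train_labels = ["cup", "cup", "chair"]
cents = centroids(train_features, train_labels)

print(pseudo_label([1.0, 1.0], cents, max_dist=3.0))  # close to the "cup" centroid
print(pseudo_label([5.0, 5.0], cents, max_dist=1.0))  # too far from every centroid
```

Images that receive a label this way could then be added to the training set for a further training round, while images that return `None` would be left for manual inspection.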
