Amirhossein Rajabi

How Well Does the Metropolis Algorithm Cope With Local Optima?

Apr 21, 2023

Abstract: The Metropolis algorithm (MA) is a classic stochastic local search heuristic. It avoids getting stuck in local optima by occasionally accepting inferior solutions. To understand this ability better and more rigorously, we conduct a mathematical runtime analysis of the MA on the CLIFF benchmark. Apart from one local optimum, cliff functions are monotonically increasing towards the global optimum. Consequently, to optimize a cliff function, the MA needs to accept an inferior solution only once. Although this seemingly makes CLIFF an ideal benchmark for the MA to profit from its main working principle, our mathematical runtime analysis shows that this hope does not come true. Even with the optimal temperature (the only parameter of the MA), the MA optimizes most cliff functions less efficiently than simple elitist evolutionary algorithms (EAs), which can only leave the local optimum by generating a superior solution that is possibly far away. This result suggests that our understanding of why the MA is often very successful in practice is not yet complete. Our work also suggests equipping the MA with global mutation operators, an idea supported by our preliminary experiments.
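As a minimal sketch of the acceptance rule described in this abstract, the following Python code runs a Metropolis-style search with one-bit flips on a cliff function. The abstract does not spell out either definition, so both are assumptions here: the cliff function follows a commonly used form (the number of one-bits up to `n - d` ones, and the number of one-bits minus `d` plus 1/2 beyond that), and inferior solutions are accepted with probability `exp(delta / temperature)`. All function and parameter names are hypothetical.

```python
import math
import random


def cliff(x, d):
    """Assumed cliff function: OneMax with a drop of d - 1/2 after n - d ones."""
    ones = sum(x)
    n = len(x)
    return ones if ones <= n - d else ones - d + 0.5


def metropolis_accept(delta, temperature, rng):
    """Accept improvements and ties always, worsenings with prob. exp(delta / T)."""
    if delta >= 0:
        return True
    return rng.random() < math.exp(delta / temperature)


def metropolis(f, n, temperature, max_steps, rng):
    """Maximize f over bit strings of length n using single-bit flips.

    Returns the final search point and the number of steps used.
    Assumes temperature > 0 and that the all-ones string is the optimum.
    """
    x = [rng.randint(0, 1) for _ in range(n)]
    fx = f(x)
    for step in range(max_steps):
        if sum(x) == n:
            return x, step
        i = rng.randrange(n)
        x[i] ^= 1  # tentatively flip one uniformly chosen bit
        fy = f(x)
        if metropolis_accept(fy - fx, temperature, rng):
            fx = fy  # keep the move
        else:
            x[i] ^= 1  # undo the flip
    return x, max_steps
```

A call such as `metropolis(lambda x: cliff(x, 3), 10, 1.0, 20000, random.Random(1))` runs the sketch on a small instance. It says nothing about the runtime bounds proven in the paper.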

Simulated Annealing is a Polynomial-Time Approximation Scheme for the Minimum Spanning Tree Problem

Apr 05, 2022

Abstract: We prove that Simulated Annealing with an appropriate cooling schedule computes arbitrarily tight constant-factor approximations to the minimum spanning tree problem in polynomial time. This result was conjectured by Wegener (2005). More precisely, denoting by $n, m, w_{\max}$, and $w_{\min}$ the number of vertices and edges as well as the maximum and minimum edge weight of the MST instance, we prove that simulated annealing with initial temperature $T_0 \ge w_{\max}$ and multiplicative cooling schedule with factor $1-1/\ell$, where $\ell = \omega (mn\ln(m))$, with probability at least $1-1/m$ computes in time $O(\ell (\ln\ln (\ell) + \ln(T_0/w_{\min}) ))$ a spanning tree with weight at most $1+\kappa$ times the optimum weight, where $1+\kappa = \frac{(1+o(1))\ln(\ell m)}{\ln(\ell) -\ln (mn\ln (m))}$. Consequently, for any $\epsilon>0$, we can choose $\ell$ in such a way that a $(1+\epsilon)$-approximation is found in time $O((mn\ln(n))^{1+1/\epsilon+o(1)}(\ln\ln n + \ln(T_0/w_{\min})))$ with probability at least $1-1/m$. In the special case of so-called $(1+\epsilon)$-separated weights, this algorithm computes an optimal solution (again in time $O( (mn\ln(n))^{1+1/\epsilon+o(1)}(\ln\ln n + \ln(T_0/w_{\min})))$), which is a significant speed-up over Wegener's runtime guarantee of $O(m^{8 + 8/\epsilon})$.
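To illustrate the multiplicative cooling schedule with factor $1-1/\ell$ mentioned in this abstract, here is a small Python sketch. It computes only the temperature after a given number of steps and the number of steps until the temperature falls to a threshold such as $w_{\min}$. It is not an implementation of the annealing algorithm on spanning trees, and the function names are hypothetical.

```python
import math


def temperature(t, t0, ell):
    """Temperature after t steps of multiplicative cooling by 1 - 1/ell."""
    return t0 * (1.0 - 1.0 / ell) ** t


def steps_until_below(t0, threshold, ell):
    """Smallest t with t0 * (1 - 1/ell)**t <= threshold (assumes ell > 1)."""
    if t0 <= threshold:
        return 0
    return math.ceil(math.log(threshold / t0) / math.log(1.0 - 1.0 / ell))
```

Since $\ln(1-1/\ell) \approx -1/\ell$ for large $\ell$, the step count is roughly $\ell \ln(T_0/\text{threshold})$, which gives some intuition for the $\ell \ln(T_0/w_{\min})$ term in the stated time bound.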

Stagnation Detection meets Fast Mutation

Jan 28, 2022

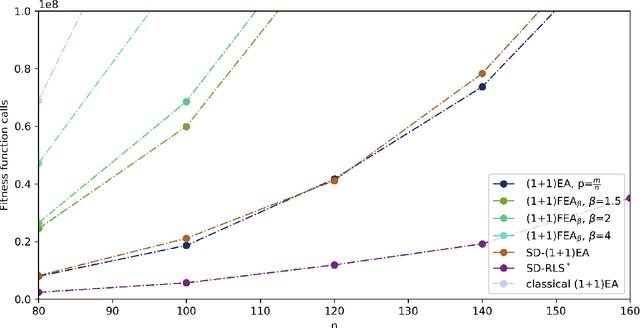

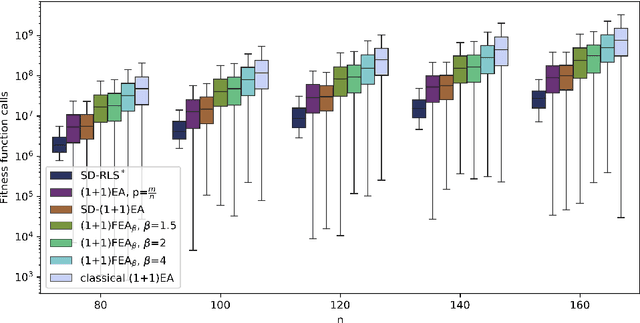

Abstract: Two mechanisms have recently been proposed that can significantly speed up finding distant improving solutions via mutation, namely using a random mutation rate drawn from a heavy-tailed distribution ("fast mutation", Doerr et al. (2017)) and increasing the mutation strength based on stagnation detection (Rajabi and Witt (2020)). Whereas the latter can obtain the asymptotically best probability of finding a single desired solution at a given distance, the former is more robust and performs much better when many improving solutions exist at some distance. In this work, we propose a mutation strategy that combines ideas of both mechanisms. We show that it can also obtain the best possible probability of finding a single distant solution. Moreover, when several improving solutions exist, it can outperform both the stagnation-detection approach and fast mutation. The new operator is more than an interleaving of the two previous mechanisms, and it also outperforms any such interleaving.
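The "fast mutation" idea of drawing the mutation rate from a heavy-tailed distribution can be sketched as follows. The abstract does not specify the distribution, so this sketch assumes a power law on the integers $1, \dots, \lfloor n/2 \rfloor$ with exponent `beta`, and uses the drawn integer divided by $n$ as the mutation rate. The function names and the choice of `beta` are hypothetical.

```python
import random


def power_law_weights(upper, beta):
    """Unnormalized power-law weights k**(-beta) for k = 1, ..., upper."""
    return [k ** (-beta) for k in range(1, upper + 1)]


def sample_heavy_tailed_rate(n, beta, rng):
    """Draw k from the assumed power law on 1..n//2 and return the rate k/n."""
    upper = max(1, n // 2)
    candidates = list(range(1, upper + 1))
    k = rng.choices(candidates, weights=power_law_weights(upper, beta), k=1)[0]
    return k / n
```

Most draws give a small rate close to $1/n$, while large rates still occur with polynomially small rather than exponentially small probability, which is what allows occasional long jumps.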

Stagnation Detection in Highly Multimodal Fitness Landscapes

Apr 22, 2021

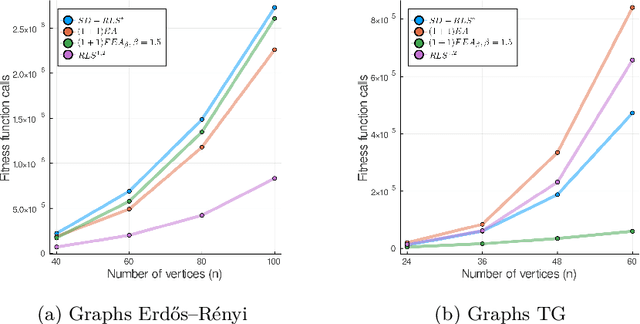

Abstract: Stagnation detection has been proposed as a mechanism for randomized search heuristics to escape from local optima by automatically increasing the size of the neighborhood to find the so-called gap size, i.e., the distance to the next improvement. Its usefulness has mostly been considered in simple multimodal landscapes with few local optima that could be crossed one after another. In multimodal landscapes with a more complex location of optima of similar gap size, stagnation detection suffers from the fact that the neighborhood size is frequently reset to $1$ without using gap sizes that were promising in the past. In this paper, we investigate a new mechanism called radius memory, which can be added to stagnation detection to control the search radius more carefully by giving preference to values that were successful in the past. We implement this idea in an algorithm called SD-RLS$^{\text{m}}$ and show that, compared to previous variants of stagnation detection, it yields speed-ups for linear functions under uniform constraints and for the minimum spanning tree problem. Moreover, its running time does not significantly deteriorate on unimodal functions and a generalization of the Jump benchmark. Finally, we present experimental results carried out to study SD-RLS$^{\text{m}}$ and compare it with other algorithms.

Stagnation Detection with Randomized Local Search

Feb 08, 2021

Abstract: Recently, a mechanism called stagnation detection was proposed that automatically adjusts the mutation rate of evolutionary algorithms when they encounter local optima. The so-called SD-(1+1) EA introduced by Rajabi and Witt (GECCO 2020) adds stagnation detection to the classical (1+1) EA with standard bit mutation, which flips each bit independently with some mutation rate, and raises the mutation rate when the algorithm is likely to have encountered local optima. In this paper, we investigate stagnation detection in the context of the $k$-bit flip operator of randomized local search, which flips $k$ bits chosen uniformly at random, and let stagnation detection adjust the parameter $k$. We obtain improved runtime results compared to the SD-(1+1) EA, amounting to a speed-up of up to $e=2.71\dots$. Moreover, we propose additional schemes that prevent infinite optimization times even if the algorithm misses a working choice of $k$ due to unlucky events. Finally, we present an example where standard bit mutation still outperforms the local $k$-bit flip with stagnation detection.
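The two mutation operators contrasted in this abstract can be sketched in a few lines of Python. Standard bit mutation flips each bit independently with a given rate, while the $k$-bit flip operator flips exactly $k$ distinct positions chosen uniformly at random. The sketch covers only the operators, not the stagnation-detection logic that adjusts the rate or $k$, and the function names are hypothetical.

```python
import random


def standard_bit_mutation(x, rate, rng):
    """Flip each bit of x independently with probability rate."""
    return [b ^ 1 if rng.random() < rate else b for b in x]


def k_bit_flip(x, k, rng):
    """Flip exactly k distinct, uniformly chosen positions of x."""
    y = list(x)
    for i in rng.sample(range(len(x)), k):
        y[i] ^= 1
    return y
```

With the $k$-bit flip, the offspring always has Hamming distance exactly $k$ from its parent, whereas under standard bit mutation the number of flipped bits is random.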

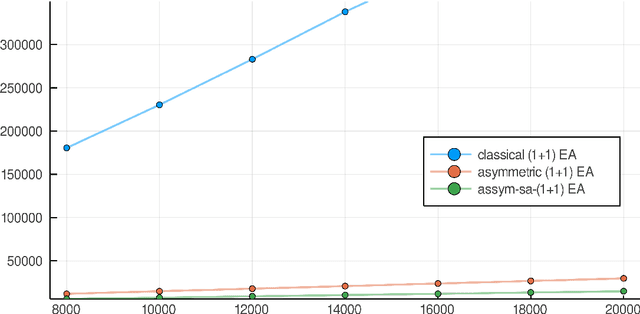

Evolutionary Algorithms with Self-adjusting Asymmetric Mutation

Jun 16, 2020

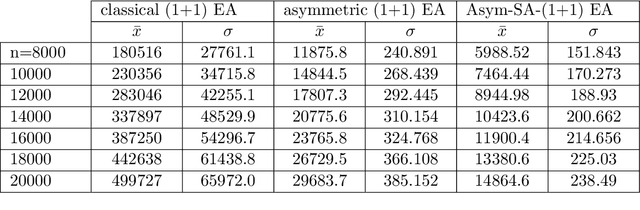

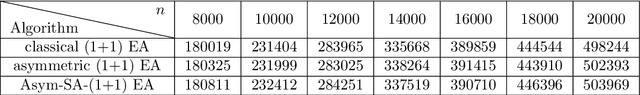

Abstract: Evolutionary Algorithms (EAs) and other randomized search heuristics are often considered unbiased algorithms that are invariant with respect to different transformations of the underlying search space. However, if a certain amount of domain knowledge is available, the use of biased search operators in EAs becomes viable. We consider a simple (1+1) EA for binary search spaces and analyze an asymmetric mutation operator that can treat zero- and one-bits differently. This operator extends previous work by Jansen and Sudholt (ECJ 18(1), 2010) by allowing the operator asymmetry to vary according to the success rate of the algorithm. Using a self-adjusting scheme that learns an appropriate degree of asymmetry, we show improved runtime results on the class of functions OneMax$_a$ describing the number of matching bits with a fixed target $a\in\{0,1\}^n$.

Self-Adjusting Evolutionary Algorithms for Multimodal Optimization

Apr 07, 2020

Abstract: Recent theoretical research has shown that self-adjusting and self-adaptive mechanisms can provably outperform static settings in evolutionary algorithms for binary search spaces. However, the vast majority of these studies focus on unimodal functions, which do not require the algorithm to flip several bits simultaneously to make progress. In fact, existing self-adjusting algorithms are not designed to detect local optima and offer no obvious benefit for crossing large Hamming gaps. We suggest a mechanism called stagnation detection that can be added as a module to existing evolutionary algorithms (both with and without prior self-adjusting algorithms). Added to a simple (1+1) EA, we prove an expected runtime on the well-known Jump benchmark that corresponds to an asymptotically optimal parameter setting and outperforms other mechanisms for multimodal optimization like heavy-tailed mutation. We also investigate the module in the context of a self-adjusting (1+$\lambda$) EA and show that it combines the previous benefits of this algorithm on unimodal problems with more efficient multimodal optimization. To explore the limitations of the approach, we additionally present an example where both self-adjusting mechanisms, including stagnation detection, do not help to find a beneficial setting of the mutation rate. Finally, we investigate our module for stagnation detection experimentally.
