Amin Abken

Context Query Simulation for Smart Carparking Scenarios in the Melbourne CDB

Feb 13, 2023

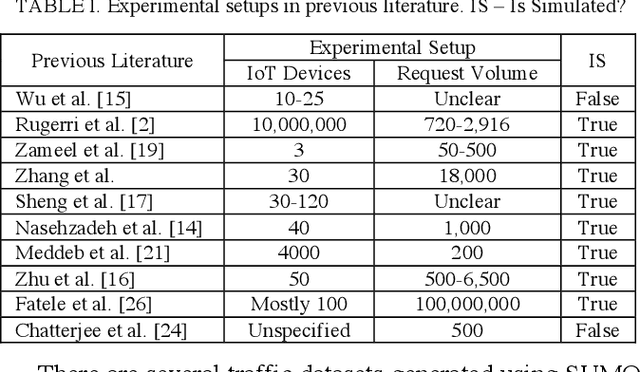

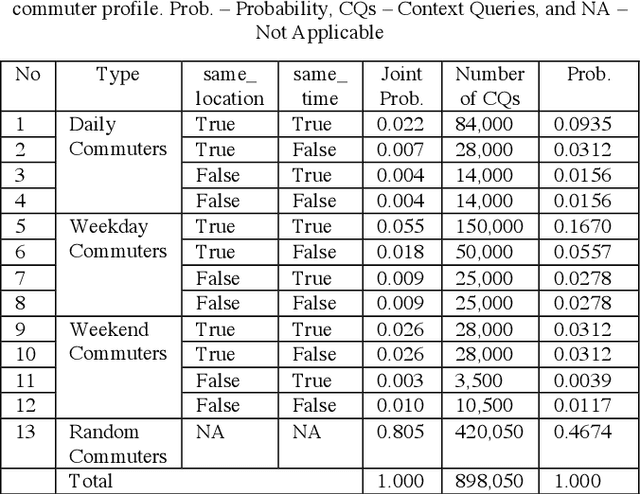

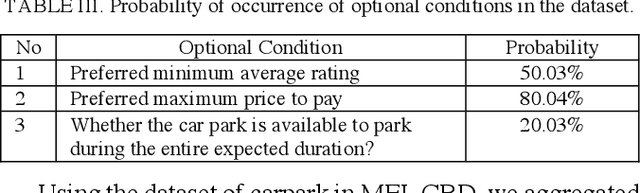

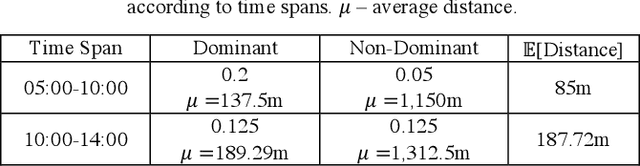

Abstract:The rapid growth in Internet of Things (IoT) has ushered in the way for better context-awareness enabling more smarter applications. Although for the growth in the number of IoT devices, Context Management Platforms (CMPs) that integrate different domains of IoT to produce context information lacks scalability to cater to a high volume of context queries. Research in scalability and adaptation in CMPs are of significant importance due to this reason. However, there is limited methods to benchmarks and validate research in this area due to the lack of sizable sets of context queries that could simulate real-world situations, scenarios, and scenes. Commercially collected context query logs are not publicly accessible and deploying IoT devices, and context consumers in the real-world at scale is expensive and consumes a significant effort and time. Therefore, there is a need to develop a method to reliably generate and simulate context query loads that resembles real-world scenarios to test CMPs for scale. In this paper, we propose a context query simulator for the context-aware smart car parking scenario in Melbourne Central Business District in Australia. We present the process of generating context queries using multiple real-world datasets and publicly accessible reports, followed by the context query execution process. The context query generator matches the popularity of places with the different profiles of commuters, preferences, and traffic variations to produce a dataset of context query templates containing 898,050 records. The simulator is executable over a seven-day profile which far exceeds the simulation time of any IoT system simulator. The context query generation process is also generic and context query language independent.

Reinforcement Learning Based Approaches to Adaptive Context Caching in Distributed Context Management Systems

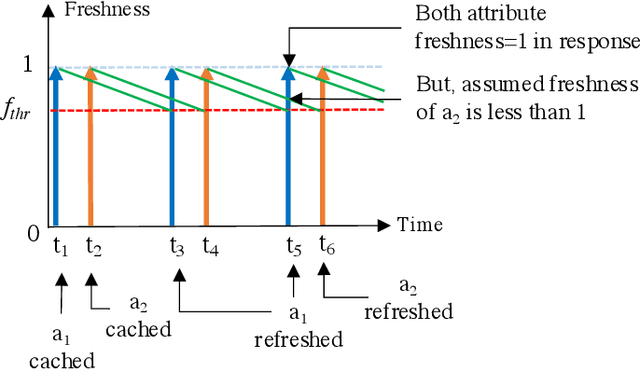

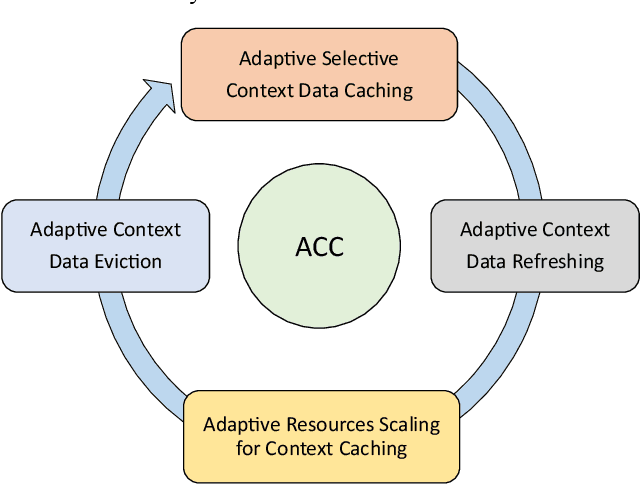

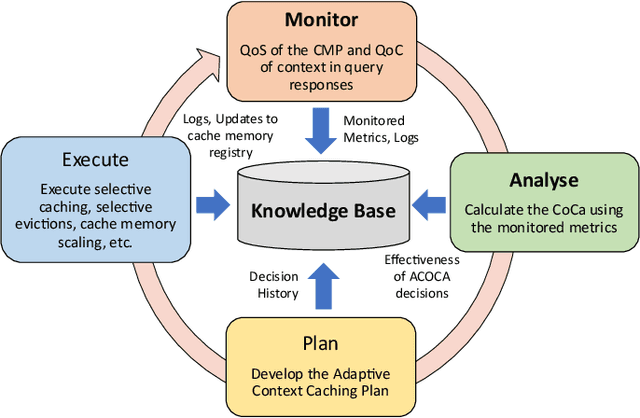

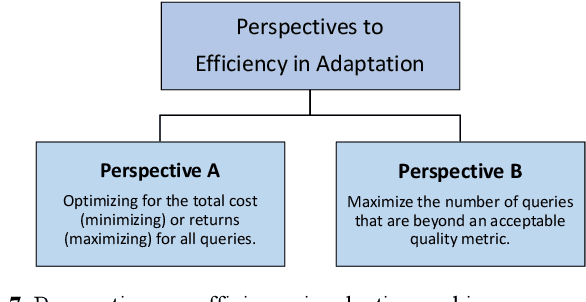

Dec 22, 2022Abstract:Performance metrics-driven context caching has a profound impact on throughput and response time in distributed context management systems for real-time context queries. This paper proposes a reinforcement learning based approach to adaptively cache context with the objective of minimizing the cost incurred by context management systems in responding to context queries. Our novel algorithms enable context queries and sub-queries to reuse and repurpose cached context in an efficient manner. This approach is distinctive to traditional data caching approaches by three main features. First, we make selective context cache admissions using no prior knowledge of the context, or the context query load. Secondly, we develop and incorporate innovative heuristic models to calculate expected performance of caching an item when making the decisions. Thirdly, our strategy defines a time-aware continuous cache action space. We present two reinforcement learning agents, a value function estimating actor-critic agent and a policy search agent using deep deterministic policy gradient method. The paper also proposes adaptive policies such as eviction and cache memory scaling to complement our objective. Our method is evaluated using a synthetically generated load of context sub-queries and a synthetic data set inspired from real world data and query samples. We further investigate optimal adaptive caching configurations under different settings. This paper presents, compares, and discusses our findings that the proposed selective caching methods reach short- and long-term cost- and performance-efficiency. The paper demonstrates that the proposed methods outperform other modes of context management such as redirector mode, and database mode, and cache all policy by up to 60% in cost efficiency.

From Traditional Adaptive Data Caching to Adaptive Context Caching: A Survey

Nov 21, 2022

Abstract:Context data is in demand more than ever with the rapid increase in the development of many context-aware Internet of Things applications. Research in context and context-awareness is being conducted to broaden its applicability in light of many practical and technical challenges. One of the challenges is improving performance when responding to large number of context queries. Context Management Platforms that infer and deliver context to applications measure this problem using Quality of Service (QoS) parameters. Although caching is a proven way to improve QoS, transiency of context and features such as variability, heterogeneity of context queries pose an additional real-time cost management problem. This paper presents a critical survey of state-of-the-art in adaptive data caching with the objective of developing a body of knowledge in cost- and performance-efficient adaptive caching strategies. We comprehensively survey a large number of research publications and evaluate, compare, and contrast different techniques, policies, approaches, and schemes in adaptive caching. Our critical analysis is motivated by the focus on adaptively caching context as a core research problem. A formal definition for adaptive context caching is then proposed, followed by identified features and requirements of a well-designed, objective optimal adaptive context caching strategy.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge