Alexander Distergoft

Exploring Adversarial Examples: Patterns of One-Pixel Attacks

Jun 25, 2018

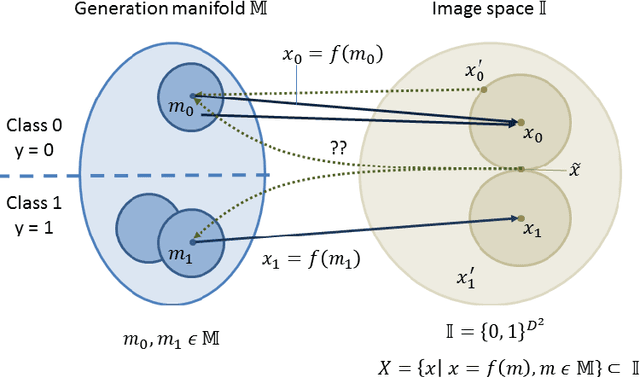

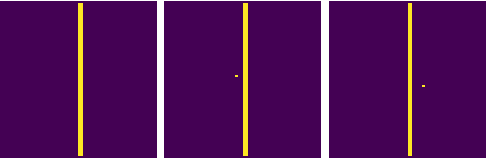

Abstract: Failure cases of black-box deep learning, such as adversarial examples, might have severe consequences in healthcare. Yet such failures are mostly studied in the context of real-world images with calibrated attacks. Demystifying adversarial examples requires rigorously designed studies. Unfortunately, the complexity of medical images hinders designing such studies directly on medical images. We hypothesize that adversarial examples might result from deep networks incorrectly mapping the image space to the low-dimensional generation manifold. To test this hypothesis, we simplify a complex medical problem, namely pose estimation of surgical tools, into its barest form. An analytical decision boundary and an exhaustive search of one-pixel attacks across multiple image dimensions let us localize the regions of frequent successful one-pixel attacks in image space.
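To make the idea of an exhaustive one-pixel search concrete, here is a minimal sketch in Python. It is not the authors' implementation: `toy_classifier`, `one_pixel_attack_map`, the 3x3 image, the weights, and the threshold are all hypothetical stand-ins chosen for illustration. The toy classifier is a linear score with a known threshold, which plays the role of an analytical decision boundary; the search overwrites each pixel in turn with every candidate value and records where the predicted label flips.

```python
def toy_classifier(img, weights, threshold):
    """Hypothetical stand-in for a trained network: a linear score
    compared against a known threshold (the decision boundary)."""
    score = sum(
        p * w
        for row_p, row_w in zip(img, weights)
        for p, w in zip(row_p, row_w)
    )
    return int(score > threshold)


def one_pixel_attack_map(img, predict, values=(0.0, 1.0)):
    """Exhaustively try every pixel and every candidate value.

    Returns a boolean grid marking the pixels where changing that
    single pixel changes the predicted label.
    """
    original = predict(img)
    success = [[False] * len(row) for row in img]
    for r, row in enumerate(img):
        for c in range(len(row)):
            for v in values:
                # Copy the image and overwrite exactly one pixel.
                perturbed = [list(x) for x in img]
                perturbed[r][c] = v
                if predict(perturbed) != original:
                    success[r][c] = True
                    break
    return success


# Assumed toy setup: only the centre pixel influences the score.
weights = [[0.0, 0.0, 0.0],
           [0.0, 1.0, 0.0],
           [0.0, 0.0, 0.0]]
img = [[0.2] * 3 for _ in range(3)]


def predict(x):
    return toy_classifier(x, weights, threshold=0.5)


attack_map = one_pixel_attack_map(img, predict)
for row in attack_map:
    print(row)
```

In this toy setup only the centre pixel carries weight, so it is the only location where a one-pixel change can cross the boundary. Aggregating such maps over many images is one way to localize regions of frequent successful attacks, as the abstract describes.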
