Alex Boulanger

Learning Multilingual Word Representations using a Bag-of-Words Autoencoder

Jan 08, 2014

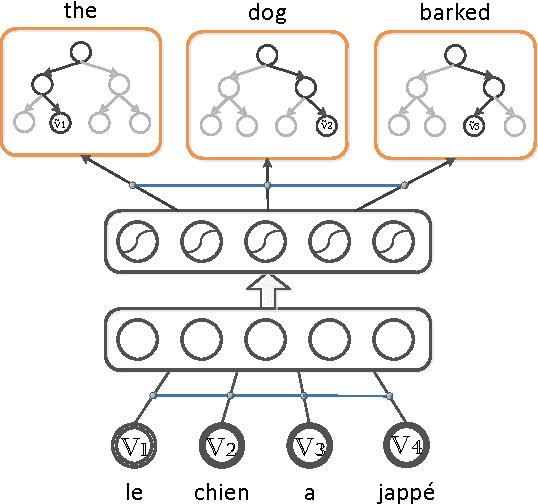

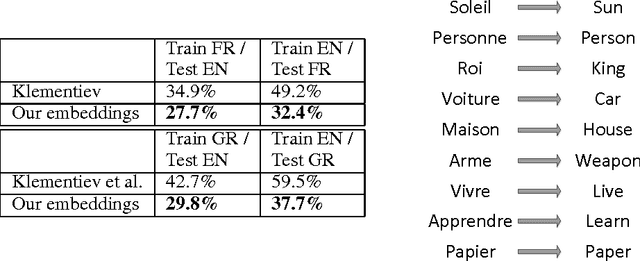

Abstract: Recent work on learning multilingual word representations usually relies on word-level alignments (e.g. inferred with the help of GIZA++) between translated sentences in order to align the word embeddings in different languages. In this workshop paper, we investigate an autoencoder model for learning multilingual word representations that does not require such word-level alignments. The autoencoder is trained to reconstruct the bag-of-words representation of a given sentence from an encoded representation extracted from its translation. We evaluate our approach on a multilingual document classification task, where labeled data is available only for one language (e.g. English) while classification must be performed in a different language (e.g. French). In our experiments, we observe that our method compares favorably with a previously proposed method that exploits word-level alignments to learn word representations.
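The abstract describes the model only at a high level. The following NumPy sketch illustrates the core idea under stated assumptions: each language has its own word-embedding matrix, the encoder sums the embedding rows of the words in a sentence, adds a hidden bias shared across languages and applies a sigmoid, and the decoder scores the *other* side of a translation pair with a single softmax over that language's vocabulary. The plain softmax decoder, the shared bias, the toy vocabularies and all names (`encode`, `loss_and_grads`, `train`, `PAIRS`) are hypothetical choices for illustration, not the paper's exact architecture.

```python
import numpy as np

# Hypothetical toy data: word ids into tiny English / French vocabularies.
EN_VOCAB = ["the", "cat", "dog", "sleeps", "runs"]
FR_VOCAB = ["le", "chat", "chien", "dort", "court"]
PAIRS = [  # (English bag, French bag) for two translated sentences
    (np.array([0, 1, 3]), np.array([0, 1, 3])),
    (np.array([0, 2, 4]), np.array([0, 2, 4])),
]


def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))


def encode(ids, W, c):
    """Assumed encoder: sum embedding rows of the bag, add bias, squash."""
    return sigmoid(c + W[ids].sum(axis=0))


def loss_and_grads(src_ids, tgt_ids, W_src, c, V_tgt, b_tgt):
    """Encode the source bag, then return the negative log-likelihood of the
    target bag under one softmax over the target vocabulary (an assumed
    decoder), plus gradients for W_src, c, V_tgt and b_tgt."""
    h = encode(src_ids, W_src, c)
    logits = V_tgt @ h + b_tgt
    logits -= logits.max()  # numerical stability; softmax is shift-invariant
    log_p = logits - np.log(np.exp(logits).sum())
    loss = -log_p[tgt_ids].sum()

    # d loss / d logits = n * softmax - (count of each word in the target bag)
    counts = np.bincount(tgt_ids, minlength=V_tgt.shape[0])
    d_logits = len(tgt_ids) * np.exp(log_p) - counts
    g_V = np.outer(d_logits, h)
    g_b = d_logits
    g_c = (V_tgt.T @ d_logits) * h * (1.0 - h)  # back through the sigmoid
    g_W = np.zeros_like(W_src)
    np.add.at(g_W, src_ids, g_c)  # repeated words accumulate their gradient
    return loss, g_W, g_c, g_V, g_b


def train(pairs, vocab_en, vocab_fr, hidden=8, lr=0.1, epochs=200, seed=0):
    """Plain SGD, reconstructing English from French and French from English."""
    rng = np.random.default_rng(seed)
    p = {
        "W_en": rng.normal(0, 0.1, (vocab_en, hidden)),
        "W_fr": rng.normal(0, 0.1, (vocab_fr, hidden)),
        "c": np.zeros(hidden),
        "V_en": rng.normal(0, 0.1, (vocab_en, hidden)),
        "b_en": np.zeros(vocab_en),
        "V_fr": rng.normal(0, 0.1, (vocab_fr, hidden)),
        "b_fr": np.zeros(vocab_fr),
    }
    history = []
    for _ in range(epochs):
        total = 0.0
        for en, fr in pairs:
            directions = ((fr, en, "W_fr", "V_en", "b_en"),
                          (en, fr, "W_en", "V_fr", "b_fr"))
            for src, tgt, W, V, b in directions:
                loss, gW, gc, gV, gb = loss_and_grads(
                    src, tgt, p[W], p["c"], p[V], p[b])
                p[W] -= lr * gW
                p["c"] -= lr * gc
                p[V] -= lr * gV
                p[b] -= lr * gb
                total += loss
        history.append(total)
    return p, history


if __name__ == "__main__":
    params, history = train(PAIRS, len(EN_VOCAB), len(FR_VOCAB))
    print(f"loss: first epoch {history[0]:.3f}, last epoch {history[-1]:.3f}")
```

Because both languages feed the same hidden layer, the rows of `W_en` and `W_fr` are pushed into a common representation space without any word-level alignment, which is the property the abstract relies on. In the cross-lingual classification setting, one would then train a classifier on encoded English documents and apply it to encoded French documents; that step is not shown here.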
