Albert Murienne

A Novel Augmented Reality Ultrasound Framework Using an RGB-D Camera and a 3D-printed Marker

May 09, 2022

Abstract:Purpose. Ability to locate and track ultrasound images in the 3D operating space is of great benefit for multiple clinical applications. This is often accomplished by tracking the probe using a precise but expensive optical or electromagnetic tracking system. Our goal is to develop a simple and low cost augmented reality echography framework using a standard RGB-D Camera. Methods. A prototype system consisting of an Occipital Structure Core RGB-D camera, a specifically-designed 3D marker, and a fast point cloud registration algorithm FaVoR was developed and evaluated on an Ultrasonix ultrasound system. The probe was calibrated on a 3D-printed N-wire phantom using the software PLUS toolkit. The proposed calibration method is simplified, requiring no additional markers or sensors attached to the phantom. Also, a visualization software based on OpenGL was developed for the augmented reality application. Results. The calibrated probe was used to augment a real-world video in a simulated needle insertion scenario. The ultrasound images were rendered on the video, and visually-coherent results were observed. We evaluated the end-to-end accuracy of our AR US framework on localizing a cube of 5 cm size. From our two experiments, the target pose localization error ranges from 5.6 to 5.9 mm and from -3.9 to 4.2 degrees. Conclusion. We believe that with the potential democratization of RGB-D cameras integrated in mobile devices and AR glasses in the future, our prototype solution may facilitate the use of 3D freehand ultrasound in clinical routine. Future work should include a more rigorous and thorough evaluation, by comparing the calibration accuracy with those obtained by commercial tracking solutions in both simulated and real medical scenarios.

YOLOff: You Only Learn Offsets for robust 6DoF object pose estimation

Feb 25, 2020

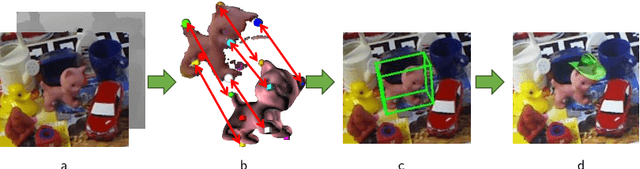

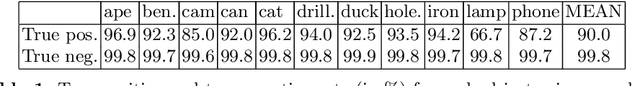

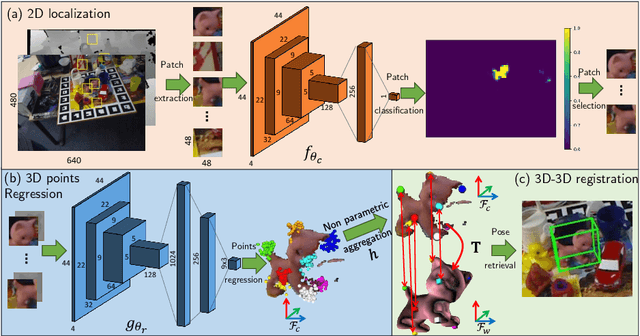

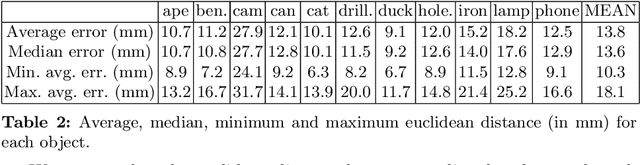

Abstract:Estimating the 3D translation and orientation of an object is a challenging task that can be considered within augmented reality or robotic applications. In this paper, we propose a novel approach to perform 6 DoF object pose estimation from a single RGB-D image in cluttered scenes. We adopt an hybrid pipeline in two stages: data-driven and geometric respectively. The first data-driven step consists of a classification CNN to estimate the object 2D location in the image from local patches, followed by a regression CNN trained to predict the 3D location of a set of keypoints in the camera coordinate system. We robustly perform local voting to recover the location of each keypoint in the camera coordinate system. To extract the pose information, the geometric step consists in aligning the 3D points in the camera coordinate system with the corresponding 3D points in world coordinate system by minimizing a registration error, thus computing the pose. Our experiments on the standard dataset LineMod show that our approach more robust and accurate than state-of-the-art methods.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge