
Aditya Rajguru

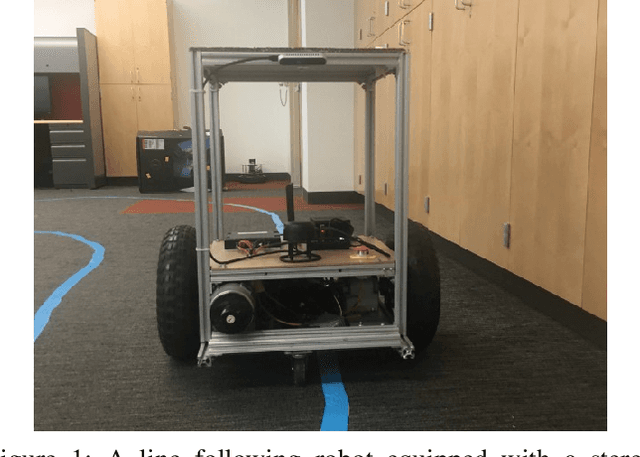

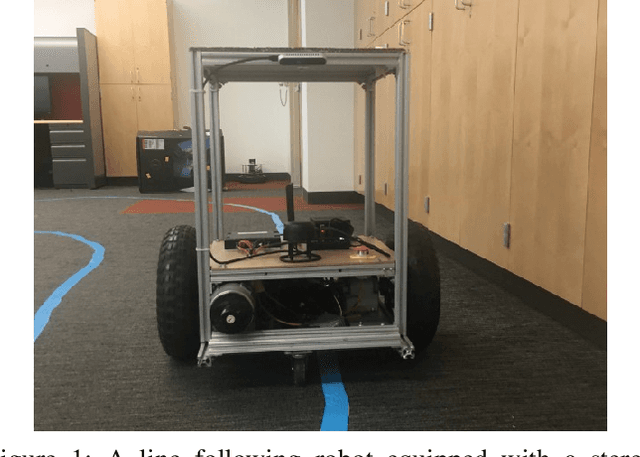

Camera-Based Adaptive Trajectory Guidance via Neural Networks

Jan 09, 2020

Figures and Tables:

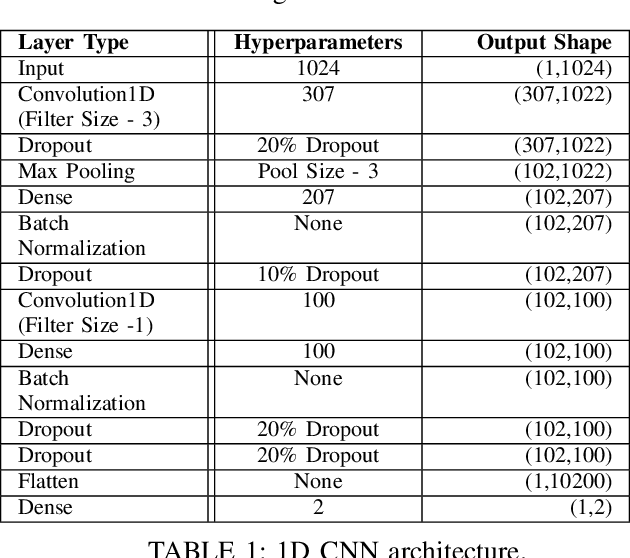

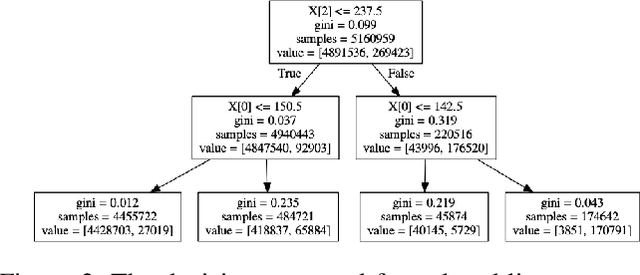

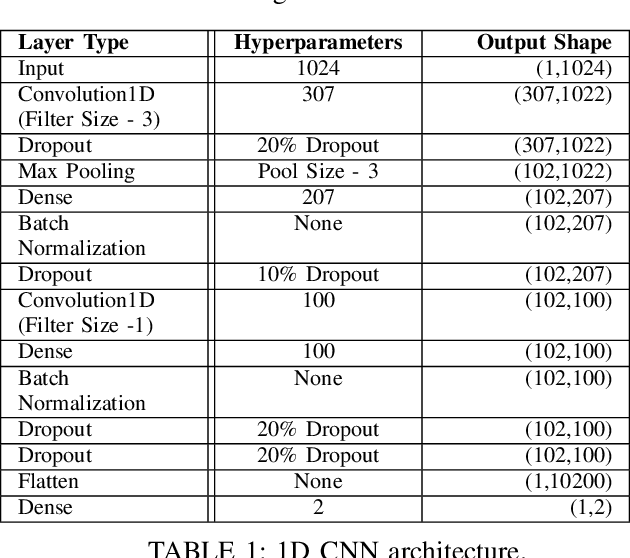

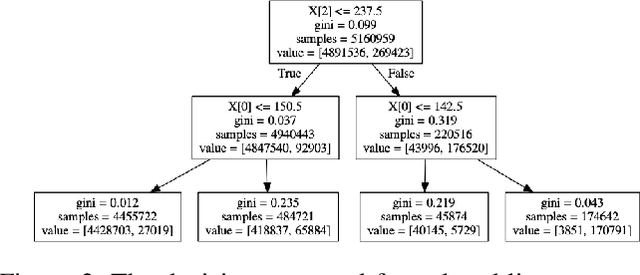

Abstract: In this paper, we introduce a novel method to capture visual trajectories for navigating an indoor robot in dynamic settings using streaming image data. First, an image processing pipeline is proposed to accurately segment trajectories from noisy backgrounds. Next, the captured trajectories are used to design, train, and compare two neural network architectures for predicting acceleration and steering commands for a line-following robot over a continuous space in real time. Lastly, experimental results demonstrate the performance of the neural networks versus human teleoperation of the robot and the viability of the system in environments with occlusions and/or low-light conditions.

* To be published in the 2020 6th International Conference on Mechatronics and Robotics Engineering
