Aditya Malte

NVIDIA Nemotron Nano 2: An Accurate and Efficient Hybrid Mamba-Transformer Reasoning Model

Aug 21, 2025

Abstract: We introduce Nemotron-Nano-9B-v2, a hybrid Mamba-Transformer language model designed to increase throughput for reasoning workloads while achieving state-of-the-art accuracy compared to similarly-sized models. Nemotron-Nano-9B-v2 builds on the Nemotron-H architecture, in which the majority of the self-attention layers in the common Transformer architecture are replaced with Mamba-2 layers, to achieve improved inference speed when generating the long thinking traces needed for reasoning. We create Nemotron-Nano-9B-v2 by first pre-training a 12-billion-parameter model (Nemotron-Nano-12B-v2-Base) on 20 trillion tokens using an FP8 training recipe. After aligning Nemotron-Nano-12B-v2-Base, we employ the Minitron strategy to compress and distill the model with the goal of enabling inference on up to 128k tokens on a single NVIDIA A10G GPU (22GiB of memory, bfloat16 precision). Compared to existing similarly-sized models (e.g., Qwen3-8B), we show that Nemotron-Nano-9B-v2 achieves on-par or better accuracy on reasoning benchmarks while achieving up to 6x higher inference throughput in reasoning settings like 8k input and 16k output tokens. We are releasing Nemotron-Nano-9B-v2, Nemotron-Nano-12B-v2-Base, and Nemotron-Nano-9B-v2-Base checkpoints along with the majority of our pre- and post-training datasets on Hugging Face.

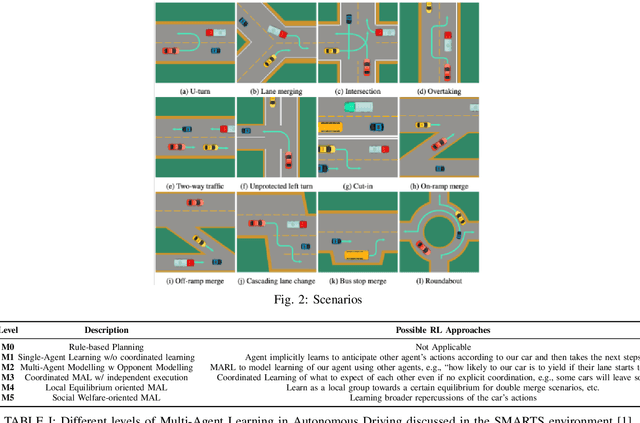

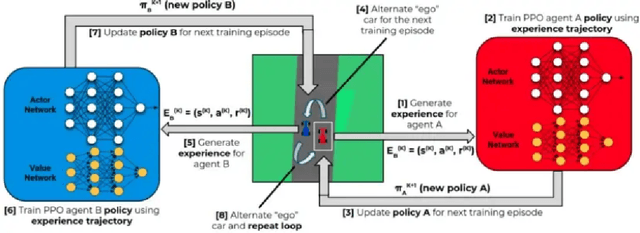

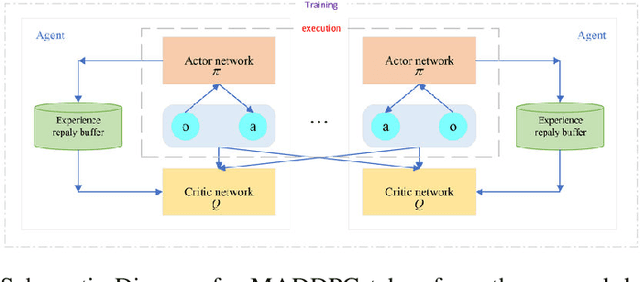

On Multi-Agent Deep Deterministic Policy Gradients and their Explainability for SMARTS Environment

Jan 20, 2023

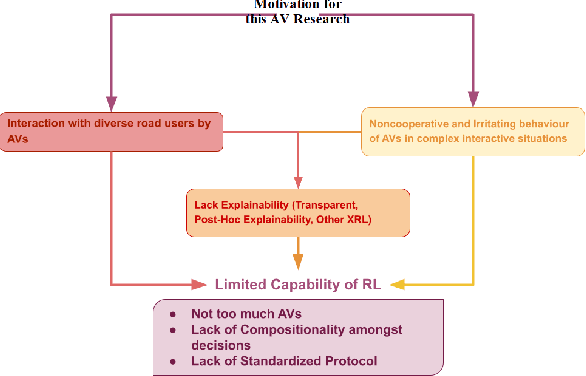

Abstract: Multi-agent reinforcement learning (MARL) is one of the complex problems in the autonomous driving literature that hampers the release of fully autonomous vehicles today. Several simulators have been iterated on since their inception to address the problem of complex multi-agent scenarios in autonomous driving. One such simulator, SMARTS, emphasizes the importance of cooperative multi-agent learning. For this problem, we discuss two approaches, MAPPO and MADDPG, which are based on on-policy and off-policy RL, respectively. We compare our results with the state-of-the-art results for this challenge and discuss potential areas of improvement, along with the explainability of these approaches in conjunction with waypoints in the SMARTS environment.

Evolution of transfer learning in natural language processing

Oct 16, 2019

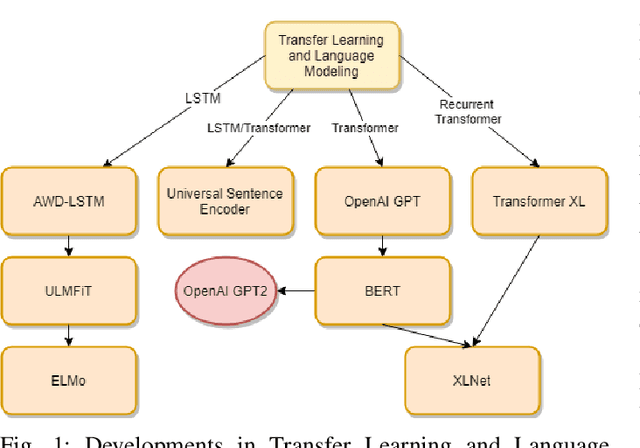

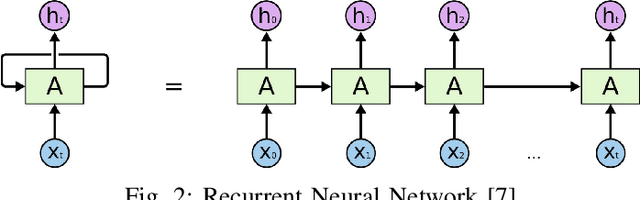

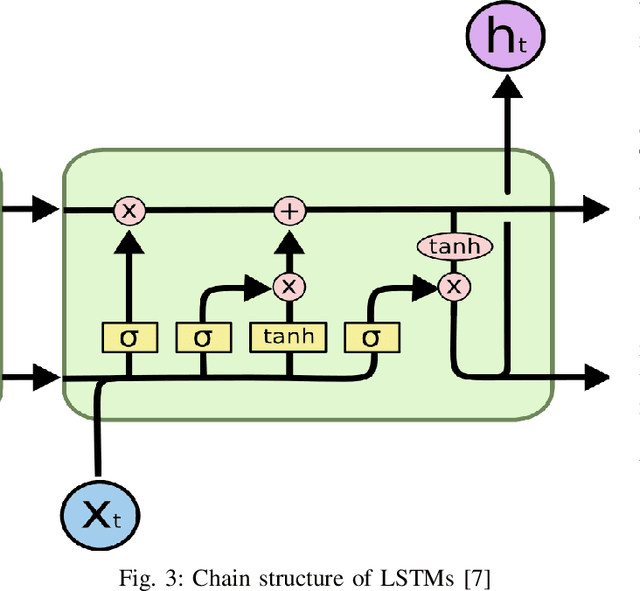

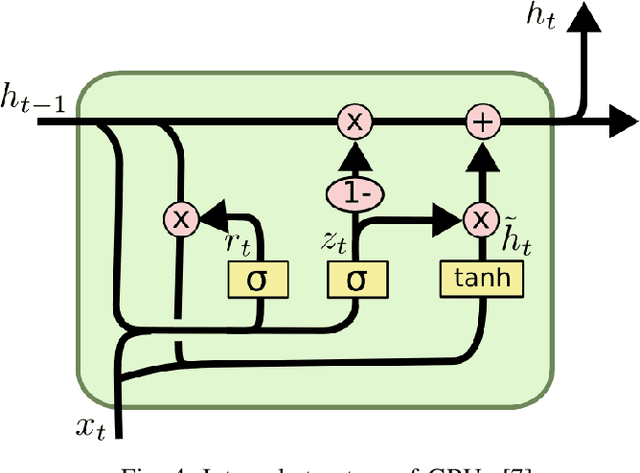

Abstract: In this paper, we present a study of the recent advancements which have helped bring Transfer Learning to NLP through the use of semi-supervised training. We discuss cutting-edge methods and architectures such as BERT, GPT, ELMo, and ULMFiT, among others. Classically, tasks in natural language processing have been performed through rule-based and statistical methodologies. However, owing to the vast nature of natural languages, these methods did not generalise well and failed to learn the nuances of language. Thus, machine learning algorithms such as Naive Bayes and decision trees, coupled with traditional models such as Bag-of-Words and N-grams, were used to overcome this problem. Eventually, with the advent of advanced recurrent neural network architectures such as the LSTM, we were able to achieve state-of-the-art performance in several natural language processing tasks such as text classification and machine translation. We talk about how Transfer Learning has brought about the well-known ImageNet moment for NLP. Several advanced architectures such as the Transformer and its variants have allowed practitioners to leverage knowledge gained from unrelated tasks to drastically speed up convergence and provide better performance on the target task. This survey represents an effort at providing a succinct yet complete understanding of the recent advances in natural language processing using deep learning, with a special focus on detailing transfer learning and its potential advantages.
