Aditya Kuppa

Enhancing Illicit Activity Detection using XAI: A Multimodal Graph-LLM Framework

Oct 20, 2023

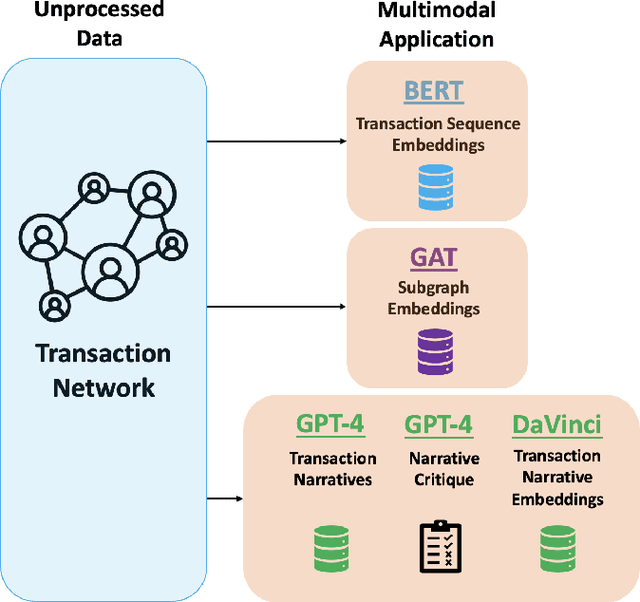

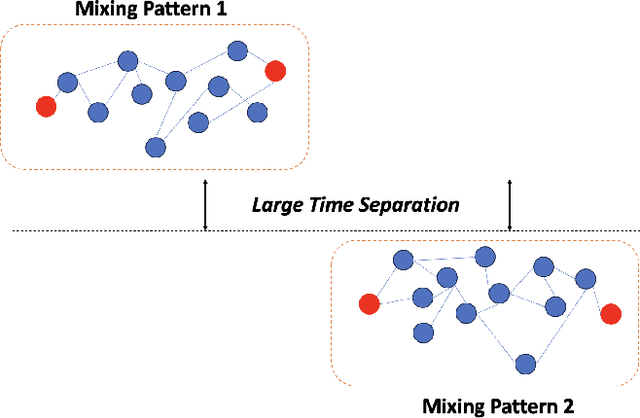

Abstract:Financial cybercrime prevention is an increasing issue with many organisations and governments. As deep learning models have progressed to identify illicit activity on various financial and social networks, the explainability behind the model decisions has been lacklustre with the investigative analyst at the heart of any deep learning platform. In our paper, we present a state-of-the-art, novel multimodal proactive approach to addressing XAI in financial cybercrime detection. We leverage a triad of deep learning models designed to distill essential representations from transaction sequencing, subgraph connectivity, and narrative generation to significantly streamline the analyst's investigative process. Our narrative generation proposal leverages LLM to ingest transaction details and output contextual narrative for an analyst to understand a transaction and its metadata much further.

Finding Rats in Cats: Detecting Stealthy Attacks using Group Anomaly Detection

May 20, 2019

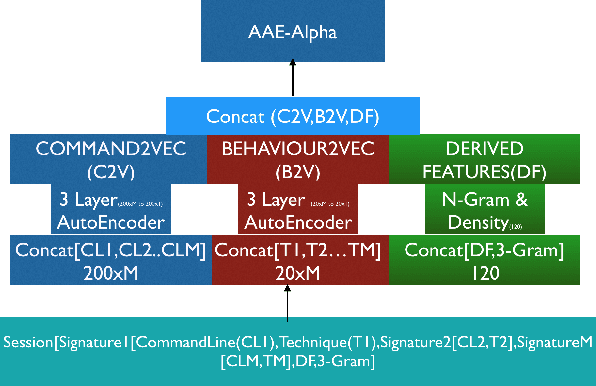

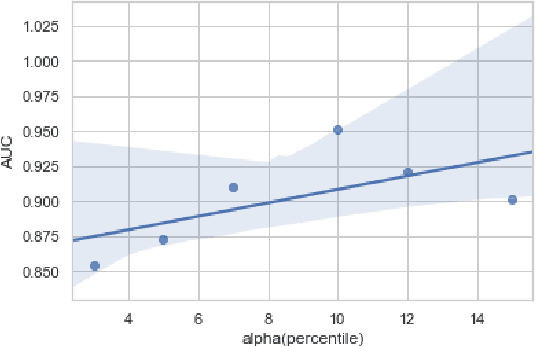

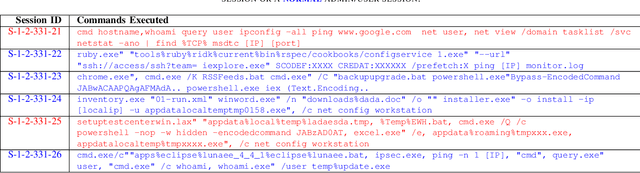

Abstract:Advanced attack campaigns span across multiple stages and stay stealthy for long time periods. There is a growing trend of attackers using off-the-shelf tools and pre-installed system applications (such as \emph{powershell} and \emph{wmic}) to evade the detection because the same tools are also used by system administrators and security analysts for legitimate purposes for their routine tasks. To start investigations, event logs can be collected from operational systems; however, these logs are generic enough and it often becomes impossible to attribute a potential attack to a specific attack group. Recent approaches in the literature have used anomaly detection techniques, which aim at distinguishing between malicious and normal behavior of computers or network systems. Unfortunately, anomaly detection systems based on point anomalies are too rigid in a sense that they could miss the malicious activity and classify the attack, not an outlier. Therefore, there is a research challenge to make better detection of malicious activities. To address this challenge, in this paper, we leverage Group Anomaly Detection (GAD), which detects anomalous collections of individual data points. Our approach is to build a neural network model utilizing Adversarial Autoencoder (AAE-$\alpha$) in order to detect the activity of an attacker who leverages off-the-shelf tools and system applications. In addition, we also build \textit{Behavior2Vec} and \textit{Command2Vec} sentence embedding deep learning models specific for feature extraction tasks. We conduct extensive experiments to evaluate our models on real-world datasets collected for a period of two months. The empirical results demonstrate that our approach is effective and robust in discovering targeted attacks, pen-tests, and attack campaigns leveraging custom tools.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge