Adesh Bansode

A Comparative study of Hyper-Parameter Optimization Tools

Jan 17, 2022

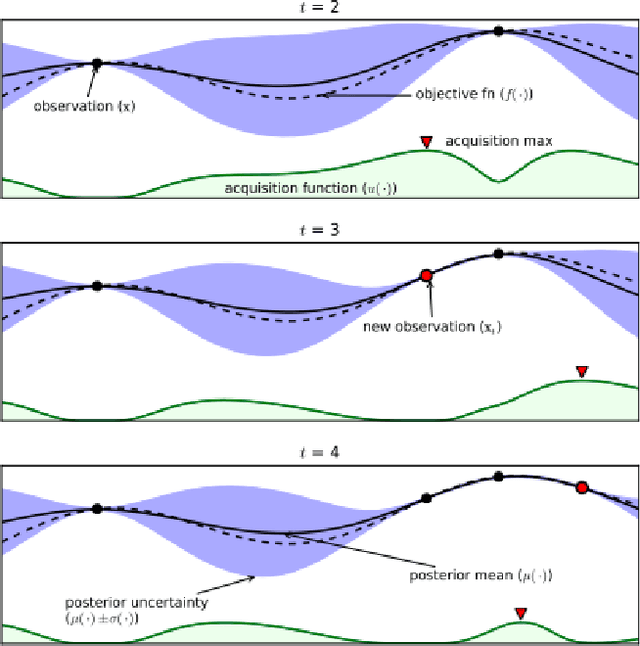

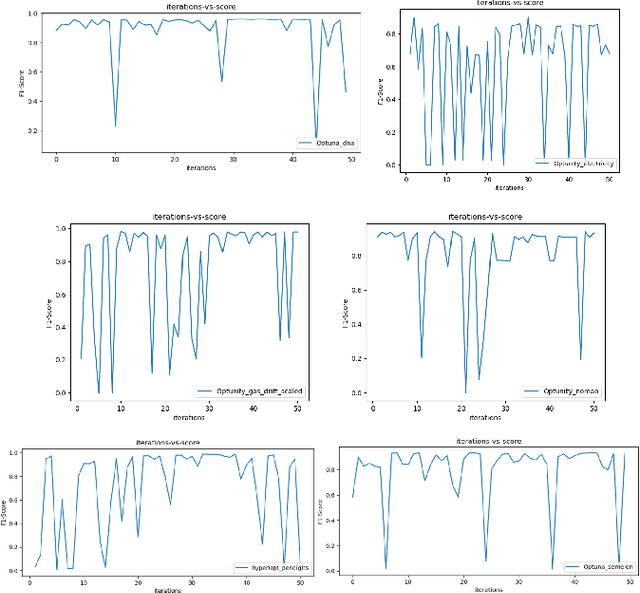

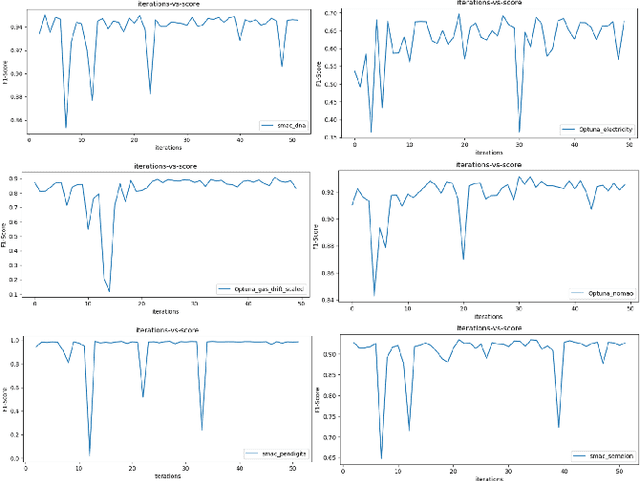

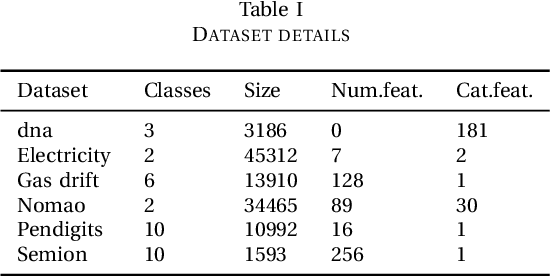

Abstract:Most of the machine learning models have associated hyper-parameters along with their parameters. While the algorithm gives the solution for parameters, its utility for model performance is highly dependent on the choice of hyperparameters. For a robust performance of a model, it is necessary to find out the right hyper-parameter combination. Hyper-parameter optimization (HPO) is a systematic process that helps in finding the right values for them. The conventional methods for this purpose are grid search and random search and both methods create issues in industrial-scale applications. Hence a set of strategies have been recently proposed based on Bayesian optimization and evolutionary algorithm principles that help in runtime issues in a production environment and robust performance. In this paper, we compare the performance of four python libraries, namely Optuna, Hyper-opt, Optunity, and sequential model-based algorithm configuration (SMAC) that has been proposed for hyper-parameter optimization. The performance of these tools is tested using two benchmarks. The first one is to solve a combined algorithm selection and hyper-parameter optimization (CASH) problem The second one is the NeurIPS black-box optimization challenge in which a multilayer perception (MLP) architecture has to be chosen from a set of related architecture constraints and hyper-parameters. The benchmarking is done with six real-world datasets. From the experiments, we found that Optuna has better performance for CASH problem and HyperOpt for MLP problem.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge