Abol Basher

Convolutional Neural Network-based Efficient Dense Point Cloud Generation using Unsigned Distance Fields

Mar 22, 2022

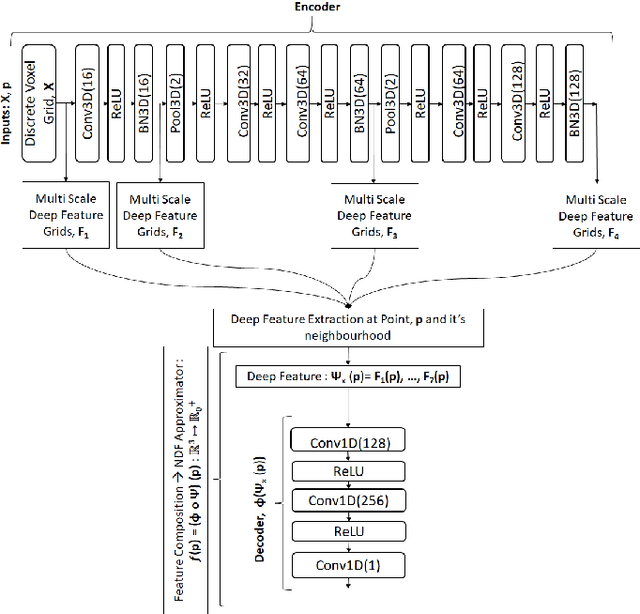

Abstract:Dense point cloud generation from a sparse or incomplete point cloud is a crucial and challenging problem in 3D computer vision and computer graphics. So far, the existing methods are either computationally too expensive, suffer from limited resolution, or both. In addition, some methods are strictly limited to watertight surfaces -- another major obstacle for a number of applications. To address these issues, we propose a lightweight Convolutional Neural Network that learns and predicts the unsigned distance field for arbitrary 3D shapes for dense point cloud generation using the recently emerged concept of implicit function learning. Experiments demonstrate that the proposed architecture achieves slightly better quality results than the state of the art with 87% less model parameters and 40% less GPU memory usage.

LightSAL: Lightweight Sign Agnostic Learning for Implicit Surface Representation

Mar 26, 2021

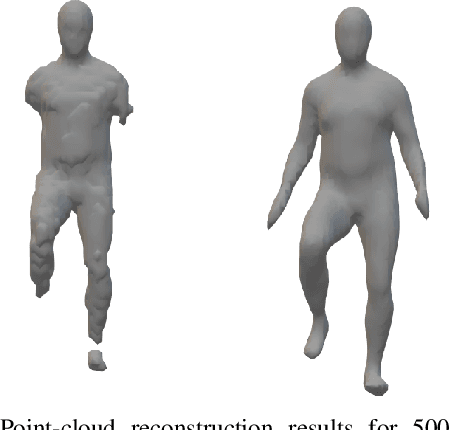

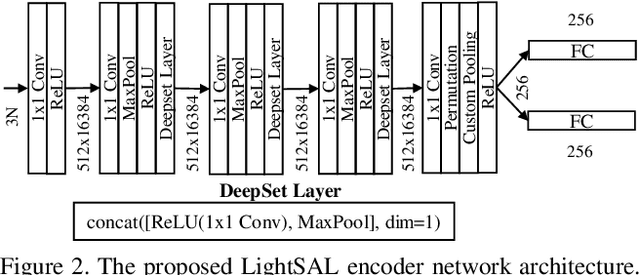

Abstract:Recently, several works have addressed modeling of 3D shapes using deep neural networks to learn implicit surface representations. Up to now, the majority of works have concentrated on reconstruction quality, paying little or no attention to model size or training time. This work proposes LightSAL, a novel deep convolutional architecture for learning 3D shapes; the proposed work concentrates on efficiency both in network training time and resulting model size. We build on the recent concept of Sign Agnostic Learning for training the proposed network, relying on signed distance fields, with unsigned distance as ground truth. In the experimental section of the paper, we demonstrate that the proposed architecture outperforms previous work in model size and number of required training iterations, while achieving equivalent accuracy. Experiments are based on the D-Faust dataset that contains 41k 3D scans of human shapes. The proposed model has been implemented in PyTorch.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge