Abhishek Unnam

Unsupervised Out-of-Distribution Dialect Detection with Mahalanobis Distance

Aug 09, 2023

Abstract: Dialect classification is used in a variety of applications, such as machine translation and speech recognition, to improve the overall performance of the system. In a real-world scenario, a deployed dialect classification model can encounter anomalous inputs that differ from the training data distribution, also called out-of-distribution (OOD) samples. These OOD samples can lead to unexpected outputs, as their dialects are unseen during model training. Out-of-distribution detection is a new research area that has received little attention in the context of dialect classification. To address this, we propose a simple yet effective unsupervised Mahalanobis distance feature-based method to detect out-of-distribution samples. We utilize the latent embeddings from all intermediate layers of a wav2vec 2.0 transformer-based dialect classifier model for multi-task learning. Our proposed approach significantly outperforms other state-of-the-art OOD detection methods.
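As a rough illustration of the general idea behind Mahalanobis-distance OOD scoring, the sketch below fits one Gaussian per class with a shared covariance and scores each input by its smallest squared distance to any class mean. It is not the paper's implementation: the function names `fit_gaussians` and `mahalanobis_score` are hypothetical, the features are synthetic stand-ins for a single layer's embeddings, and the paper's combination of scores across all wav2vec 2.0 layers is not reproduced here.

```python
import numpy as np


def fit_gaussians(features, labels):
    """Estimate per-class means and a shared precision matrix (assumed setup)."""
    classes = np.unique(labels)
    means = np.stack([features[labels == c].mean(axis=0) for c in classes])
    # Center every sample on its own class mean, then pool the covariance.
    centered = features - means[np.searchsorted(classes, labels)]
    cov = centered.T @ centered / len(features)
    return means, np.linalg.pinv(cov)


def mahalanobis_score(x, means, precision):
    """Smallest squared Mahalanobis distance to any class mean; higher = more OOD."""
    diffs = x[:, None, :] - means[None, :, :]  # shape (n, k, d)
    d2 = np.einsum("nkd,de,nke->nk", diffs, precision, diffs)
    return d2.min(axis=1)


# Synthetic stand-in for embeddings of two in-distribution dialect classes.
rng = np.random.default_rng(0)
train = np.concatenate([rng.normal(0.0, 1.0, (200, 8)),
                        rng.normal(5.0, 1.0, (200, 8))])
train_labels = np.repeat([0, 1], 200)
means, precision = fit_gaussians(train, train_labels)

in_dist = rng.normal(0.0, 1.0, (50, 8))   # resembles class 0
ood = rng.normal(20.0, 1.0, (50, 8))      # far from both classes
in_scores = mahalanobis_score(in_dist, means, precision)
ood_scores = mahalanobis_score(ood, means, precision)
print(in_scores.mean(), ood_scores.mean())
```

In a deployment along these lines, a threshold on the score would be chosen from in-distribution validation data only, which is what makes this kind of detector usable without any OOD examples at training time.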

Grading video interviews with fairness considerations

Jul 02, 2020

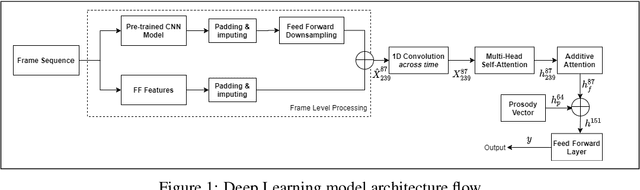

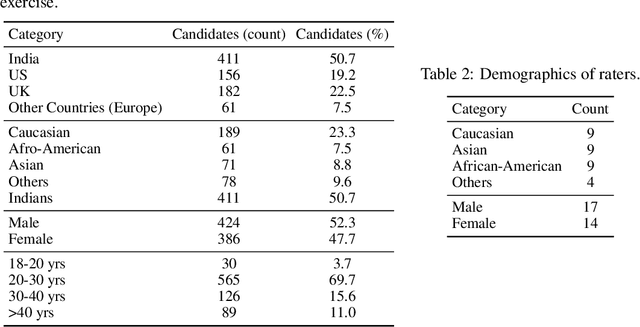

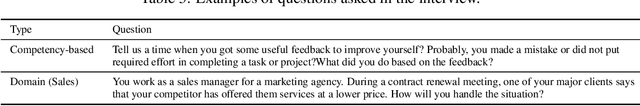

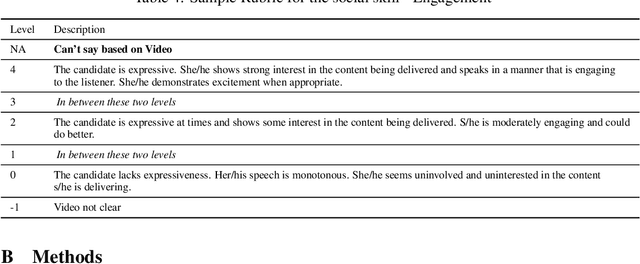

Abstract: There has been considerable interest in predicting human emotions and traits using facial images and videos. Lately, such work has come under criticism for poor labeling practices, inconclusive prediction results and fairness considerations. We present a careful methodology to automatically derive social skills of candidates based on their video responses to interview questions. For the first time, we include video data from multiple countries encompassing multiple ethnicities. The videos were also rated by individuals from multiple racial backgrounds, following several best practices, to achieve consensus and an unbiased measure of social skills. We develop two machine-learning models to predict social skills. The first model employs expert guidance to use plausibly causal features. The second uses deep learning and depends solely on the empirical correlations present in the data. We compare the errors of both models, study the specificity of the models and make recommendations. We further analyze fairness by studying the errors of the models by race and gender. We verify the usefulness of our models by determining how well they predict interview outcomes for candidates. Overall, the study provides strong support for using artificial intelligence for video interview scoring, while taking care of fairness and ethical considerations.
