Abdullah Salama

Pruning at a Glance: Global Neural Pruning for Model Compression

Dec 03, 2019

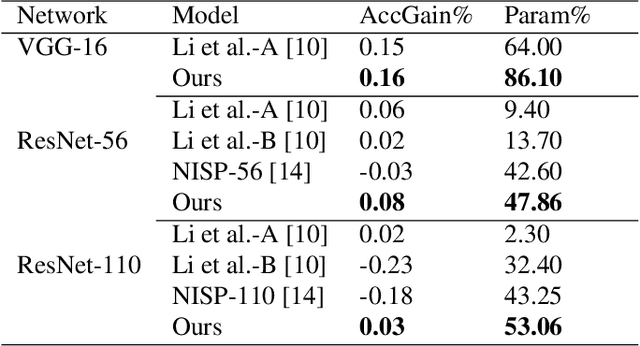

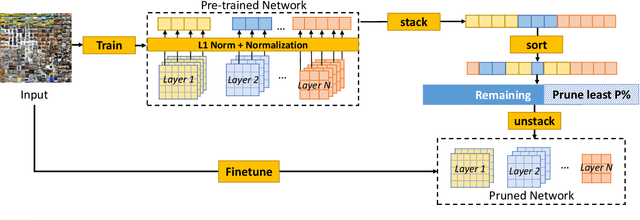

Abstract: Deep learning models have become the dominant approach in several areas due to their high performance. Unfortunately, the size, and hence the computational requirements, of such models can be considerably high. This limits deployment on memory- and battery-constrained devices such as mobile phones or embedded systems. To address these limitations, we propose a novel and simple pruning method that compresses neural networks by removing entire filters and neurons according to a global threshold across the network, without any pre-calculation of layer sensitivity. The resulting model is compact, non-sparse, has the same accuracy as the non-compressed model, and, most importantly, requires no special infrastructure for deployment. We demonstrate the viability of our method by producing highly compressed models, namely VGG-16, ResNet-56, and ResNet-110 on CIFAR10, without losing any performance compared to the baseline, as well as ResNet-34 and ResNet-50 on ImageNet without a significant loss of accuracy. We also provide a well-retrained, 30% compressed ResNet-50 that slightly surpasses the base model accuracy. Additionally, we compress AlexNet and LeNet-5 by more than 56% and 97%, respectively. Interestingly, the resulting models' pruning patterns are highly similar to those of other methods that use a layer-sensitivity pre-calculation step. Our method not only exhibits good performance but is also easy to implement.
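To make the idea of a single network-wide threshold concrete, here is a minimal sketch in plain Python. It is not the authors' implementation: the abstract does not say how filters are scored, so the mean absolute weight used here is an assumption, as are the names `filter_scores` and `global_prune`, the toy layer dictionary, and the rule that a layer always keeps at least one filter.

```python
from typing import Dict, List

Filter = List[float]  # flattened weights of one filter or neuron


def filter_scores(layers: Dict[str, List[Filter]]) -> Dict[str, List[float]]:
    """Score each filter by its mean absolute weight.

    Assumption: the abstract does not name the importance measure,
    so this metric is only a stand-in for illustration.
    """
    return {
        name: [sum(abs(w) for w in f) / len(f) for f in filters]
        for name, filters in layers.items()
    }


def global_prune(
    layers: Dict[str, List[Filter]], threshold: float
) -> Dict[str, List[Filter]]:
    """Drop whole filters whose score is below one threshold shared by all layers."""
    scores = filter_scores(layers)
    pruned: Dict[str, List[Filter]] = {}
    for name, filters in layers.items():
        kept = [f for f, s in zip(filters, scores[name]) if s >= threshold]
        if not kept:
            # Assumption: never remove a layer entirely; keep its best filter.
            best = max(range(len(filters)), key=lambda i: scores[name][i])
            kept = [filters[best]]
        pruned[name] = kept
    return pruned


# Hypothetical toy network: two layers with flattened filter weights.
toy = {
    "conv1": [[0.5, -0.5], [0.01, 0.02], [0.3, 0.1]],
    "fc1": [[0.02, 0.01], [0.9, -0.7]],
}
pruned = global_prune(toy, threshold=0.1)
kept_counts = {name: len(filters) for name, filters in pruned.items()}
```

Because whole filters are removed rather than individual weights, the result in this sketch is a smaller dense model rather than a sparse one, which matches the abstract's point that no special deployment infrastructure is needed. In a real network, removing a filter also requires dropping the matching input channel in the following layer, and the pruned model is typically retrained afterwards; both steps are omitted here.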
