Åvald Åslaugson Sommervoll

Exploring reinforcement learning for incident response in autonomous military vehicles

Oct 28, 2024

Abstract:Unmanned vehicles able to conduct advanced operations without human intervention are being developed at a fast pace for many purposes. Not surprisingly, they are also expected to significantly change how military operations can be conducted. To leverage the potential of this new technology in a physically and logically contested environment, security risks are to be assessed and managed accordingly. Research on this topic points to autonomous cyber defence as one of the capabilities that may be needed to accelerate the adoption of these vehicles for military purposes. Here, we pursue this line of investigation by exploring reinforcement learning to train an agent that can autonomously respond to cyber attacks on unmanned vehicles in the context of a military operation. We first developed a simple simulation environment to quickly prototype and test some proof-of-concept agents for an initial evaluation. This agent was then applied to a more realistic simulation environment and finally deployed on an actual unmanned ground vehicle for even more realism. A key contribution of our work is demonstrating that reinforcement learning is a viable approach to train an agent that can be used for autonomous cyber defence on a real unmanned ground vehicle, even when trained in a simple simulation environment.

Simulating SQL Injection Vulnerability Exploitation Using Q-Learning Reinforcement Learning Agents

Jan 08, 2021

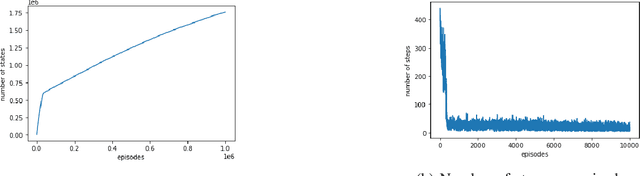

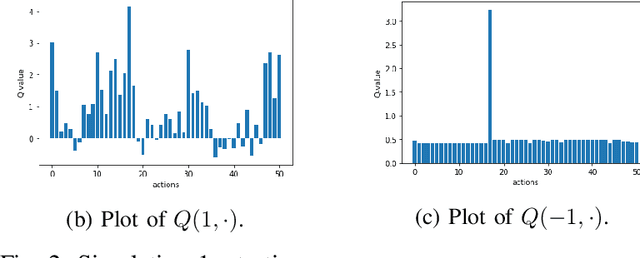

Abstract:In this paper, we propose a first formalization of the process of exploitation of SQL injection vulnerabilities. We consider a simplification of the dynamics of SQL injection attacks by casting this problem as a security capture-the-flag challenge. We model it as a Markov decision process, and we implement it as a reinforcement learning problem. We then deploy different reinforcement learning agents tasked with learning an effective policy to perform SQL injection; we design our training in such a way that the agent learns not just a specific strategy to solve an individual challenge but a more generic policy that may be applied to perform SQL injection attacks against any system instantiated randomly by our problem generator. We analyze the results in terms of the quality of the learned policy and in terms of convergence time as a function of the complexity of the challenge and the learning agent's complexity. Our work fits in the wider research on the development of intelligent agents for autonomous penetration testing and white-hat hacking, and our results aim to contribute to understanding the potential and the limits of reinforcement learning in a security environment.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge