Zero-Order One-Point Estimate with Distributed Stochastic Gradient-Tracking Technique

Paper and Code

Oct 11, 2022

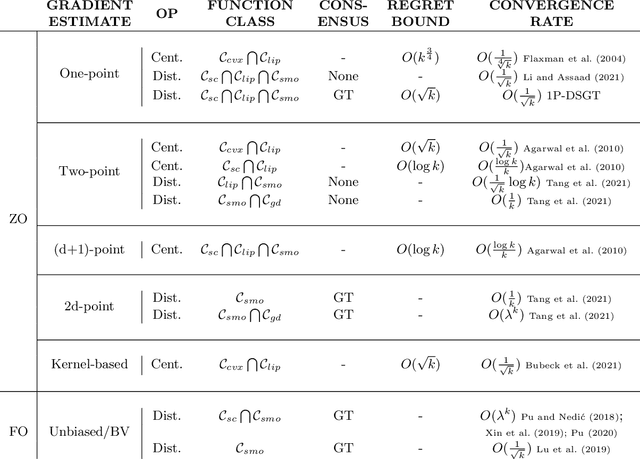

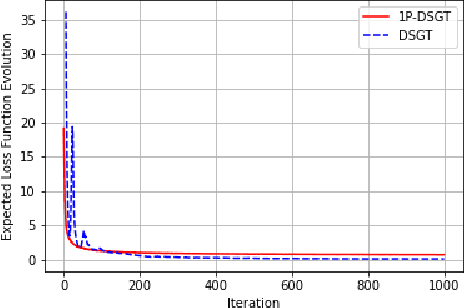

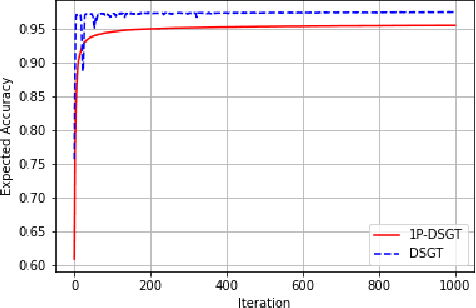

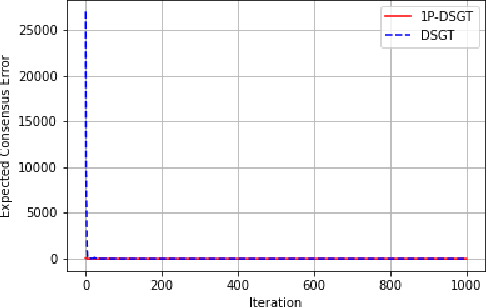

In this work, we consider a distributed multi-agent stochastic optimization problem, where each agent holds a local objective function that is smooth and convex, and that is subject to a stochastic process. The goal is for all agents to collaborate to find a common solution that optimizes the sum of these local functions. With the practical assumption that agents can only obtain noisy numerical function queries at exactly one point at a time, we extend the distributed stochastic gradient-tracking method to the bandit setting where we don't have an estimate of the gradient, and we introduce a zero-order (ZO) one-point estimate (1P-DSGT). We analyze the convergence of this novel technique for smooth and convex objectives using stochastic approximation tools, and we prove that it converges almost surely to the optimum. We then study the convergence rate for when the objectives are additionally strongly convex. We obtain a rate of $O(\frac{1}{\sqrt{k}})$ after a sufficient number of iterations $k > K_2$ which is usually optimal for techniques utilizing one-point estimators. We also provide a regret bound of $O(\sqrt{k})$, which is exceptionally good compared to the aforementioned techniques. We further illustrate the usefulness of the proposed technique using numerical experiments.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge