X Modality Assisting RGBT Object Tracking

Paper and Code

Dec 27, 2023

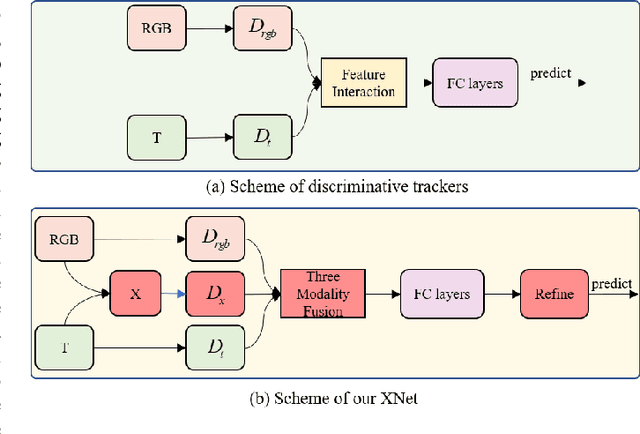

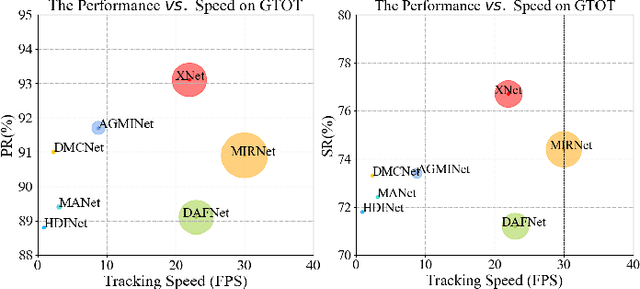

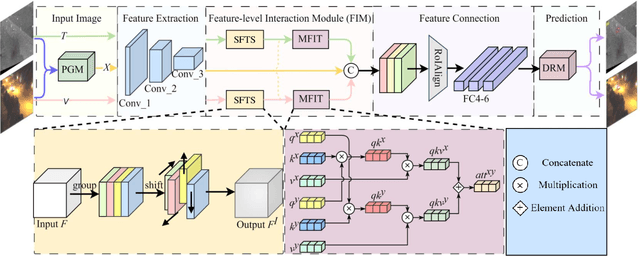

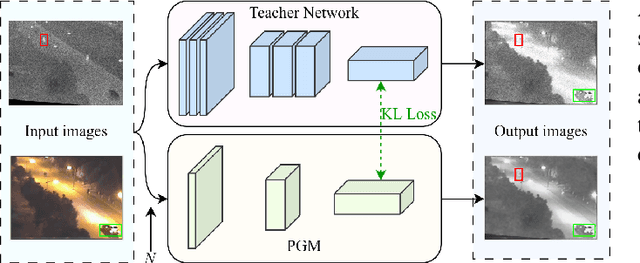

Learning robust multi-modal feature representations is critical for boosting tracking performance. To this end, we propose a novel X Modality Assisting Network (X-Net) to shed light on the impact of the fusion paradigm by decoupling the visual object tracking into three distinct levels, facilitating subsequent processing. Firstly, to tackle the feature learning hurdles stemming from significant differences between RGB and thermal modalities, a plug-and-play pixel-level generation module (PGM) is proposed based on self-knowledge distillation learning, which effectively generates X modality to bridge the gap between the dual patterns while reducing noise interference. Subsequently, to further achieve the optimal sample feature representation and facilitate cross-modal interactions, we propose a feature-level interaction module (FIM) that incorporates a mixed feature interaction transformer and a spatial-dimensional feature translation strategy. Ultimately, aiming at random drifting due to missing instance features, we propose a flexible online optimized strategy called the decision-level refinement module (DRM), which contains optical flow and refinement mechanisms. Experiments are conducted on three benchmarks to verify that the proposed X-Net outperforms state-of-the-art trackers.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge