White Box Methods for Explanations of Convolutional Neural Networks in Image Classification Tasks

Paper and Code

Apr 06, 2021

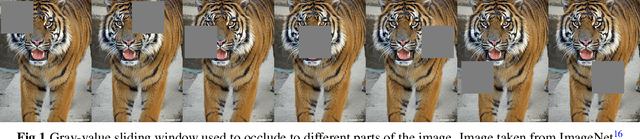

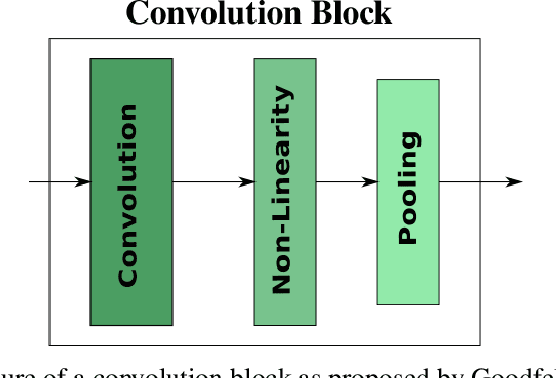

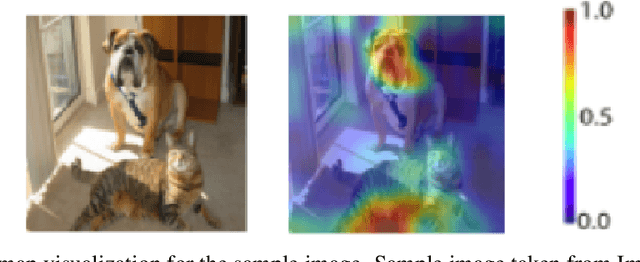

In recent years, deep learning has become prevalent to solve applications from multiple domains. Convolutional Neural Networks (CNNs) particularly have demonstrated state of the art performance for the task of image classification. However, the decisions made by these networks are not transparent and cannot be directly interpreted by a human. Several approaches have been proposed to explain to understand the reasoning behind a prediction made by a network. In this paper, we propose a topology of grouping these methods based on their assumptions and implementations. We focus primarily on white box methods that leverage the information of the internal architecture of a network to explain its decision. Given the task of image classification and a trained CNN, this work aims to provide a comprehensive and detailed overview of a set of methods that can be used to create explanation maps for a particular image, that assign an importance score to each pixel of the image based on its contribution to the decision of the network. We also propose a further classification of the white box methods based on their implementations to enable better comparisons and help researchers find methods best suited for different scenarios.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge