What is the best RNN-cell structure for forecasting each time series behavior?


Mar 15, 2022

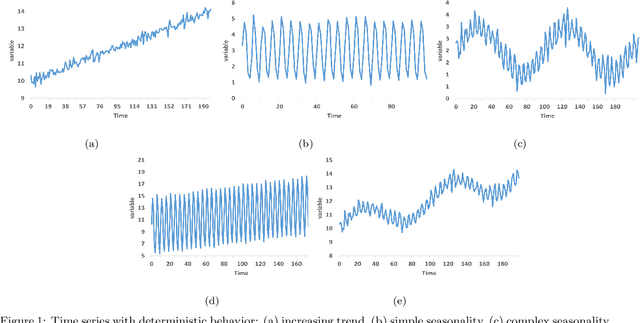

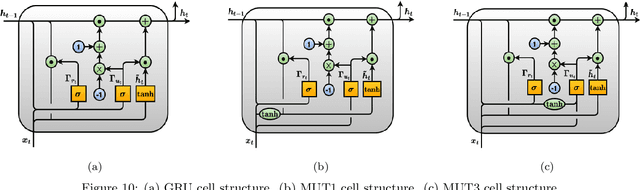

Time series forecasting is unquestionably of paramount importance in many fields. The most widely used machine learning models for time series forecasting are Recurrent Neural Networks (RNNs). These models are typically built using one of the three most popular cells: ELMAN, Long Short-Term Memory (LSTM), or Gated Recurrent Unit (GRU). Each cell has a different structure and implies a different computational cost. However, it is not clear why and when to use each RNN-cell structure. In fact, there is no comprehensive characterization of all possible time series behaviors, and no guidance on which RNN-cell structure is most suitable for each behavior. The objective of this study is two-fold: it presents a comprehensive taxonomy of all time series behaviors (deterministic, random-walk, nonlinear, long-memory, and chaotic), and it provides insights into the best RNN-cell structure for each behavior. We conducted two experiments: (1) the first evaluates and analyzes the role of each component in the LSTM-Vanilla cell by creating 11 variants, each based on a single alteration to its basic architecture (removing, adding, or substituting one cell component); (2) the second evaluates and analyzes the performance of 20 possible RNN-cell structures. Our results show that the MGU-SLIM3 cell is the most recommended for deterministic and nonlinear behaviors, the MGU-SLIM2 cell is the most suitable for random-walk behavior, the FB1 cell is advocated for long-memory behavior, and the LSTM-SLIM1 cell is recommended for chaotic behavior.
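One concrete way to see that the cells "imply a different computational cost" is to count trainable parameters per layer. The sketch below is an illustration only, not taken from the paper, and rests on stated assumptions: it uses the standard textbook formulations (Elman with one weight block, MGU with a forget gate plus candidate, GRU with three blocks, LSTM with four blocks and no peepholes), it assumes a single bias vector per block, and the function name `cell_params` and the layer sizes are hypothetical. It does not model the SLIM or FB variants named above.

```python
"""Rough per-layer parameter counts for common recurrent cells.

Assumption: every gate/candidate block has one input weight matrix
(hidden x input), one recurrent weight matrix (hidden x hidden) and a
single bias vector (hidden). Libraries that keep two bias vectors per
block (e.g. PyTorch's bias_ih and bias_hh) add `blocks * hidden` more.
"""

# Weight blocks per cell in the standard formulations (assumed here):
#   Elman: 1 (the hidden-state update itself)
#   MGU:   2 (forget gate + candidate state)
#   GRU:   3 (update gate, reset gate, candidate state)
#   LSTM:  4 (input, forget, output gates + cell candidate), no peepholes
BLOCKS_PER_CELL = {"Elman": 1, "MGU": 2, "GRU": 3, "LSTM": 4}


def cell_params(cell: str, n_input: int, n_hidden: int) -> int:
    """Return the trainable parameter count of one recurrent layer (hypothetical helper)."""
    blocks = BLOCKS_PER_CELL[cell]
    # input weights + recurrent weights + one bias vector, per block
    per_block = n_hidden * n_input + n_hidden * n_hidden + n_hidden
    return blocks * per_block


if __name__ == "__main__":
    # Hypothetical sizes: a univariate series (1 input) and 32 hidden units.
    for name in BLOCKS_PER_CELL:
        print(f"{name:5s} {cell_params(name, n_input=1, n_hidden=32):6d}")
```

For a univariate input and 32 hidden units, the counts are 1088 (Elman), 2176 (MGU), 3264 (GRU) and 4352 (LSTM): under these assumptions the cost grows linearly with the number of gate blocks, which is one reason lighter gated cells can be attractive when they forecast as well as the LSTM.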
