What do Deep Neural Networks Learn in Medical Images?

Paper and Code

Aug 01, 2022

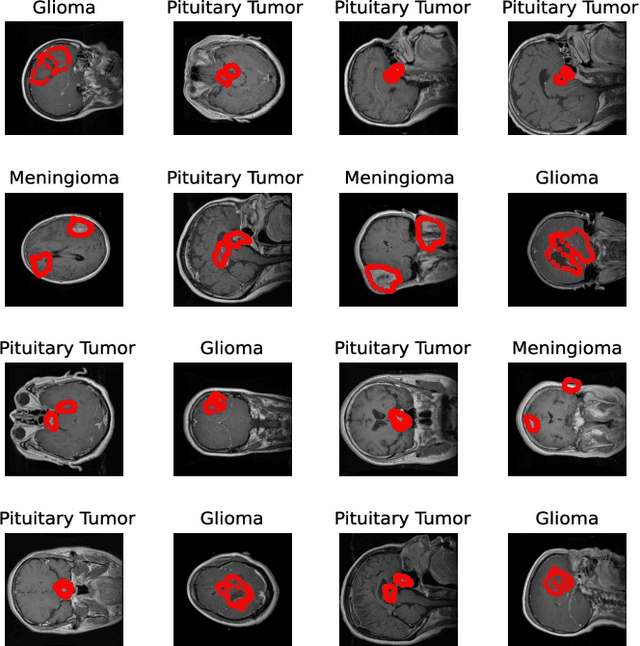

Deep learning is increasingly gaining rapid adoption in healthcare to help improve patient outcomes. This is more so in medical image analysis which requires extensive training to gain the requisite expertise to become a trusted practitioner. However, while deep learning techniques have continued to provide state-of-the-art predictive performance, one of the primary challenges that stands to hinder this progress in healthcare is the opaque nature of the inference mechanism of these models. So, attribution has a vital role in building confidence in stakeholders for the predictions made by deep learning models to inform clinical decisions. This work seeks to answer the question: what do deep neural network models learn in medical images? In that light, we present a novel attribution framework using adaptive path-based gradient integration techniques. Results show a promising direction of building trust in domain experts to improve healthcare outcomes by allowing them to understand the input-prediction correlative structures, discover new bio-markers, and reveal potential model biases.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge