W-PoseNet: Dense Correspondence Regularized Pixel Pair Pose Regression

Paper and Code

Dec 26, 2019

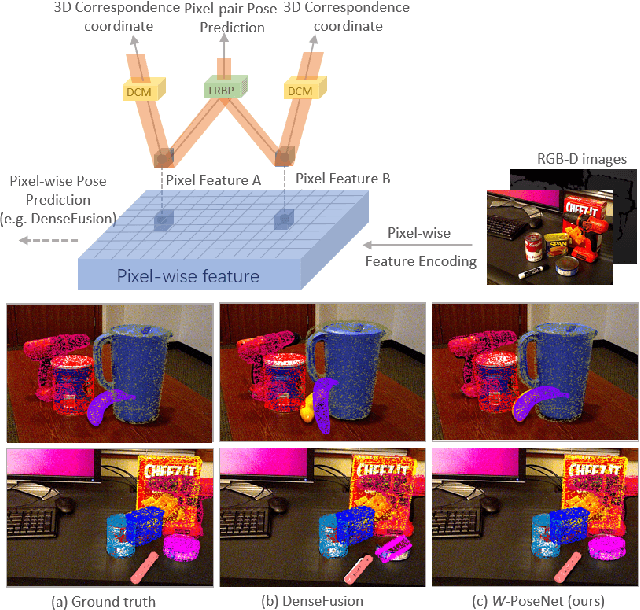

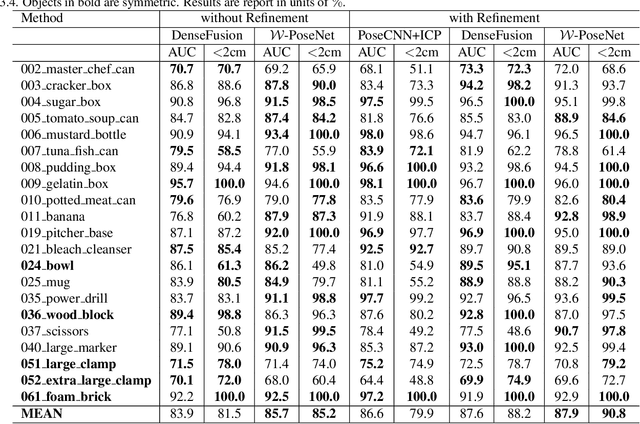

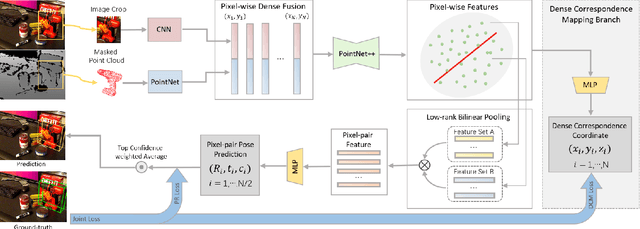

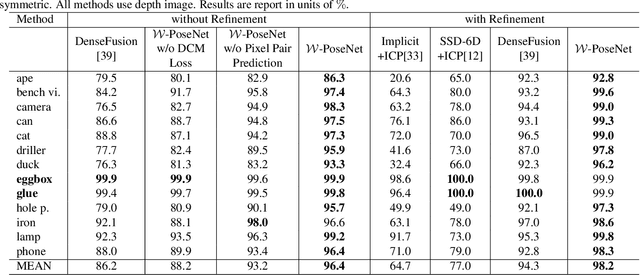

Solving 6D pose estimation is non-trivial to cope with intrinsic appearance and shape variation and severe inter-object occlusion, and is made more challenging in light of extrinsic large illumination changes and low quality of the acquired data under an uncontrolled environment. This paper introduces a novel pose estimation algorithm W-PoseNet, which densely regresses from input data to 6D pose and also 3D coordinates in model space. In other words, local features learned for pose regression in our deep network are regularized by explicitly learning pixel-wise correspondence mapping onto 3D pose-sensitive coordinates as an auxiliary task. Moreover, a sparse pair combination of pixel-wise features and soft voting on pixel-pair pose predictions are designed to improve robustness to inconsistent and sparse local features. Experiment results on the popular YCB-Video and LineMOD benchmarks show that the proposed W-PoseNet consistently achieves superior performance to the state-of-the-art algorithms.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge