Volumetric Data Fusion of External Depth and Onboard Proximity Data For Occluded Space Reduction


Oct 21, 2021

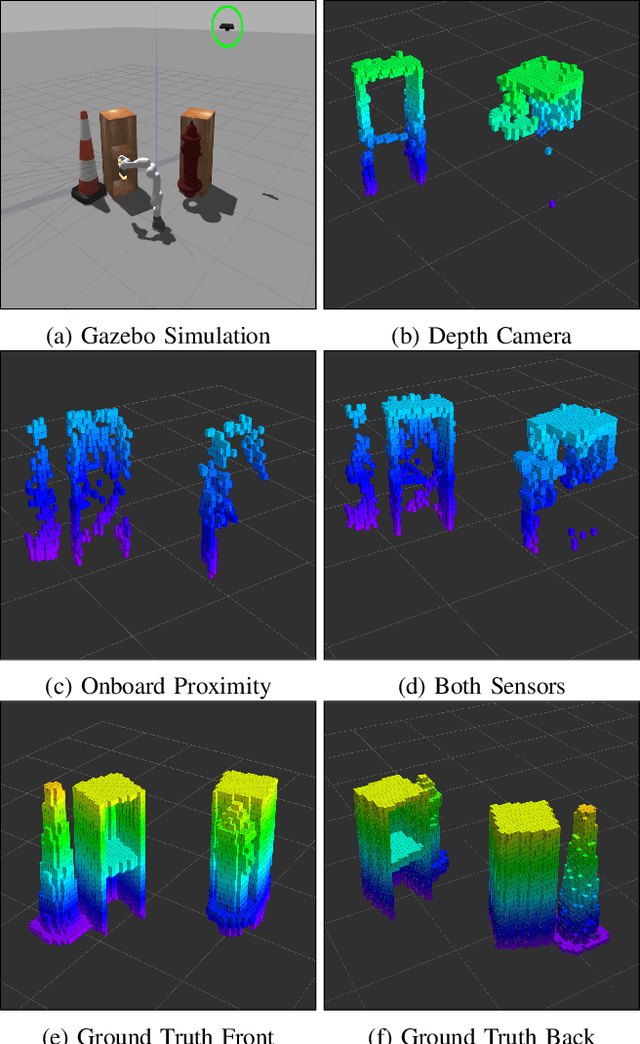

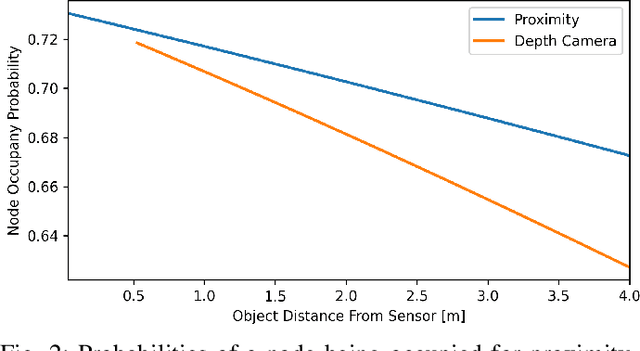

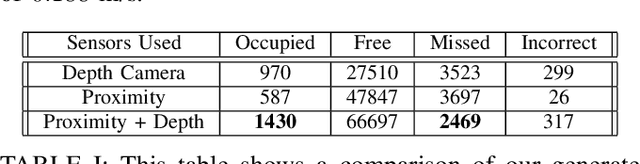

In this work, we present a method for the probabilistic fusion of external depth and onboard proximity data to form a volumetric 3-D map of a robot's environment. We extend the Octomap framework to update a representation of the area around the robot according to each sensor's optimal range of operation. Areas otherwise occluded from an external view are sensed with onboard sensors to construct a more comprehensive map of the robot's nearby space. Our simulated results show that a more accurate map with fewer occlusions can be generated by fusing external depth and onboard proximity data.
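The range-dependent update described in the abstract can be illustrated with a minimal sketch. It is not the authors' implementation and does not use the Octomap API: it replaces the octree with a plain dictionary of voxels, and the names `SensorModel` and `VoxelMap`, the sensor ranges, and the hit/miss probabilities are all hypothetical values chosen for illustration. It shows only the general idea of a log-odds occupancy update that accepts a measurement when it falls inside the reporting sensor's assumed range of operation.

```python
import math
from dataclasses import dataclass


def logit(p):
    """Convert a probability to log-odds."""
    return math.log(p / (1.0 - p))


@dataclass(frozen=True)
class SensorModel:
    """Hypothetical sensor description; all values are illustrative assumptions."""
    min_range: float  # metres, lower bound of the assumed reliable range
    max_range: float  # metres, upper bound of the assumed reliable range
    p_hit: float      # occupancy probability assigned to a voxel on a hit
    p_miss: float     # occupancy probability assigned to a voxel on a miss


class VoxelMap:
    """Sparse voxel grid storing clamped log-odds (a stand-in for an octree)."""

    def __init__(self, resolution=0.05, p_min=0.12, p_max=0.97):
        self.resolution = resolution
        self.l_min = logit(p_min)  # clamping bounds keep the map updatable
        self.l_max = logit(p_max)
        self.log_odds = {}         # voxel index -> log-odds of occupancy

    def key(self, point):
        """Discretise a 3-D point (metres) into an integer voxel index."""
        return tuple(math.floor(c / self.resolution) for c in point)

    def update(self, point, hit, sensor, distance):
        """Fuse one measurement; return False if it is outside the sensor's range."""
        if not (sensor.min_range <= distance <= sensor.max_range):
            return False
        delta = logit(sensor.p_hit if hit else sensor.p_miss)
        k = self.key(point)
        value = self.log_odds.get(k, 0.0) + delta
        self.log_odds[k] = max(self.l_min, min(self.l_max, value))
        return True

    def probability(self, point):
        """Occupancy probability of the voxel containing point (0.5 if unknown)."""
        l = self.log_odds.get(self.key(point), 0.0)
        return 1.0 - 1.0 / (1.0 + math.exp(l))


# Usage: a voxel close to the robot, assumed too near for the external camera.
depth_camera = SensorModel(min_range=0.5, max_range=4.0, p_hit=0.7, p_miss=0.4)
proximity = SensorModel(min_range=0.02, max_range=0.5, p_hit=0.7, p_miss=0.4)

grid = VoxelMap()
near_point = (0.10, 0.00, 0.30)
used_camera = grid.update(near_point, hit=True, sensor=depth_camera, distance=0.2)
used_proximity = grid.update(near_point, hit=True, sensor=proximity, distance=0.2)
```

In this sketch the external camera's reading is rejected because 0.2 m lies below its assumed minimum range, while the onboard proximity reading is fused, so the voxel moves from unknown toward occupied. Working in log-odds makes each update a single addition, and clamping prevents a voxel from becoming so certain that later contradicting measurements cannot change it.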

* 3 pages, 2021 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS 2021) 4th Workshop on Proximity Perception
