Visualizing Deep Neural Networks for Speech Recognition with Learned Topographic Filter Maps

Paper and Code

Dec 06, 2019

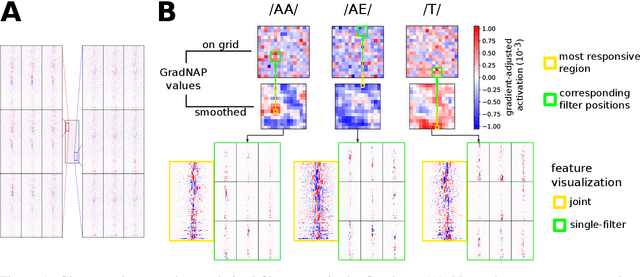

The uninformative ordering of artificial neurons in Deep Neural Networks complicates visualizing activations in deeper layers. This is one reason why the internal structure of such models is very unintuitive. In neuroscience, activity of real brains can be visualized by highlighting active regions. Inspired by those techniques, we train a convolutional speech recognition model, where filters are arranged in a 2D grid and neighboring filters are similar to each other. We show, how those topographic filter maps visualize artificial neuron activations more intuitively. Moreover, we investigate, whether this causes phoneme-responsive neurons to be grouped in certain regions of the topographic map.

* Accepted for 2019 ACL Workshop BlackboxNLP: Analyzing and

Interpreting Neural Networks for NLP

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge