Visual Representations of Physiological Signals for Fake Video Detection


Jul 18, 2022

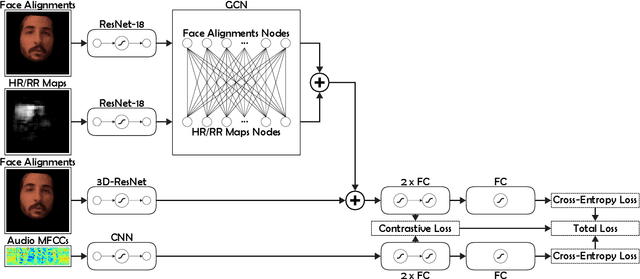

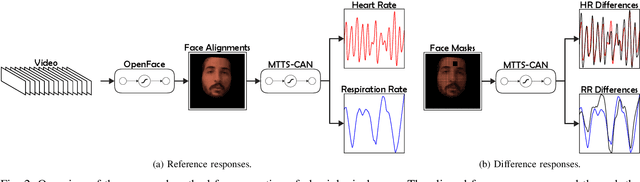

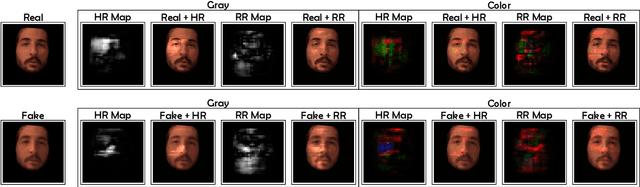

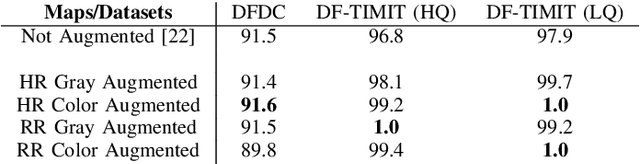

Realistic fake videos are a potential tool for spreading harmful misinformation, given our increasing online presence and information intake. This paper presents a multimodal, learning-based method for detecting real and fake videos. The method combines information from three modalities: audio, video, and physiology. We investigate two strategies for combining the video and physiology modalities: either augmenting the video with information from the physiology, or learning the fusion of the two modalities with a novel Graph Convolutional Network architecture. Both strategies rely on a novel method for generating visual representations of physiological signals. The detection of real and fake videos is then based on the dissimilarity between the audio modality and the modified video modality. The proposed method is evaluated on two benchmark datasets, and the results show a significant increase in detection performance compared to previous methods.
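The abstract states only that the final decision rests on the dissimilarity between the audio and the physiology-modified video modalities. The sketch below illustrates that idea under explicit assumptions: each modality has already been mapped to an embedding vector by some encoder (not shown), dissimilarity is measured as cosine distance, and a fixed threshold separates real from fake. The function names `cosine_dissimilarity` and `classify_video` and the default `threshold=0.5` are hypothetical; the paper's actual distance measure, encoders, and decision rule may differ.

```python
import numpy as np


def cosine_dissimilarity(a, b) -> float:
    """Return 1 - cosine similarity between two embedding vectors (assumed metric)."""
    a = np.asarray(a, dtype=float)
    b = np.asarray(b, dtype=float)
    denom = np.linalg.norm(a) * np.linalg.norm(b)
    if denom == 0.0:
        raise ValueError("embeddings must be non-zero vectors")
    return 1.0 - float(np.dot(a, b) / denom)


def classify_video(audio_emb, video_emb, threshold: float = 0.5):
    """Hypothetical decision rule: label a clip 'fake' when the audio embedding
    and the (physiology-augmented) video embedding disagree too strongly.

    Returns a (label, score) tuple, where score is the cosine dissimilarity.
    """
    score = cosine_dissimilarity(audio_emb, video_emb)
    label = "fake" if score > threshold else "real"
    return label, score


if __name__ == "__main__":
    # Toy embeddings standing in for encoder outputs (illustrative only).
    print(classify_video([1.0, 0.0], [1.0, 0.0]))  # aligned modalities
    print(classify_video([1.0, 0.0], [0.0, 1.0]))  # mismatched modalities
```

With aligned toy embeddings the score is 0.0 and the clip is labeled real; with orthogonal embeddings the score is 1.0 and it is labeled fake. In a learned system the threshold would typically be tuned on validation data rather than fixed in advance.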
