Variance Reduced Stochastic Proximal Algorithm for AUC Maximization


Nov 08, 2019

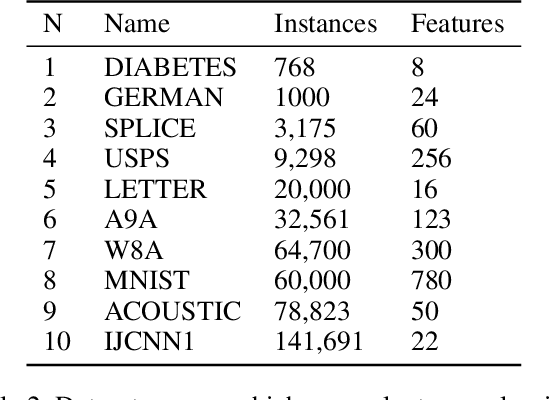

Stochastic gradient descent has been widely studied with classification accuracy as the performance measure. However, such stochastic algorithms cannot be applied directly to non-decomposable pairwise performance measures such as the area under the ROC curve (AUC), a common metric when the classes are imbalanced. Several algorithms have been proposed for optimizing AUC, one of the most recent being a stochastic proximal gradient algorithm (SPAM). A drawback of stochastic methods is that they suffer from high variance, which slows convergence. To combat this issue, several variance-reduced methods have been proposed with faster convergence guarantees than vanilla stochastic gradient descent, but these too are not directly applicable to non-decomposable performance measures. In this paper, we develop a Variance Reduced Stochastic Proximal algorithm for AUC Maximization (VRSPAM) and provide both theoretical and empirical analysis showing that it converges faster than SPAM, the previous state of the art for the AUC maximization problem.
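To illustrate why AUC is a pairwise, non-decomposable measure, the following minimal sketch computes the empirical AUC as the fraction of (positive, negative) pairs that a scorer ranks correctly. It is not taken from the paper: the function name `pairwise_auc`, the convention of counting ties as half, and the toy scores and labels are all assumptions made for illustration.

```python
def pairwise_auc(scores, labels):
    """Empirical AUC: fraction of (positive, negative) pairs ranked correctly.

    Illustrative helper (not from the paper). Ties count as half a correct pair.
    """
    pos = [s for s, y in zip(scores, labels) if y == 1]
    neg = [s for s, y in zip(scores, labels) if y != 1]
    if not pos or not neg:
        raise ValueError("AUC needs at least one positive and one negative example")

    correct = 0.0
    # Each term depends on a PAIR of examples, so the measure cannot be
    # written as a sum of independent per-example losses.
    for p in pos:
        for n in neg:
            if p > n:
                correct += 1.0
            elif p == n:
                correct += 0.5
    return correct / (len(pos) * len(neg))


# Toy, made-up data: two positives and three negatives.
scores = [0.9, 0.8, 0.3, 0.2, 0.1]
labels = [1, 0, 1, 0, 0]
auc = pairwise_auc(scores, labels)
print(auc)
```

Because every term couples one positive with one negative example, a single sampled example does not yield an unbiased gradient of this objective, which is the obstacle to using standard stochastic gradient descent that the abstract describes.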
