Using Deep Image Priors to Generate Counterfactual Explanations


Oct 22, 2020

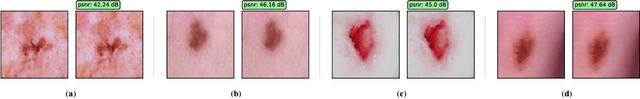

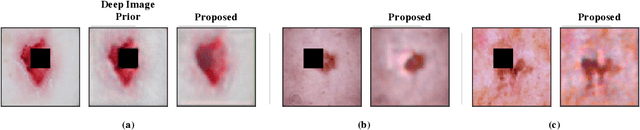

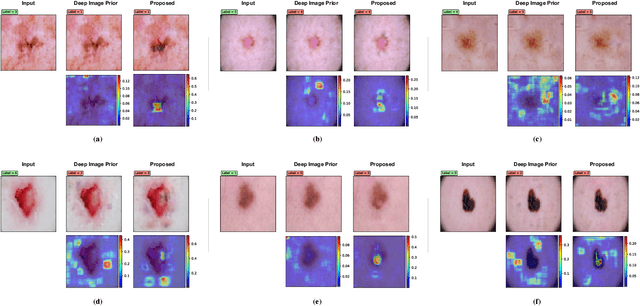

With carefully tailored convolutional neural network architectures, a deep image prior (DIP) can be used to obtain pre-images from latent representation encodings. Although DIP inversion is known to outperform conventional regularized inversion strategies such as total variation, its over-parameterized generator can effectively reconstruct even images that lie outside the original data distribution. This limitation makes it challenging to use such priors for tasks such as counterfactual reasoning, where the goal is to generate small, interpretable changes to an image that systematically lead to changes in the model prediction. To this end, we propose a novel regularization strategy based on an auxiliary loss estimator, jointly trained with the predictor, which efficiently guides the prior to recover natural pre-images. Our empirical studies on a real-world ISIC skin lesion detection problem demonstrate the effectiveness of the proposed approach in synthesizing meaningful counterfactuals. In comparison, we find that standard DIP inversion often proposes visually imperceptible perturbations to irrelevant parts of the image, and thus provides no additional insight into the model's behavior.
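
The abstract does not state the optimization objective, so the following is only a rough sketch of how a DIP-based counterfactual search with an auxiliary loss regularizer could be written. All symbols are assumptions introduced here for illustration: $x$ is the query image, $y'$ the desired target label, $F$ the predictor with latent encoder $h$, $g_\theta$ the DIP generator applied to a fixed noise input $z$, $G$ the auxiliary loss estimator, and $\lambda_1, \lambda_2 \ge 0$ hypothetical trade-off weights.

$$
\theta^{*} = \arg\min_{\theta} \; \big\| h\big(g_\theta(z)\big) - h(x) \big\|_2^2 \;+\; \lambda_1 \, \mathcal{L}_{\mathrm{CE}}\big(F(g_\theta(z)),\, y'\big) \;+\; \lambda_2 \, G\big(g_\theta(z)\big), \qquad \hat{x} = g_{\theta^{*}}(z)
$$

Under these assumptions, the first term keeps the counterfactual $\hat{x}$ close to the query in latent space, the second pushes the prediction toward $y'$, and the third penalizes candidates for which the estimator predicts a high loss, which is one way the prior could be steered toward natural pre-images. Setting $\lambda_2 = 0$ would correspond to unregularized DIP inversion in this sketch.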
