Unsupervised Solution Operator Learning for Mean-Field Games via Sampling-Invariant Parametrizations

Paper and Code

Jan 27, 2024

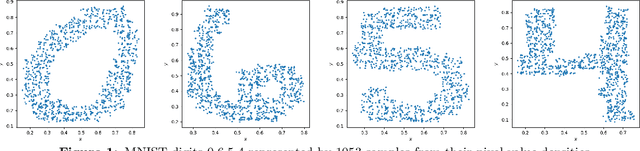

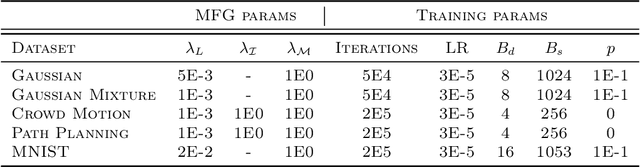

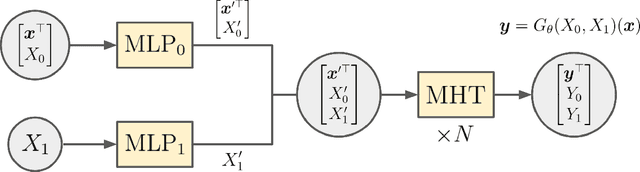

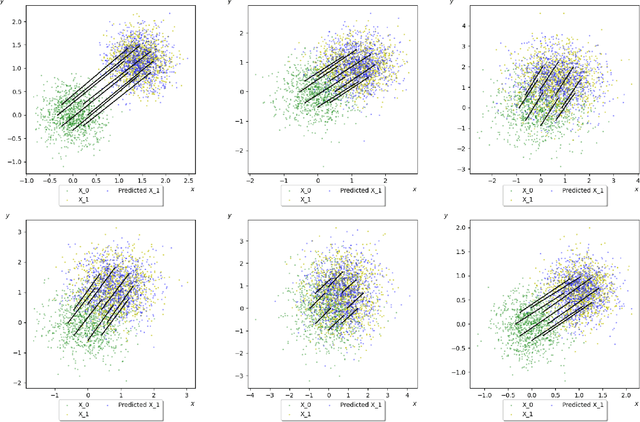

Recent advances in deep learning has witnessed many innovative frameworks that solve high dimensional mean-field games (MFG) accurately and efficiently. These methods, however, are restricted to solving single-instance MFG and demands extensive computational time per instance, limiting practicality. To overcome this, we develop a novel framework to learn the MFG solution operator. Our model takes a MFG instances as input and output their solutions with one forward pass. To ensure the proposed parametrization is well-suited for operator learning, we introduce and prove the notion of sampling invariance for our model, establishing its convergence to a continuous operator in the sampling limit. Our method features two key advantages. First, it is discretization-free, making it particularly suitable for learning operators of high-dimensional MFGs. Secondly, it can be trained without the need for access to supervised labels, significantly reducing the computational overhead associated with creating training datasets in existing operator learning methods. We test our framework on synthetic and realistic datasets with varying complexity and dimensionality to substantiate its robustness.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge