Unsupervised Reinforcement Learning for Transferable Manipulation Skill Discovery

Paper and Code

Apr 29, 2022

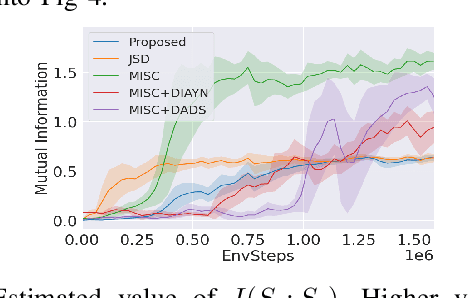

Current reinforcement learning (RL) in robotics often has difficulty generalizing to new downstream tasks because of its inherently task-specific training paradigm. To alleviate this, unsupervised RL, a framework that pre-trains the agent in a task-agnostic manner without access to the task-specific reward, leverages active exploration to distill diverse experience into essential skills or reusable knowledge. To bring these benefits to robotic manipulation, we propose an unsupervised method for transferable manipulation skill discovery that ties together structured exploration toward interacting behavior and transferable skill learning. The method enables the agent not only to learn interaction behavior, the key aspect of robotic manipulation learning, without access to the environment reward, but also to generalize to arbitrary downstream manipulation tasks with the learned task-agnostic skills. Through comparative experiments, we show that our approach achieves the most diverse interacting behavior and significantly improves sample efficiency in downstream tasks, including the extension to multi-object, multitask problems.
