Unreliable Multi-Armed Bandits: A Novel Approach to Recommendation Systems

Paper and Code

Nov 14, 2019

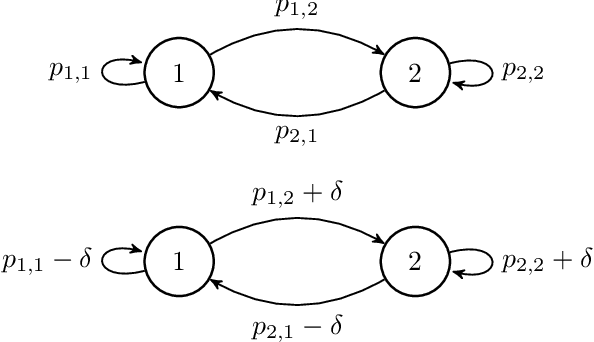

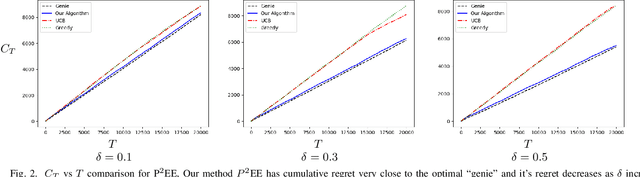

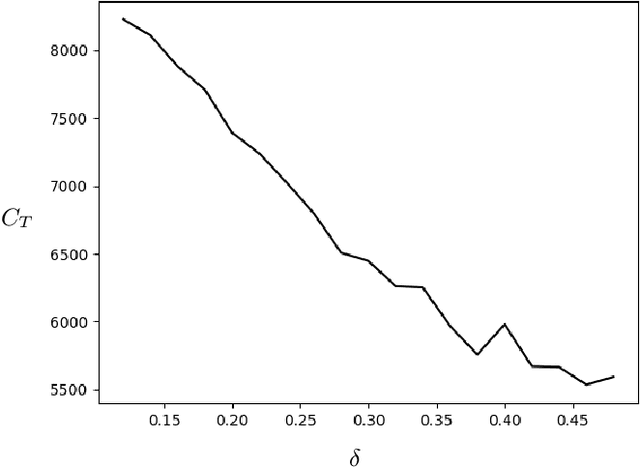

We use a novel modification of Multi-Armed Bandits to create a new model for recommendation systems. We model the recommendation system as a bandit seeking to maximize reward by pulling on arms with unknown rewards. The catch however is that this bandit can only access these arms through an unreliable intermediate that has some level of autonomy while choosing its arms. For example, in a streaming website the user has a lot of autonomy while choosing content they want to watch. The streaming sites can use targeted advertising as a means to bias opinions of these users. Here the streaming site is the bandit aiming to maximize reward and the user is the unreliable intermediate. We model the intermediate as accessing states via a Markov chain. The bandit is allowed to perturb this Markov chain. We prove fundamental theorems for this setting after which we show a close-to-optimal Explore-Commit algorithm.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge