Understanding the Interplay between Privacy and Robustness in Federated Learning


Jun 13, 2021

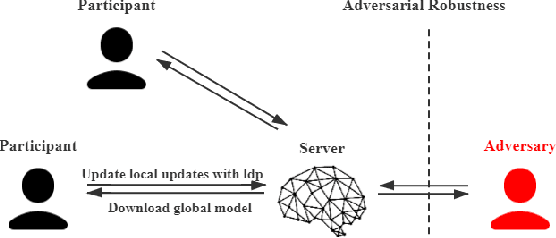

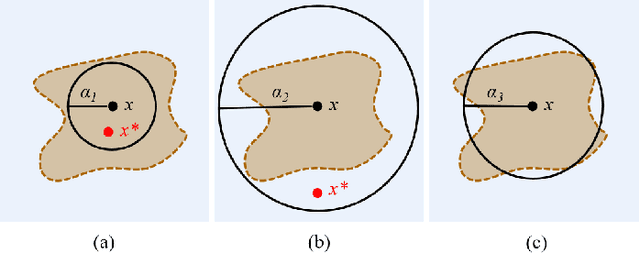

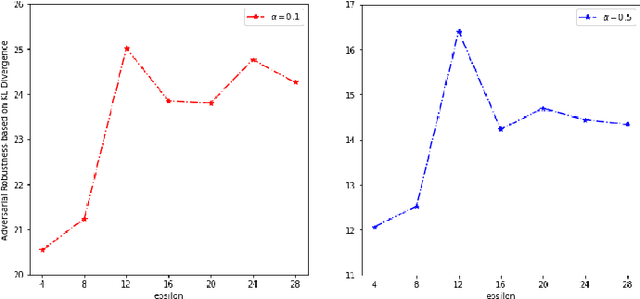

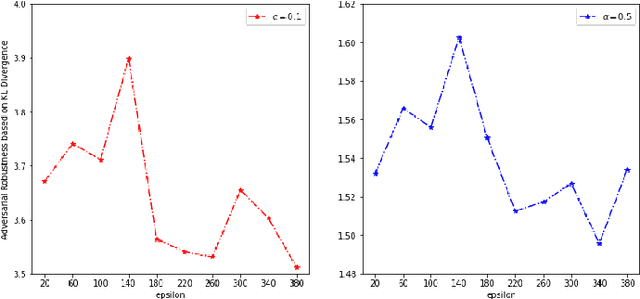

Federated Learning (FL) is emerging as a promising paradigm for privacy-preserving machine learning, in which a model is trained across multiple clients without exchanging their data samples. Recent works have highlighted several privacy and robustness weaknesses in FL and addressed these concerns separately, using local differential privacy (LDP) and well-studied methods from conventional ML. However, it is still unclear how LDP affects adversarial robustness in FL. To fill this gap, this work aims to develop a comprehensive understanding of the effects of LDP on adversarial robustness in FL. Clarifying this interplay is significant because it is the first step towards a principled design of private and robust FL systems. Using theoretical analysis and empirical verification, we certify that local differential privacy has both positive and negative effects on adversarial robustness.
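To make the setting concrete, here is a minimal sketch of how a client might perturb its model update locally before sending it to the server. It is an illustration only and not the mechanism analyzed in the paper: it assumes a clip-then-add-Gaussian-noise scheme, and the names `clip_update`, `privatize_update`, `federated_average`, `clip_norm`, and `noise_std` are hypothetical. Whether such a scheme meets a formal LDP guarantee depends on how `noise_std` is calibrated to the privacy budget, which is not done here.

```python
import math
import random


def clip_update(update, clip_norm):
    """Scale the update so that its L2 norm is at most clip_norm."""
    norm = math.sqrt(sum(x * x for x in update))
    scale = min(1.0, clip_norm / norm) if norm > 0 else 1.0
    return [x * scale for x in update]


def privatize_update(update, clip_norm, noise_std, rng):
    """Clip the update, then add independent Gaussian noise per coordinate.

    Assumption: noise_std is chosen by the caller; this sketch does not
    derive it from a privacy budget (epsilon, delta).
    """
    clipped = clip_update(update, clip_norm)
    return [x + rng.gauss(0.0, noise_std) for x in clipped]


def federated_average(updates):
    """Server side: coordinate-wise mean of the received client updates."""
    n = len(updates)
    return [sum(column) / n for column in zip(*updates)]


if __name__ == "__main__":
    rng = random.Random(0)  # fixed seed so the run is repeatable
    # Toy client updates (hypothetical values for illustration).
    client_updates = [[0.5, -1.0, 2.0], [3.0, 4.0, 0.0], [-0.1, 0.2, 0.1]]
    noisy = [privatize_update(u, 1.0, 0.1, rng) for u in client_updates]
    print(federated_average(noisy))
```

The server only ever sees the clipped, noised updates. Intuitively, clipping bounds how much any single client can shift the aggregate, while the added noise perturbs every update, which is one way to picture how a local privacy mechanism could both help and hurt robustness.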
