Uncertainty Estimation for Heatmap-based Landmark Localization

Paper and Code

Mar 04, 2022

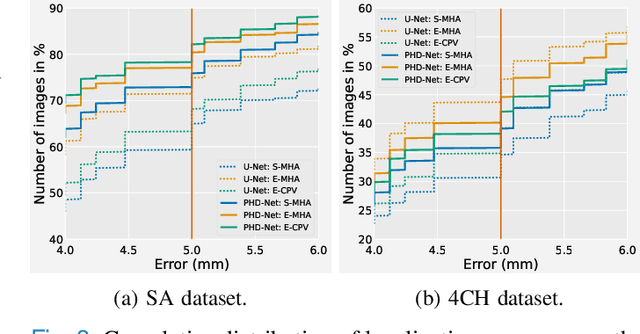

Automatic anatomical landmark localization has made great strides by leveraging deep learning methods in recent years. The ability to quantify the uncertainty of these predictions is a vital ingredient needed to see these methods adopted in clinical use, where it is imperative that erroneous predictions are caught and corrected. We propose Quantile Binning, a data-driven method to categorise predictions by uncertainty with estimated error bounds. This framework can be applied to any continuous uncertainty measure, allowing straightforward identification of the best subset of predictions with accompanying estimated error bounds. We facilitate easy comparison between uncertainty measures by constructing two evaluation metrics derived from Quantile Binning. We demonstrate this framework by comparing and contrasting three uncertainty measures (a baseline, the current gold standard, and a proposed method combining aspects of the two), across two datasets (one easy, one hard) and two heatmap-based landmark localization model paradigms (U-Net and patch-based). We conclude by illustrating how filtering out gross mispredictions caught in our Quantile Bins significantly improves the proportion of predictions under an acceptable error threshold, and offer recommendations on which uncertainty measure to use and how to use it.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge