Two approaches to inpainting microstructure with deep convolutional generative adversarial networks

Paper and Code

Oct 13, 2022

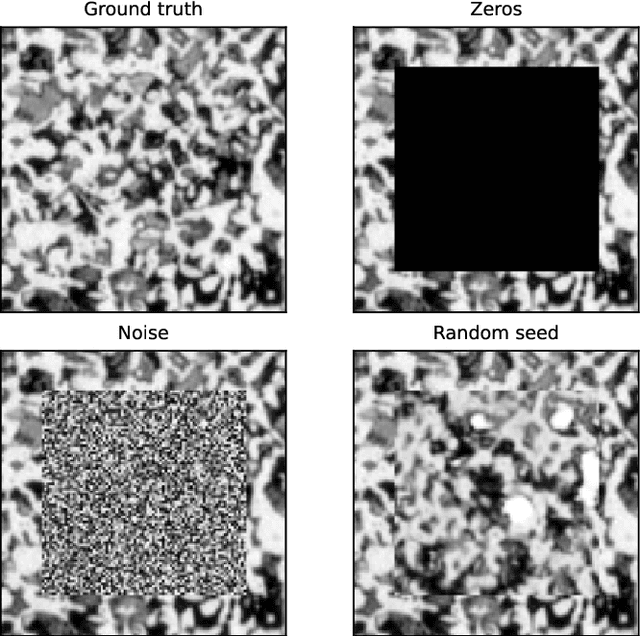

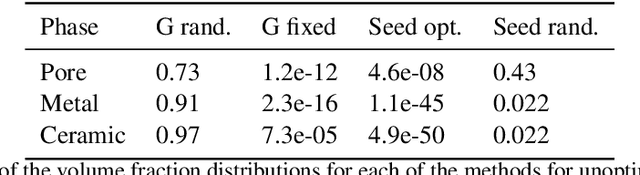

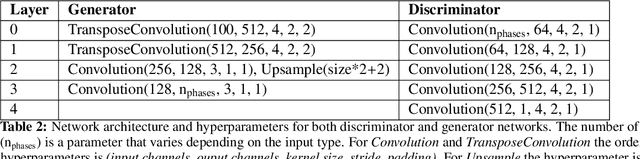

Imaging is critical to the characterisation of materials. However, even with careful sample preparation and microscope calibration, micrographs often contain defects and unwanted artefacts. This is particularly problematic when the micrograph is to be used for simulation or feature analysis, as defects are likely to lead to inaccurate results. Microstructural inpainting alleviates this problem by replacing occluded regions with synthetic microstructure whose boundaries match the surrounding material. In this paper we introduce two methods that use generative adversarial networks to generate contiguous inpainted regions of arbitrary shape and size by learning the microstructural distribution from the unoccluded data. We find that one method benefits from high speed and simplicity, whilst the other gives smoother boundaries at the inpainting border. We also outline the development of a graphical user interface that allows users to apply these machine learning methods in a 'no-code' environment.
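To make the idea of replacing occluded regions concrete, the following is a minimal sketch in plain Python and not the authors' implementation. It assumes a greyscale micrograph stored as a nested list, a boolean mask marking the occluded pixels, and a `generated` image standing in for the output of a trained generator, which the abstract does not specify. The function names `composite_inpaint`, `boundary_ring` and `boundary_mismatch` are hypothetical, and the mismatch score is an illustrative way to quantify how well the synthetic region agrees with real data at the inpainting border, not a metric taken from the paper.

```python
def composite_inpaint(image, mask, generated):
    """Keep unoccluded pixels from `image`; take occluded ones from `generated`."""
    rows, cols = len(image), len(image[0])
    for grid in (mask, generated):
        if len(grid) != rows or any(len(row) != cols for row in grid):
            raise ValueError("image, mask and generated must share a shape")
    return [
        [generated[r][c] if mask[r][c] else image[r][c] for c in range(cols)]
        for r in range(rows)
    ]


def boundary_ring(mask):
    """Return unoccluded pixels that touch the mask (4-neighbourhood)."""
    rows, cols = len(mask), len(mask[0])
    ring = []
    for r in range(rows):
        for c in range(cols):
            if mask[r][c]:
                continue  # occluded pixels are not part of the ring
            neighbours = ((r - 1, c), (r + 1, c), (r, c - 1), (r, c + 1))
            if any(
                0 <= nr < rows and 0 <= nc < cols and mask[nr][nc]
                for nr, nc in neighbours
            ):
                ring.append((r, c))
    return ring


def boundary_mismatch(image, mask, generated):
    """Mean absolute difference between generated and real pixels on the ring.

    Hypothetical score: lower values mean the generated image agrees more
    closely with the real data just outside the occluded region.
    """
    ring = boundary_ring(mask)
    if not ring:
        return 0.0
    return sum(abs(generated[r][c] - image[r][c]) for r, c in ring) / len(ring)


# Toy data: a 6x6 "micrograph" of zeros with a 2x2 occluded square in the
# centre, and a stand-in "generated" image of ones (assumed, not a real GAN).
image = [[0.0] * 6 for _ in range(6)]
mask = [[2 <= r <= 3 and 2 <= c <= 3 for c in range(6)] for r in range(6)]
generated = [[1.0] * 6 for _ in range(6)]

inpainted = composite_inpaint(image, mask, generated)
score = boundary_mismatch(image, mask, generated)
```

In this toy case only the four masked pixels change, and the score is at its worst because the stand-in output ignores the surrounding data entirely. A generator that had learned the microstructural distribution would be expected to drive such a score towards zero.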
