Trust as Extended Control: Active Inference and User Feedback During Human-Robot Collaboration

Paper and Code

Apr 22, 2021

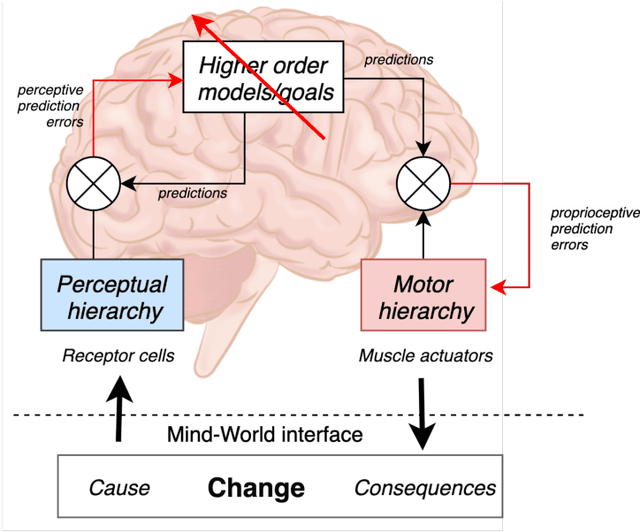

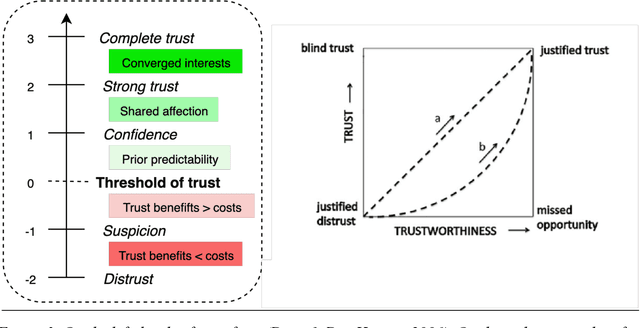

To interact seamlessly with robots, users must infer the causes of a robot's behavior and be confident about that inference. Hence, trust is a necessary condition for human-robot collaboration (HRC). Despite its crucial role, it is largely unknown how trust emerges, develops, and supports human interactions with nonhuman artefacts. Here, we review the literature on trust, human-robot interaction, human-robot collaboration, and human interaction at large. Early models of trust suggest that trust entails a trade-off between benevolence and competence, while studies of human-to-human interaction emphasize the role of shared behavior and mutual knowledge in the gradual building of trust. We then introduce a model of trust as an agent's best explanation for reliable sensory exchange with an extended motor plant or partner. This model is based on the cognitive neuroscience of active inference and suggests that, in the context of HRC, trust can be cast in terms of virtual control over an artificial agent. In this setting, interactive feedback becomes a necessary component of the trustor's perception-action cycle. The resulting model has important implications for understanding human-robot interaction and collaboration, as it allows the traditional determinants of human trust to be defined in terms of active inference, information exchange and empowerment. Furthermore, this model suggests that boredom and surprise may be used as markers for under and over-reliance on the system. Finally, we examine the role of shared behavior in the genesis of trust, especially in the context of dyadic collaboration, suggesting important consequences for the acceptability and design of human-robot collaborative systems.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge