Trident: Efficient 4PC Framework for Privacy Preserving Machine Learning

Paper and Code

Dec 05, 2019

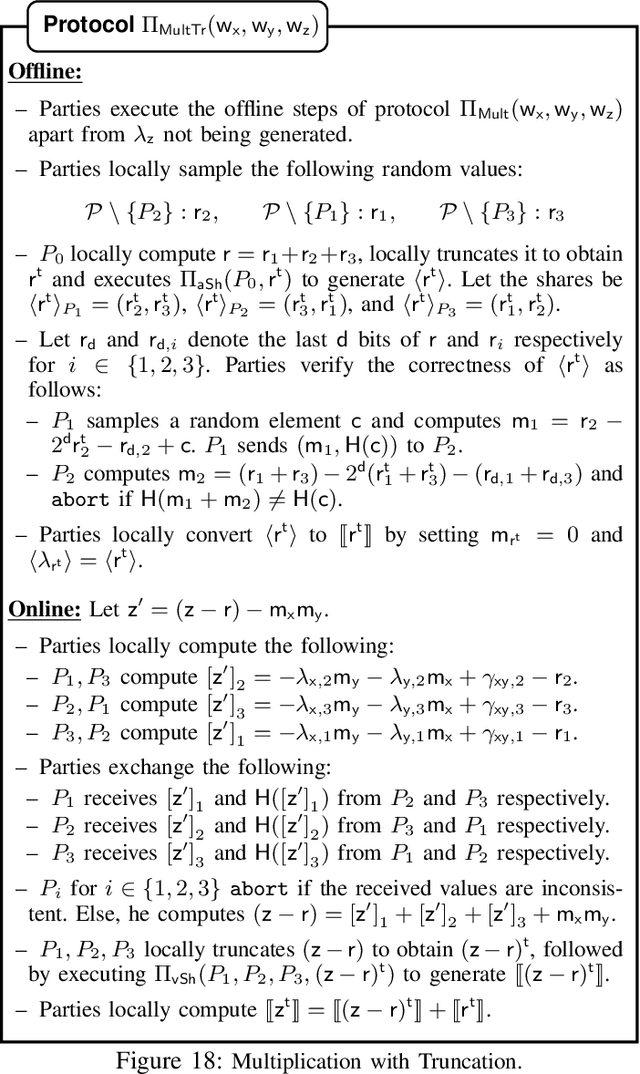

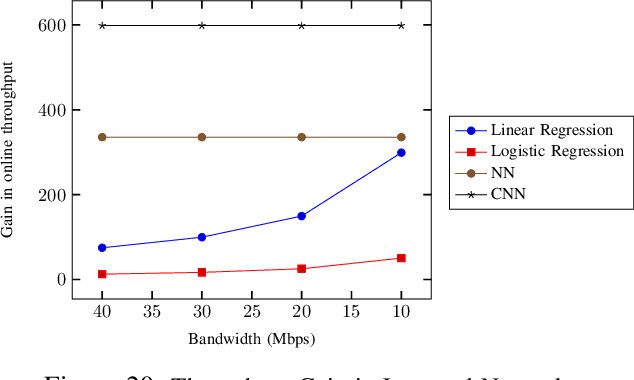

Machine learning has started to be deployed in fields such as healthcare and finance, which propelled the need for and growth of privacy-preserving machine learning (PPML). We propose an actively secure four-party protocol (4PC), and a framework for PPML, showcasing its applications on four of the most widely-known machine learning algorithms -- Linear Regression, Logistic Regression, Neural Networks, and Convolutional Neural Networks. Our 4PC protocol tolerating at most one malicious corruption is practically efficient as compared to the existing works. We use the protocol to build an efficient mixed-world framework (Trident) to switch between the Arithmetic, Boolean, and Garbled worlds. Our framework operates in the offline-online paradigm over rings and is instantiated in an outsourced setting for machine learning. Also, we propose conversions especially relevant to privacy-preserving machine learning. The highlights of our framework include using a minimal number of expensive circuits overall as compared to ABY3. This can be seen in our technique for truncation, which does not affect the online cost of multiplication and removes the need for any circuits in the offline phase. Our B2A conversion has an improvement of $\mathbf{7} \times$ in rounds and $\mathbf{18} \times$ in the communication complexity. In addition to these, all of the special conversions for machine learning, e.g. Secure Comparison, achieve constant round complexity. The practicality of our framework is argued through improvements in the benchmarking of the aforementioned algorithms when compared with ABY3. All the protocols are implemented over a 64-bit ring in both LAN and WAN settings. Our improvements go up to $\mathbf{187} \times$ for the training phase and $\mathbf{158} \times$ for the prediction phase when observed over LAN and WAN.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge