Triangular Character Animation Sampling with Motion, Emotion, and Relation

Paper and Code

Mar 09, 2022

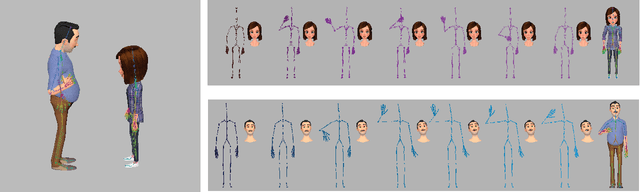

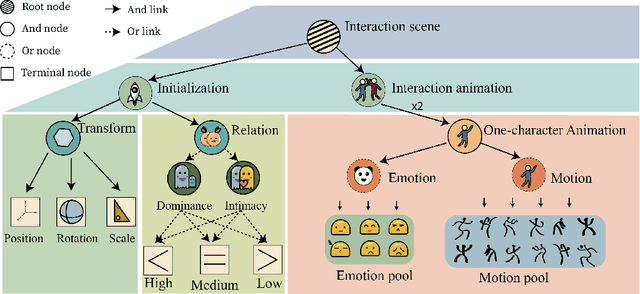

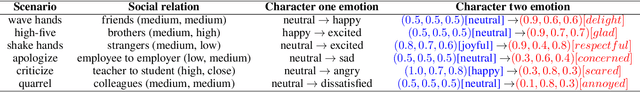

Dramatic progress has been made in animating individual characters. However, we still lack automatic control over activities between characters, especially those involving interactions. In this paper, we present a novel energy-based framework to sample and synthesize animations by associating the characters' body motions, facial expressions, and social relations. We propose a Spatial-Temporal And-Or graph (ST-AOG), a stochastic grammar model, to encode the contextual relationship between motion, emotion, and relation, forming a triangle in a conditional random field. We train our model from a labeled dataset of two-character interactions. Experiments demonstrate that our method can recognize the social relation between two characters and sample new scenes of vivid motion and emotion using Markov Chain Monte Carlo (MCMC) given the social relation. Thus, our method can provide animators with an automatic way to generate 3D character animations, help synthesize interactions between Non-Player Characters (NPCs), and enhance machine emotion intelligence (EQ) in virtual reality (VR).

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge