Transforming Graphs for Enhanced Attribute-Based Clustering: An Innovative Graph Transformer Method

Paper and Code

Jun 21, 2023

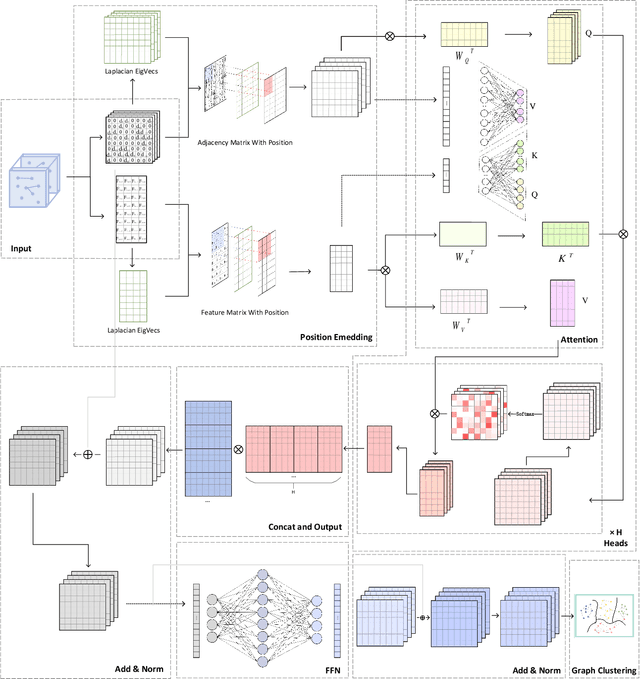

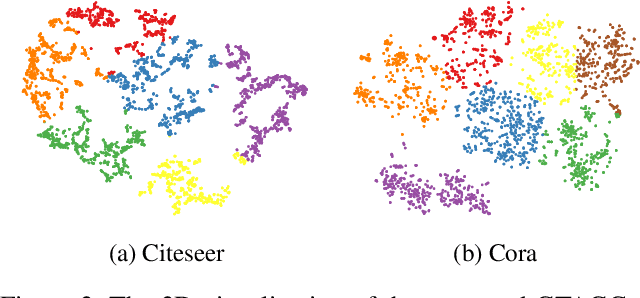

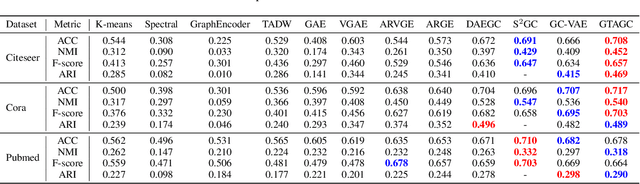

Graph Representation Learning (GRL) is an influential methodology, enabling a more profound understanding of graph-structured data and aiding graph clustering, a critical task across various domains. The recent incursion of attention mechanisms, originally an artifact of Natural Language Processing (NLP), into the realm of graph learning has spearheaded a notable shift in research trends. Consequently, Graph Attention Networks (GATs) and Graph Attention Auto-Encoders have emerged as preferred tools for graph clustering tasks. Yet, these methods primarily employ a local attention mechanism, thereby curbing their capacity to apprehend the intricate global dependencies between nodes within graphs. Addressing these impediments, this study introduces an innovative method known as the Graph Transformer Auto-Encoder for Graph Clustering (GTAGC). By melding the Graph Auto-Encoder with the Graph Transformer, GTAGC is adept at capturing global dependencies between nodes. This integration amplifies the graph representation and surmounts the constraints posed by the local attention mechanism. The architecture of GTAGC encompasses graph embedding, integration of the Graph Transformer within the autoencoder structure, and a clustering component. It strategically alternates between graph embedding and clustering, thereby tailoring the Graph Transformer for clustering tasks, whilst preserving the graph's global structural information. Through extensive experimentation on diverse benchmark datasets, GTAGC has exhibited superior performance against existing state-of-the-art graph clustering methodologies. This pioneering approach represents a novel contribution to the field of graph clustering, paving the way for promising avenues in future research.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge