Transfer Learning of Lexical Semantic Families for Argumentative Discourse Units Identification

Paper and Code

Sep 06, 2022

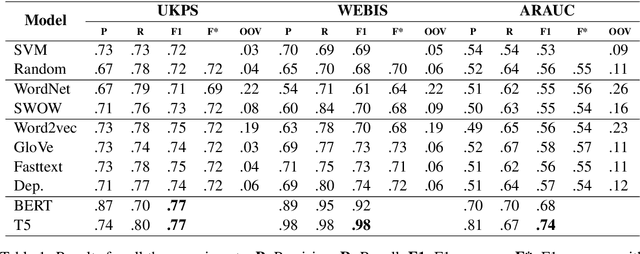

Argument mining tasks require an informed range of low to high complexity linguistic phenomena and commonsense knowledge. Previous work has shown that pre-trained language models are highly effective at encoding syntactic and semantic linguistic phenomena when applied with transfer learning techniques and built on different pre-training objectives. It remains an issue of how much the existing pre-trained language models encompass the complexity of argument mining tasks. We rely on experimentation to shed light on how language models obtained from different lexical semantic families leverage the performance of the identification of argumentative discourse units task. Experimental results show that transfer learning techniques are beneficial to the task and that current methods may be insufficient to leverage commonsense knowledge from different lexical semantic families.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge