Transfer Learning Gaussian Anomaly Detection by Fine-Tuning Representations

Aug 09, 2021

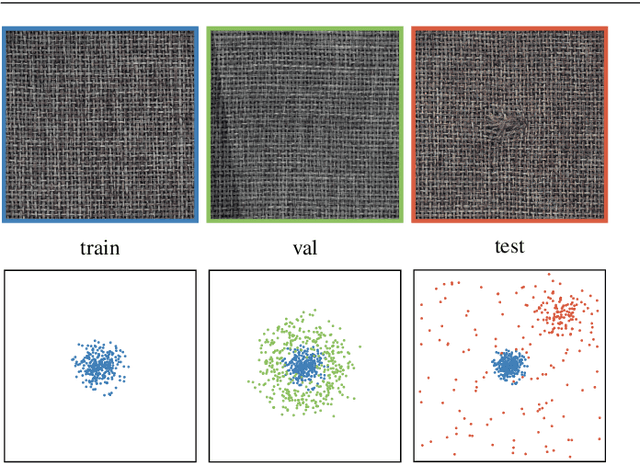

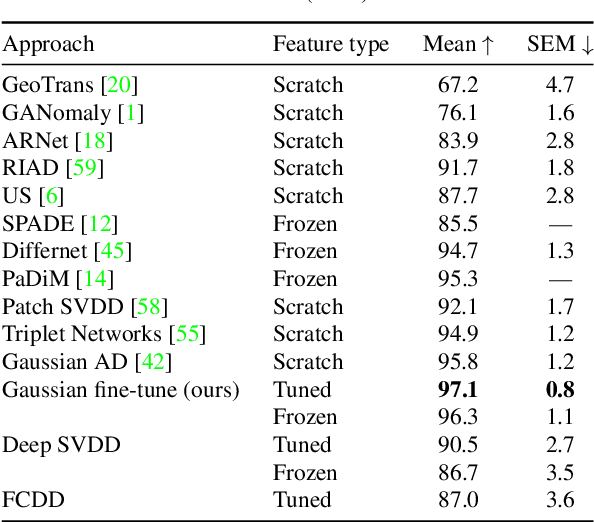

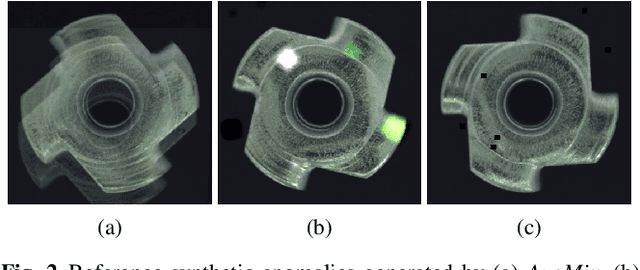

Current state-of-the-art Anomaly Detection (AD) methods exploit the powerful representations yielded by large-scale ImageNet training. However, catastrophic forgetting prevents successful fine-tuning of pre-trained representations on new datasets in the semi-supervised and unsupervised settings, so representations are commonly kept fixed. In our work, we propose a new method to fine-tune learned representations for AD in a transfer learning setting. Based on the linkage between generative and discriminative modeling, we induce a multivariate Gaussian distribution for the normal class and use the Mahalanobis distance of normal images to that distribution as the training objective. We additionally propose to use augmentations commonly employed for vicinal risk minimization in a validation scheme that detects the onset of catastrophic forgetting. Extensive evaluations on the public MVTec AD dataset show that our method achieves a new state of the art in the AD task while simultaneously achieving anomaly segmentation (AS) performance comparable to the prior state of the art. Further, ablation studies demonstrate the importance of the induced Gaussian distribution as well as the robustness of the proposed fine-tuning scheme with respect to the choice of augmentations.
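To make the Gaussian modeling step concrete, the following is a minimal sketch of fitting a multivariate Gaussian to features of normal samples and scoring inputs by their Mahalanobis distance. It is an illustration under stated assumptions, not the authors' implementation: the features here are synthetic random vectors standing in for the output of a pre-trained network, the helper names `fit_gaussian` and `mahalanobis` are hypothetical, and the shrinkage constant is an arbitrary choice for numerical stability. The fine-tuning loop and the augmentation-based validation scheme described in the abstract are not reproduced.

```python
import numpy as np


def fit_gaussian(features, shrinkage=1e-3):
    """Estimate mean and precision matrix from normal-class features.

    `shrinkage` is an assumed regularizer added to the covariance diagonal
    so that the matrix stays invertible; it is not a value from the paper.
    """
    mean = features.mean(axis=0)
    centered = features - mean
    cov = centered.T @ centered / (len(features) - 1)
    cov += shrinkage * np.eye(cov.shape[0])
    precision = np.linalg.inv(cov)
    return mean, precision


def mahalanobis(features, mean, precision):
    """Mahalanobis distance of each feature row to the fitted Gaussian."""
    diff = features - mean
    # Quadratic form diff_i^T * precision * diff_i, computed per row.
    squared = np.einsum("ij,jk,ik->i", diff, precision, diff)
    return np.sqrt(np.maximum(squared, 0.0))


# Synthetic stand-ins for network features (assumption for illustration).
rng = np.random.default_rng(0)
normal_features = rng.normal(size=(500, 8))
shifted_features = rng.normal(loc=4.0, size=(50, 8))

mean, precision = fit_gaussian(normal_features)
normal_scores = mahalanobis(normal_features, mean, precision)
anomaly_scores = mahalanobis(shifted_features, mean, precision)
```

In this sketch a larger distance indicates a sample that is less likely under the Gaussian fitted to the normal class, so the distance can be read directly as an anomaly score. Using the same quantity as a training loss on normal images, as the abstract describes, would additionally require a differentiable feature extractor.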