Training Dynamics of Deep Network Linear Regions

Paper and Code

Oct 19, 2023

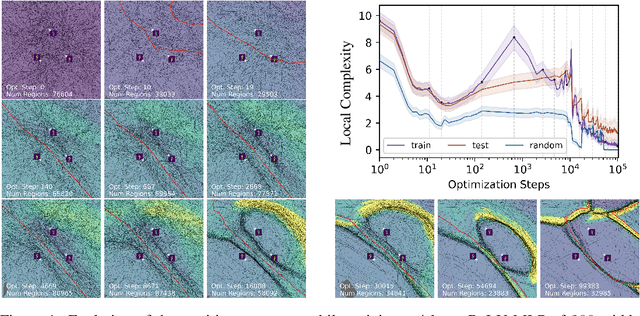

The study of Deep Network (DN) training dynamics has largely focused on the evolution of the loss function, evaluated on or around train and test set data points. In fact, many DN phenomenon were first introduced in literature with that respect, e.g., double descent, grokking. In this study, we look at the training dynamics of the input space partition or linear regions formed by continuous piecewise affine DNs, e.g., networks with (leaky)ReLU nonlinearities. First, we present a novel statistic that encompasses the local complexity (LC) of the DN based on the concentration of linear regions inside arbitrary dimensional neighborhoods around data points. We observe that during training, the LC around data points undergoes a number of phases, starting with a decreasing trend after initialization, followed by an ascent and ending with a final descending trend. Using exact visualization methods, we come across the perplexing observation that during the final LC descent phase of training, linear regions migrate away from training and test samples towards the decision boundary, making the DN input-output nearly linear everywhere else. We also observe that the different LC phases are closely related to the memorization and generalization performance of the DN, especially during grokking.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge