Towards Scheduling Federated Deep Learning using Meta-Gradients for Inter-Hospital Learning


Jul 04, 2021

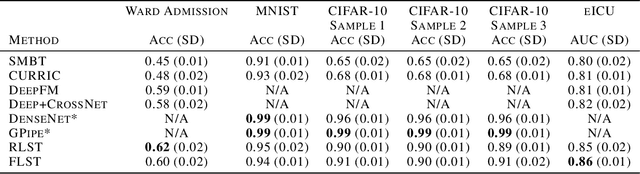

Given the abundance of personal data today and the ease of access to it, individual privacy has become paramount, particularly in the healthcare domain. In this work, we aim to use patient data from multiple hospital data centres to train a machine learning model without sacrificing patient privacy. We develop a scheduling algorithm that works in conjunction with a student-teacher algorithm and is deployed in a federated manner. This allows a central model to learn from batches of data at each federated node. The teacher acts between data centres to update the main-task (student) algorithm using the data stored in the various data centres. We show that the scheduler, trained using meta-gradients, can effectively organise training and, as a result, train a machine learning model on a diverse dataset without needing explicit access to the patient data. We achieve state-of-the-art performance and show how our method overcomes some of the problems faced in federated learning, such as node poisoning. We further show how the scheduler can be used as a mechanism for transfer learning, allowing different teachers to work together in training a student for state-of-the-art performance.
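To make the idea of a meta-gradient-trained scheduler concrete, the following is a minimal toy sketch and not the paper's implementation. It assumes a linear-regression student, three simulated nodes (one with flipped targets to mimic poisoning), a small clean held-out set at the central server, and a scheduler reduced to one softmax logit per node; there is no teacher network. All names (`run_toy_schedule`, `mse_grad`, the learning rates) are hypothetical. The scheduler logits are updated with the gradient of the held-out loss after one student step, which can be written analytically for this simple student.

```python
import numpy as np


def softmax(z):
    """Numerically stable softmax over a 1-D array."""
    e = np.exp(z - z.max())
    return e / e.sum()


def mse_grad(w, X, y):
    """Gradient of 0.5 * mean((X @ w - y) ** 2) with respect to w."""
    return X.T @ (X @ w - y) / len(y)


def run_toy_schedule(num_rounds=300, lr=0.1, meta_lr=1.0, seed=0):
    rng = np.random.default_rng(seed)
    true_w = np.array([2.0, -1.0, 0.5])

    def make_node(n, poisoned=False):
        X = rng.normal(size=(n, 3))
        y = X @ true_w + 0.1 * rng.normal(size=n)
        if poisoned:
            y = -y  # assumption: poisoning modelled as flipped targets
        return X, y

    # Raw node data is only touched inside the loop to compute gradients.
    nodes = [make_node(200), make_node(200), make_node(200, poisoned=True)]
    # Assumption: a small clean held-out set is available for the meta-loss.
    X_val, y_val = make_node(100)

    w = np.zeros(3)                  # student parameters
    logits = np.zeros(len(nodes))    # scheduler: one logit per node

    for _ in range(num_rounds):
        p = softmax(logits)

        # Each node computes a minibatch gradient locally and shares only that.
        grads = []
        for X, y in nodes:
            idx = rng.choice(len(y), size=32, replace=False)
            grads.append(mse_grad(w, X[idx], y[idx]))
        G = np.stack(grads)          # shape: (num_nodes, num_params)

        # Student step: scheduler-weighted combination of node gradients.
        w_new = w - lr * (p @ G)

        # Meta-gradient: differentiate the held-out loss at w_new w.r.t. p,
        # then push it through the softmax Jacobian to reach the logits.
        g_val = mse_grad(w_new, X_val, y_val)
        dL_dp = -lr * (G @ g_val)
        dL_dlogits = p * (dL_dp - p @ dL_dp)

        logits = logits - meta_lr * dL_dlogits
        w = w_new

    return w, softmax(logits)


if __name__ == "__main__":
    w, weights = run_toy_schedule()
    print("student parameters:", w)
    print("scheduler weights per node:", weights)
```

In this toy setting the gradient from the poisoned node points away from the direction that lowers the held-out loss, so its scheduler weight shrinks relative to the clean nodes. A full system would replace the analytic meta-gradient with automatic differentiation through the student update.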
