Towards safe Bayesian optimization with Wiener kernel regression


Nov 04, 2024

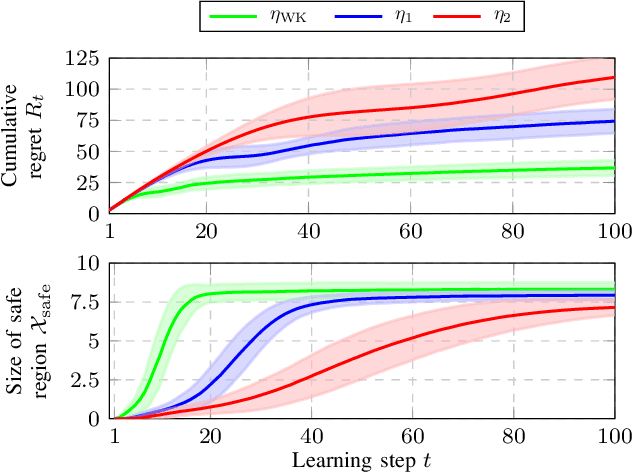

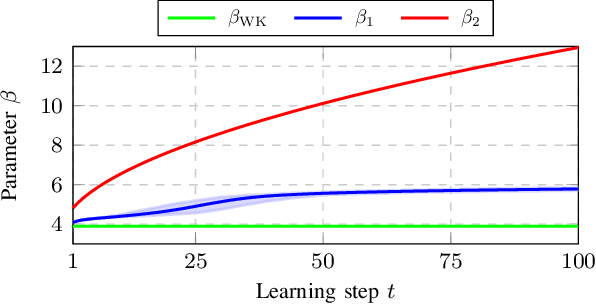

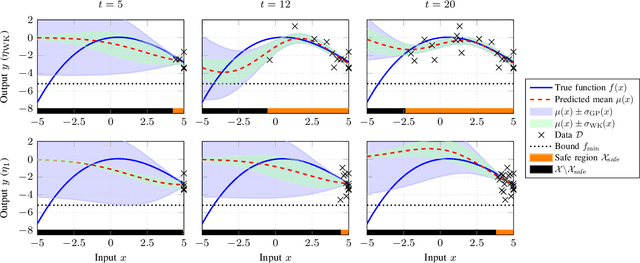

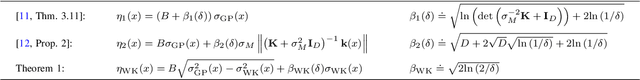

Bayesian Optimization (BO) is a data-driven strategy for minimizing or maximizing black-box functions based on probabilistic surrogate models. In the presence of safety constraints, the performance of BO relies crucially on tight probabilistic error bounds on the uncertainty of the surrogate model. For the case of Gaussian Process surrogates and Gaussian measurement noise, we present a novel error bound based on the recently proposed Wiener kernel regression. We prove that, under rather mild assumptions, the proposed error bound is tighter than bounds previously documented in the literature, which leads to enlarged safety regions. We use a numerical example to demonstrate the efficacy of the proposed error bound in safe BO.
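To illustrate why a tighter error bound enlarges the safety region, the following is a minimal sketch of a generic Gaussian Process safe set, not the Wiener kernel regression bound from the paper. It assumes a one-dimensional constraint function that must stay above a threshold, a squared-exponential kernel, and a hypothetical scaling factor `beta` standing in for whatever error bound is used; all function names, hyperparameters, and data here are illustrative assumptions.

```python
import numpy as np


def rbf_kernel(a, b, lengthscale=0.5, variance=1.0):
    """Squared-exponential kernel between two 1-D input arrays (assumed kernel)."""
    d2 = (a[:, None] - b[None, :]) ** 2
    return variance * np.exp(-0.5 * d2 / lengthscale**2)


def gp_posterior(x_train, y_train, x_test, noise_std=0.1):
    """Standard GP posterior mean and standard deviation under Gaussian noise."""
    K = rbf_kernel(x_train, x_train) + noise_std**2 * np.eye(len(x_train))
    K_s = rbf_kernel(x_train, x_test)
    L = np.linalg.cholesky(K)
    alpha = np.linalg.solve(L.T, np.linalg.solve(L, y_train))
    mean = K_s.T @ alpha
    v = np.linalg.solve(L, K_s)
    var = rbf_kernel(x_test, x_test).diagonal() - np.sum(v**2, axis=0)
    return mean, np.sqrt(np.clip(var, 0.0, None))


def safe_set(mean, std, beta, threshold=0.0):
    """Points whose lower confidence bound mean - beta * std meets the threshold.

    beta is a hypothetical stand-in for the error-bound scaling.
    """
    return mean - beta * std >= threshold


# Illustrative data: noise-free samples of the constraint g(x) = 1 - x^2.
x_train = np.array([-1.0, 0.0, 1.0])
y_train = 1.0 - x_train**2
x_grid = np.linspace(-2.0, 2.0, 81)

mean, std = gp_posterior(x_train, y_train, x_grid)
conservative = safe_set(mean, std, beta=3.0)  # looser bound
tighter = safe_set(mean, std, beta=2.0)       # tighter bound

print("safe points (beta=3.0):", int(conservative.sum()))
print("safe points (beta=2.0):", int(tighter.sum()))
```

Because the posterior standard deviation is non-negative, every point certified safe under the larger `beta` is also certified safe under the smaller one, so a tighter bound can only keep or enlarge the safe set in this sketch.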
