Towards Representation Learning with Tractable Probabilistic Models


Aug 08, 2016

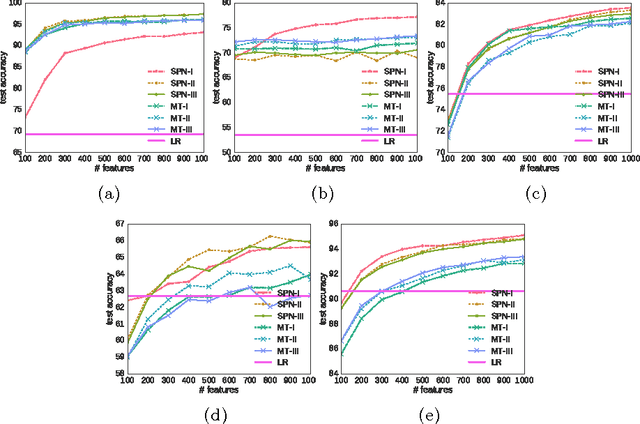

Probabilistic models learned as density estimators can be exploited for representation learning, besides serving as toolboxes for answering inference queries. However, how to extract useful representations depends heavily on the particular model involved. We argue that tractable inference, i.e. inference that can be computed in polynomial time, can enable general schemes to extract features from black box models. We plan to investigate how Tractable Probabilistic Models (TPMs) can be exploited to generate embeddings by random query evaluations. We devise two experimental designs to assess and compare different TPMs as feature extractors in an unsupervised representation learning framework. We report experimental results on standard image datasets, applying this method to Sum-Product Networks and Mixtures of Trees as the tractable models that generate the embeddings.
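To make the idea of generating embeddings by random query evaluations concrete, here is a minimal sketch under stated assumptions. It is not the authors' implementation: as a stand-in tractable model it uses a small mixture of fully factorized Bernoulli distributions with randomly drawn parameters (rather than a learned Sum-Product Network or Mixture of Trees), and it assumes each random query is the log marginal probability of a sample restricted to a random subset of variables. The names `log_marginal` and `random_query_embedding` are hypothetical.

```python
import math
import random


def log_marginal(x, scope, weights, probs):
    """Exact log p(x[scope]) under a mixture of factorized Bernoullis.

    Variables outside `scope` are marginalized out. For this model that
    amounts to dropping their factors from every mixture component, so the
    query costs time linear in the number of components and scope size.
    """
    terms = []
    for w, p in zip(weights, probs):
        log_lik = sum(math.log(p[i] if x[i] == 1 else 1.0 - p[i]) for i in scope)
        terms.append(math.log(w) + log_lik)
    # Log-sum-exp over components, stabilized by subtracting the maximum.
    m = max(terms)
    return m + math.log(sum(math.exp(t - m) for t in terms))


def random_query_embedding(x, scopes, weights, probs):
    """Embed x as one log-marginal value per random query scope (assumed scheme)."""
    return [log_marginal(x, s, weights, probs) for s in scopes]


# Toy setup: parameters are random placeholders, not a learned model.
rng = random.Random(0)
n_vars, n_components, n_queries = 8, 3, 5
raw = [rng.random() + 0.1 for _ in range(n_components)]
weights = [r / sum(raw) for r in raw]  # mixture weights summing to 1
probs = [[rng.uniform(0.1, 0.9) for _ in range(n_vars)] for _ in range(n_components)]

# Each query is a random subset of 3 variables; the same queries are reused
# for every sample so that embedding dimensions are comparable.
scopes = [rng.sample(range(n_vars), 3) for _ in range(n_queries)]

x = [rng.randint(0, 1) for _ in range(n_vars)]  # one binary sample
embedding = random_query_embedding(x, scopes, weights, probs)
```

In this sketch the embedding dimension equals the number of random queries, and the model is only touched through its query interface, which is what lets the same scheme apply to any TPM that answers marginal queries exactly.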
